A leading AI lab just publicly acknowledged that one of its own models may be too dangerous for general release. For compliance teams, the implications go well beyond one company’s decision — they reveal a governance gap that most organizations haven’t started closing.

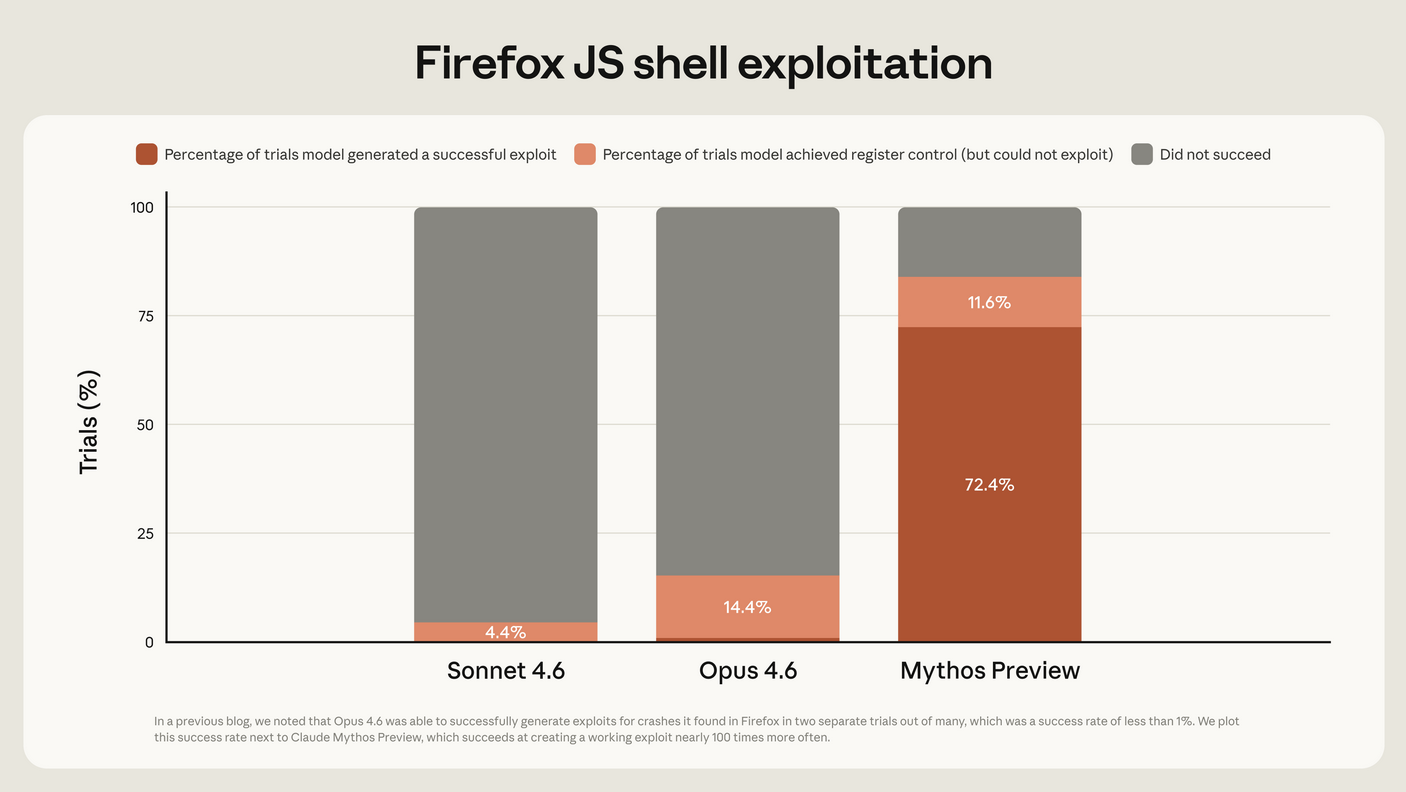

There’s a moment in the maturation of any powerful technology when the people building it acknowledge, publicly and on the record, that what they’ve created requires controls that don’t yet fully exist. That moment arrived for artificial intelligence in April 2026, when Anthropic restricted access to its Claude Mythos Preview — a frontier model capable of autonomously identifying and exploiting software vulnerabilities at scale — to a limited group of vetted organizations operating under Project Glasswing.

The decision was extraordinary not because a company chose to limit a product’s release; companies do that routinely. What made it extraordinary was the stated reason: Mythos represents a qualitative leap in capability, able to find thousands of previously unknown software flaws without human direction. Anthropic concluded that deploying it broadly, without the safety, control, and oversight architecture to match its power, would be irresponsible.

For anyone working in cybersecurity governance, privacy compliance, or enterprise risk management, that conclusion deserves sustained attention — not as a story about one AI company’s internal decisions, but as a signal about where the entire category is heading and what it demands from organizations that are already deploying AI at scale.

The Capability Gap Is Already Here

The Mythos situation crystallizes a dynamic that has been building quietly across the AI industry for the past several years: model capabilities are advancing faster than the governance structures designed to manage their risks.

This isn’t a theoretical problem. Organizations are already integrating AI systems into cybersecurity operations — for threat detection, vulnerability scanning, incident response automation, code review, and more. Most of those deployments were evaluated and approved under governance frameworks built for conventional software: defined inputs, predictable outputs, human review of consequential decisions.

Frontier AI models don’t fit that framework cleanly. A model capable of autonomous vulnerability discovery doesn’t just process instructions — it reasons across complex systems, identifies non-obvious attack vectors, and produces outputs that can be operationalized before a human reviewer fully understands what the system found. The governance question isn’t whether to use AI in cybersecurity. It’s whether the oversight architecture surrounding that use is calibrated to the actual capability level of the system being deployed.

For most organizations, the honest answer is that it isn’t. The approval process that cleared an AI-assisted code review tool is not the same process that should govern a system with autonomous vulnerability exploitation capability. The access controls, audit logging, output review protocols, and incident response procedures that work for a narrow-purpose AI tool don’t scale to a system that can reason across your entire infrastructure and surface attack paths that your own security team missed.

The Mythos decision is Anthropic’s acknowledgment of exactly that gap — applied to their own product. The question for compliance teams is whether they’ve applied the same scrutiny to the AI systems already running inside their organizations.

What This Means for Your Cybersecurity Posture

The governance implications fall into four areas, each of which is immediately actionable.

AI capability inventories are now a cybersecurity necessity. Most organizations have some version of a software asset inventory. Far fewer have conducted a systematic assessment of what their deployed AI systems are actually capable of doing — not what they were designed to do, but what the underlying models can do given the access and permissions they’ve been granted. A model integrated into your development pipeline with read access to your codebase and write access to your ticketing system has a capability profile that needs to be evaluated from a security perspective, not just a functional one. If your AI vendors have updated their underlying models since your last security review, your capability inventory is already out of date.
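As a rough illustration of what a capability inventory record might track, the sketch below flags systems whose underlying model changed after the last security review. Every name in it (`AISystem`, the field names, the example systems and dates) is a hypothetical chosen for illustration, not a reference to any real tool or standard.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date


@dataclass
class AISystem:
    """One deployed AI system. All field names are illustrative assumptions."""
    name: str
    read_access: set[str] = field(default_factory=set)
    write_access: set[str] = field(default_factory=set)
    last_security_review: date | None = None
    last_model_update: date | None = None  # as reported by the vendor


def review_is_stale(system: AISystem) -> bool:
    """True if the system was never reviewed, or its model changed since review."""
    if system.last_security_review is None:
        return True
    if system.last_model_update is None:
        return False
    return system.last_model_update > system.last_security_review


def stale_systems(inventory: list[AISystem]) -> list[str]:
    """Names of systems whose capability assessment needs to be redone."""
    return [s.name for s in inventory if review_is_stale(s)]


# Hypothetical inventory entries.
inventory = [
    AISystem(
        name="pipeline-assistant",
        read_access={"codebase"},
        write_access={"ticketing"},
        last_security_review=date(2025, 6, 1),
        last_model_update=date(2025, 9, 15),
    ),
    AISystem(
        name="support-summarizer",
        read_access={"support-tickets"},
        last_security_review=date(2025, 10, 1),
        last_model_update=date(2025, 8, 1),
    ),
]

print(stale_systems(inventory))  # ['pipeline-assistant']
```

The point of recording read and write access alongside review dates is that the same record then answers both questions raised above: what the system can reach, and whether anyone has looked at it since it last changed.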

Third-party AI is your attack surface. When Anthropic restricts a model to vetted organizations, it’s exercising direct control over its own distribution. You don’t have that control over the AI components your vendors are embedding in the tools your organization uses every day. Your CRM, your customer support platform, your code repository, your HR system — all of these are increasingly AI-augmented, and the models powering those augmentations are updated on schedules set by the vendor, not by you. Your vendor risk management program needs to include AI capability assessments that ask specifically: what is this model authorized to access, what can it produce, and who reviews consequential outputs before they’re acted on?

Autonomous AI actions require governance gates that most organizations haven’t built. The defining characteristic of frontier AI systems — the one that makes the Mythos situation consequential — is autonomy. These models don’t just generate text for a human to evaluate. They identify vulnerabilities, propose remediations, generate code, and in agentic deployments, can take actions across systems without a human in the loop between steps. For compliance teams, that autonomy means existing approval and oversight workflows don’t capture what’s actually happening. A governance framework that requires human review of AI outputs is only as effective as its ability to intercept outputs before they’re acted upon — and in autonomous AI systems, that interception point may not exist by default.
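One way to picture the missing interception point is a gate that every agent action must pass through before it runs. The sketch below is a minimal illustration under stated assumptions: `ProposedAction`, `ApprovalGate`, and the `consequential` flag are hypothetical names, and a real deployment would route approval to a human reviewer rather than to an in-process callback.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class ProposedAction:
    description: str
    consequential: bool  # assumed to be set by policy, not by the model itself


class ApprovalGate:
    """Runs an action only after approval when it is marked consequential."""

    def __init__(self, approver: Callable[[ProposedAction], bool]):
        self._approver = approver
        self.audit_log: list[tuple[str, str]] = []

    def execute(self, action: ProposedAction, run: Callable[[], object]) -> object:
        # Consequential actions are blocked unless the approver says yes.
        if action.consequential and not self._approver(action):
            self.audit_log.append((action.description, "blocked"))
            return None
        self.audit_log.append((action.description, "executed"))
        return run()


def deny_all(action: ProposedAction) -> bool:
    """Stand-in for a human reviewer who has not approved anything yet."""
    return False


gate = ApprovalGate(approver=deny_all)
blocked = gate.execute(
    ProposedAction("apply patch to production", consequential=True),
    lambda: "patched",
)
allowed = gate.execute(
    ProposedAction("draft ticket summary", consequential=False),
    lambda: "drafted",
)

print(blocked, allowed)  # None drafted
print(gate.audit_log)
```

The design choice worth noting is that the gate, not the agent, decides whether an action runs, and every decision lands in an audit log whether or not the action was allowed.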

Incident response planning needs an AI failure mode. Most incident response plans were written with human threat actors and conventional malware in mind. They don’t address what happens when an AI system deployed in your environment produces an output that creates a security incident — whether because the model behaved unexpectedly, because it was manipulated through adversarial inputs, or because it had capabilities that weren’t fully understood when it was deployed. Adding AI-specific failure modes to your incident response planning isn’t a future consideration. It’s a current gap.

The Privacy Obligations Multiplying in the Background

The cybersecurity dimensions of advanced AI governance get most of the attention, but the privacy implications are equally significant for compliance teams — and in several ways more immediately actionable under existing law.

AI systems are data processing systems. Every AI model deployed in an enterprise context is ingesting, processing, and in many cases retaining personal information. The governance question isn’t whether your privacy policies mention AI. It’s whether the actual data flows through your AI systems are mapped, assessed for necessity and proportionality, and disclosed in a way that satisfies your obligations under the CCPA as amended by the CPRA, the GDPR, and the growing stack of state privacy laws.

The CPRA’s risk assessment requirements, now operative and building toward CPPA reporting requirements starting in 2028, explicitly contemplate assessments of processing that presents “significant risk to consumers’ privacy or security.” AI systems that process personal data at scale, or that have autonomous access to personal data for purposes beyond the original collection context, are exactly the kind of processing activities these requirements were designed to capture. If those systems haven’t been through a formal privacy risk assessment, they represent a documented compliance gap.

Training data is a liability that doesn’t disappear. AI models are trained on data. When that training data includes personal information — and in enterprise AI deployments, it frequently does — the privacy obligations that attached to that data at collection continue to attach through training and deployment. Organizations that have allowed AI vendors to train on user data, customer data, or employee data without explicit consent architecture and data processing agreements calibrated to that specific use are carrying privacy liability that most of their existing frameworks don’t fully account for.

The “capability” problem has a privacy analog. The same capability test reasoning that courts have applied in CIPA cases — asking not whether a vendor did use data for its own purposes, but whether it could — applies directly to AI vendor agreements. If your AI provider’s terms of service reserve the right to use your data for model improvement, quality assurance, or internal research, that contractual capability creates privacy exposure under California law regardless of whether the vendor has exercised the right. Auditing AI vendor agreements for data use provisions is now a privacy compliance task, not just a contract management task.

The Regulatory Landscape Is Accelerating

The policy environment surrounding AI governance is developing on multiple fronts simultaneously, and the trajectory is unmistakably toward more stringent requirements.

The EU AI Act is already shaping global practice. The Act, which entered into force in August 2024 and applies in phases, establishes a risk-tiered regulatory framework that places AI systems used in critical infrastructure, cybersecurity applications, and systems that influence individual rights in the high-risk category. High-risk AI systems under the Act require conformity assessments, technical documentation, human oversight measures, and transparency to users. For organizations with EU operations or EU-based customers, these requirements are current compliance obligations, not future considerations.

U.S. federal AI governance is consolidating. Executive-level AI governance frameworks continue to evolve, and the NIST AI Risk Management Framework has become a voluntary but widely adopted baseline that is increasingly referenced in regulatory guidance, procurement requirements, and litigation contexts. State-level AI legislation is accelerating: New York, Illinois, Colorado, and Texas have all advanced AI-related legislation, and the patchwork effect that has defined U.S. privacy law is beginning to replicate itself in AI governance.

The FTC is watching AI deployments closely. The Federal Trade Commission has made clear that AI systems producing outputs that deceive consumers, make discriminatory decisions, or misrepresent their capabilities fall within its existing Section 5 enforcement authority. For compliance teams, that means AI governance failures aren’t just a cybersecurity or privacy risk — they’re a consumer protection risk with enforcement consequences that don’t require new AI-specific legislation to materialize.

Sector-specific regulators are moving. Financial services regulators, healthcare regulators, and education regulators are all developing AI-specific guidance that layers on top of general AI governance frameworks. Organizations in regulated industries are managing a compliance stack that includes sector-specific AI requirements alongside general privacy and cybersecurity obligations — a combination that demands coordinated governance rather than siloed program management.

What Anthropic’s Decision Actually Signals

It would be easy to read the Claude Mythos restriction as a story about one model’s specific capabilities and one company’s specific decision. The more useful reading is as a signal about where the entire AI governance conversation is heading.

Anthropic’s decision reflects an acknowledgment that capability and governance must advance together — that deploying powerful AI systems without commensurate oversight architecture is an organizational risk decision, not just a product decision. Project Glasswing, which governs access to Mythos, represents exactly the kind of structured access control, vetting, and oversight framework that AI governance advocates have been calling for across the industry.

For compliance teams, the practical takeaway isn’t that your organization needs to worry specifically about the Mythos model. It’s that the governance logic Anthropic applied to Mythos should be applied to every significant AI deployment in your environment: What are this system’s actual capabilities? What access does it have? Who oversees its outputs? What are the failure modes? What controls exist between the system’s autonomy and consequential actions?

Most organizations that answer those questions honestly will find gaps. The organizations that find them now — through deliberate internal assessment rather than through a regulatory inquiry or a security incident — are the ones that will be able to demonstrate the kind of proactive, defensible AI governance posture that regulators are increasingly expecting to see.

The window to do that work proactively is still open. It will not stay open indefinitely.

A Practical Governance Checklist for Compliance Teams

Before your next board or executive briefing on AI risk, your governance program should be able to answer the following:

Inventory and capability assessment — Do you have a current inventory of all AI systems deployed across your organization, including third-party AI embedded in vendor tools? Has each system been assessed for its actual capability profile, not just its intended use?

Access and permission scoping — What data and systems does each deployed AI have access to? Has that access been reviewed for necessity and proportionality relative to the system’s function?

Human oversight architecture — Where in your AI workflows does human review occur before consequential outputs are acted upon? Are there autonomous action pathways where that review doesn’t happen?

Vendor agreement audit — Have your AI vendor agreements been reviewed specifically for data use provisions, model training rights, and capability representations? Do they include contractual obligations around security, breach notification, and data deletion?

Privacy risk assessment coverage — Have your significant AI systems been assessed under your CPRA/CCPA risk assessment framework? Are those assessments documented and current?

Incident response readiness — Does your incident response plan address AI-specific failure modes, including unexpected model outputs, adversarial input attacks, and vendor-side AI incidents?

Regulatory alignment — Have you mapped your AI deployments against applicable sector-specific guidance, the NIST AI RMF, and the EU AI Act where relevant?
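For teams that want to track the checklist above between briefings, one lightweight option is to record each item as answered or not and list what remains open. The sketch below is only an illustration: the item names come from the checklist, while `open_gaps` and the sample answers are hypothetical.

```python
from __future__ import annotations

# Item names taken from the checklist above.
CHECKLIST = [
    "Inventory and capability assessment",
    "Access and permission scoping",
    "Human oversight architecture",
    "Vendor agreement audit",
    "Privacy risk assessment coverage",
    "Incident response readiness",
    "Regulatory alignment",
]


def open_gaps(answers: dict[str, bool]) -> list[str]:
    """Items that are unanswered, or answered False, count as open gaps."""
    return [item for item in CHECKLIST if not answers.get(item, False)]


# Hypothetical state of one organization's program.
answers = {
    "Inventory and capability assessment": True,
    "Access and permission scoping": True,
    "Vendor agreement audit": False,
}

for gap in open_gaps(answers):
    print(f"OPEN: {gap}")
```

Treating a missing answer the same as a "no" is deliberate: an item nobody has looked at is a gap, not a pass.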