Democrats Build Secret Coordination Network to Counter AI Industry Influence at State Level

As artificial intelligence continues to reshape industries and spark intense regulatory debates, a small but influential group of Democratic state lawmakers has created a private backchannel to align their efforts and push back against heavy lobbying from tech giants. New York State Assembly Member Alex Bores, who is running for Congress in New York’s 12th […]

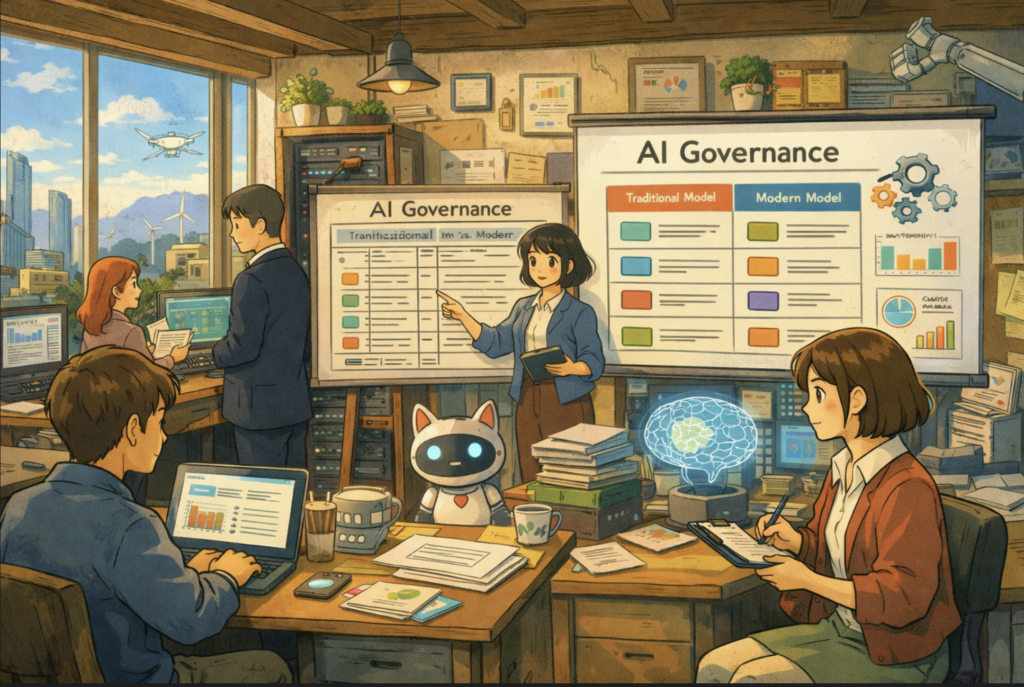

Colorado’s AI Law Do-Over: What the Revision Reveals About Where Regulation Is Heading

Colorado Just Rewrote Its AI Law. Here’s What Actually Changed. Colorado has been trying to get this right for a while. After a high-profile legislative near-miss in 2024 — when Governor Jared Polis signed SB 205 into law and then almost immediately called for its revision — the state’s AI Policy Workgroup has come back […]

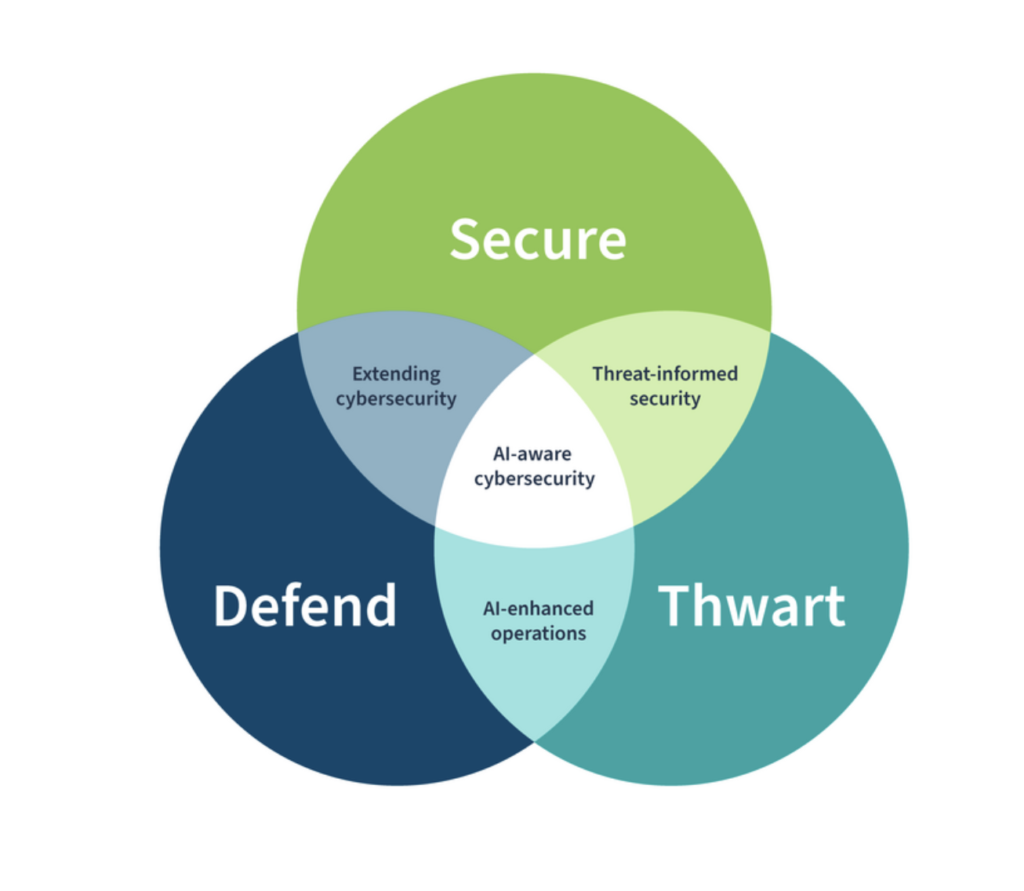

NIST Report Spotlights Key Obstacles in Monitoring Deployed AI Systems Amid Broader Cybersecurity and Privacy Efforts

The National Institute of Standards and Technology (NIST), an agency within the U.S. Department of Commerce, has released a significant new publication titled NIST AI 800-4: Challenges to the Monitoring of Deployed AI Systems. This report delves into the practical difficulties organizations encounter when attempting to oversee artificial intelligence systems in live, real-world environments. As […]

The Courts May Write America’s First Real AI Safety Rules

Artificial intelligence regulation in the United States has been stuck in legislative limbo for nearly three years. Congress debates frameworks, states experiment with their own rules, and federal agencies issue guidance—but none of these efforts has produced a comprehensive national standard for AI safety. Increasingly, however, it may not be lawmakers who shape the first […]

The Consent Trap: Why Asking Kids to Opt Out of AI Is Already Too Late

Every major privacy framework built in the last two decades shares a foundational assumption: that people, when given adequate notice and a meaningful choice, can protect themselves. Read the disclosure. Click the button. Manage your preferences. The model is tidy, legally defensible, and built around a vision of the individual as an autonomous agent capable […]

AI Privacy Issues: Real Legal Examples of the Risks Artificial Intelligence Poses to Your Data

Artificial intelligence is no longer a distant technological concept. It is embedded in our smartphones, our hospitals, our courtrooms, our financial systems, and our most intimate conversations. Yet as AI becomes ubiquitous, it carries with it a constellation of serious, often misunderstood privacy risks that have begun materializing in legal cases, regulatory enforcement actions, and […]

61 Privacy Regulators vs. Synthetic Reality: Warning on AI-Generated Imagery

A coalition of 61 privacy and data protection authorities released a joint statement that reads less like a policy memo and more like a coordinated enforcement signal. The target is not “AI” in the abstract. It’s a specific class of harm that has accelerated from niche abuse to mainstream risk: AI systems that can generate […]

Your AI Just Bought Something. Now What? The Legal and Privacy Minefield of Agentic Commerce

Picture this: You wake up on a Tuesday morning, check your email, and find three order confirmation receipts for items you have no memory of buying. Your AI shopping agent — the one you set up last month and promptly forgot about — has been busy overnight. It found a sale, matched your previous preferences, […]

The Diplomat Taking a Wrecking Ball to Europe’s Internet Rules: Who Is Sarah B. Rogers and Why Does She Matter to Privacy Professionals?

She is not a privacy professional in any conventional sense of the term. She has never chaired a data protection authority, drafted a consent framework, or presented at an IAPP conference. But if you work in privacy compliance, data governance, or digital rights, Sarah B. Rogers is a consequential figure operating in our world and […]

Algorithmic Accountability: Inside Attorney General Tong’s Sweeping AI Enforcement Blueprint for Connecticut

Artificial intelligence is no longer an abstract frontier technology. It is underwriting mortgages, screening tenants, filtering job applicants, approving insurance claims, determining creditworthiness, curating advertisements, and influencing how children interact online. On February 25, 2026, Connecticut Attorney General William Tong issued a sweeping memorandum clarifying a simple but powerful point: existing laws already apply to […]