The MIT AI Risk Navigator (airi-navigator.com) is an interactive web tool that unifies multiple datasets from the MIT AI Risk Initiative (AIRI) into one platform. Launched in early 2026, it lets users explore more than 1,700 documented AI risks, together with real-world incidents, governance responses, and mitigation strategies, all organized through a shared taxonomy.

Developed as a project of the MIT AI Risk Initiative at MIT FutureTech, with support from the Cambridge Boston Alignment Initiative (CBAI), the Navigator bridges previously siloed resources. It transforms fragmented AI risk research into actionable intelligence for researchers, policymakers, compliance officers, auditors, developers, and executives.

Foundation: The MIT AI Risk Repository

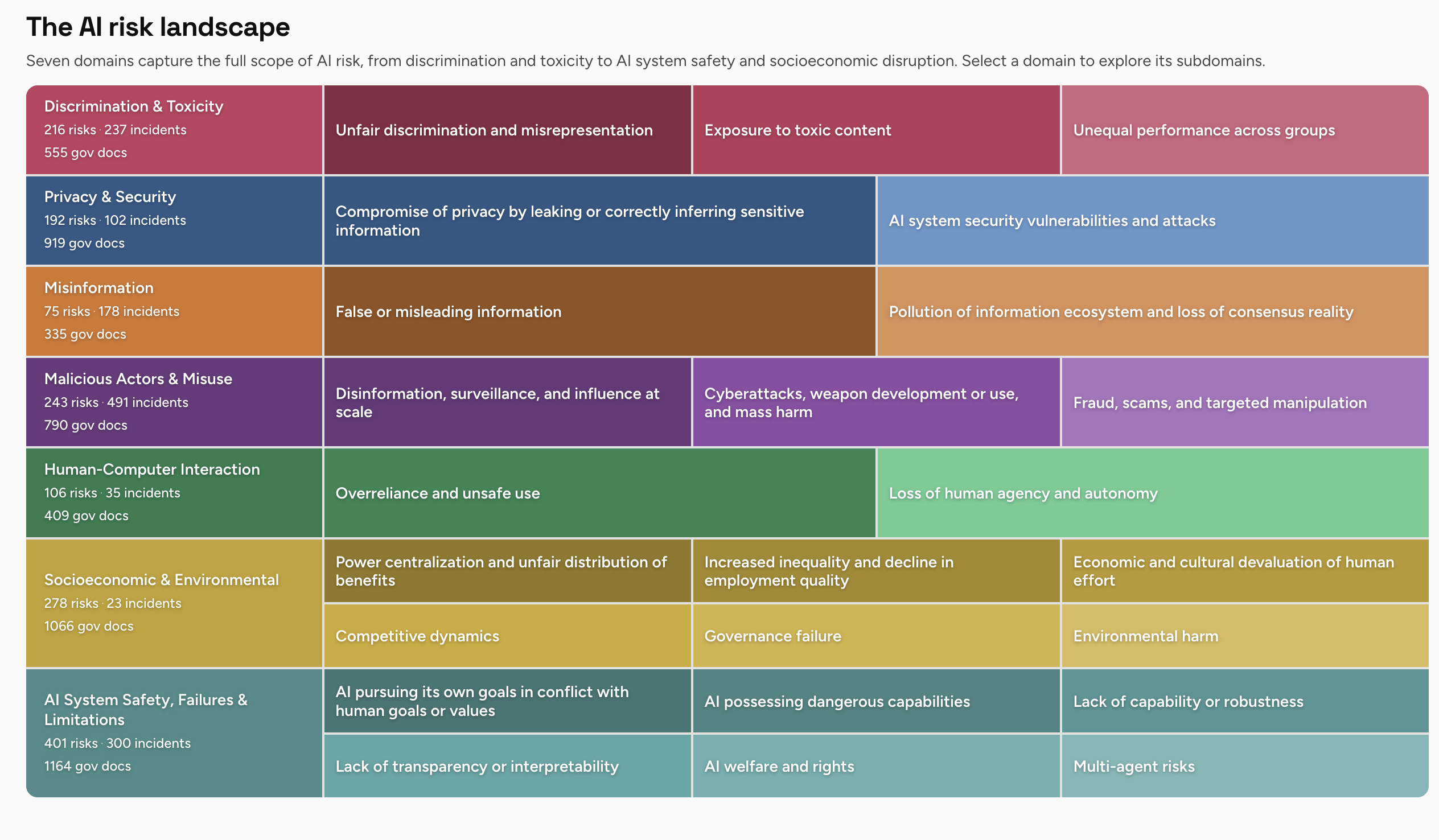

The Navigator builds directly on the MIT AI Risk Repository, a living database that has cataloged more than 1,700 AI risks extracted from 74+ frameworks and classifications. This repository includes three core elements:

- AI Risk Database: Detailed entries with sources, quotes, and references.

- Causal Taxonomy: Classifies risks by entity (Human, AI, Other), intent (Intentional/Unintentional), and timing (Pre/Post-deployment).

- Domain Taxonomy: Organizes risks into 7 high-level domains and 24 subdomains.

This meta-framework enables systematic comparison, gap analysis, and evidence-based decision-making.
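To make the two taxonomies concrete, here is a minimal Python sketch of how a single risk entry could be represented. The class names, field names, and the example entry (including its classification) are assumptions made for illustration; they are not the Repository's actual schema or data.

```python
from dataclasses import dataclass
from enum import Enum


class Entity(Enum):
    HUMAN = "Human"
    AI = "AI"
    OTHER = "Other"


class Intent(Enum):
    INTENTIONAL = "Intentional"
    UNINTENTIONAL = "Unintentional"


class Timing(Enum):
    PRE_DEPLOYMENT = "Pre-deployment"
    POST_DEPLOYMENT = "Post-deployment"


@dataclass(frozen=True)
class RiskEntry:
    """One hypothetical database row; field names are illustrative only."""
    title: str
    source: str
    # Causal Taxonomy: who or what causes the risk, whether it is intended, and when it occurs.
    entity: Entity
    intent: Intent
    timing: Timing
    # Domain Taxonomy: one of 7 domains, refined by one of 24 subdomains.
    domain: str
    subdomain: str


def causal_key(risk: RiskEntry) -> tuple[str, str, str]:
    """Return the (entity, intent, timing) triple used to group comparable risks."""
    return (risk.entity.value, risk.intent.value, risk.timing.value)


# Made-up entry: the classification below is an example, not taken from the Repository.
example = RiskEntry(
    title="Unequal performance across demographic groups",
    source="(hypothetical framework)",
    entity=Entity.AI,
    intent=Intent.UNINTENTIONAL,
    timing=Timing.POST_DEPLOYMENT,
    domain="Discrimination & Toxicity",
    subdomain="Unequal performance across groups",
)
print(causal_key(example))
```

Because every entry carries both a causal triple and a domain label, risks drawn from different frameworks can be grouped and compared on the same axes.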

The 7 Core AI Risk Domains

The Navigator’s visual interface lets users click into a domain to see an integrated view of its risks, incidents, governance coverage, and mitigations. The seven domains are:

1. Discrimination & Toxicity

Includes unfair discrimination and misrepresentation, exposure to toxic content (hate speech, violence, CSAM, extremism), and unequal performance across demographic groups. These risks arise from biased training data and design choices that amplify societal harms.

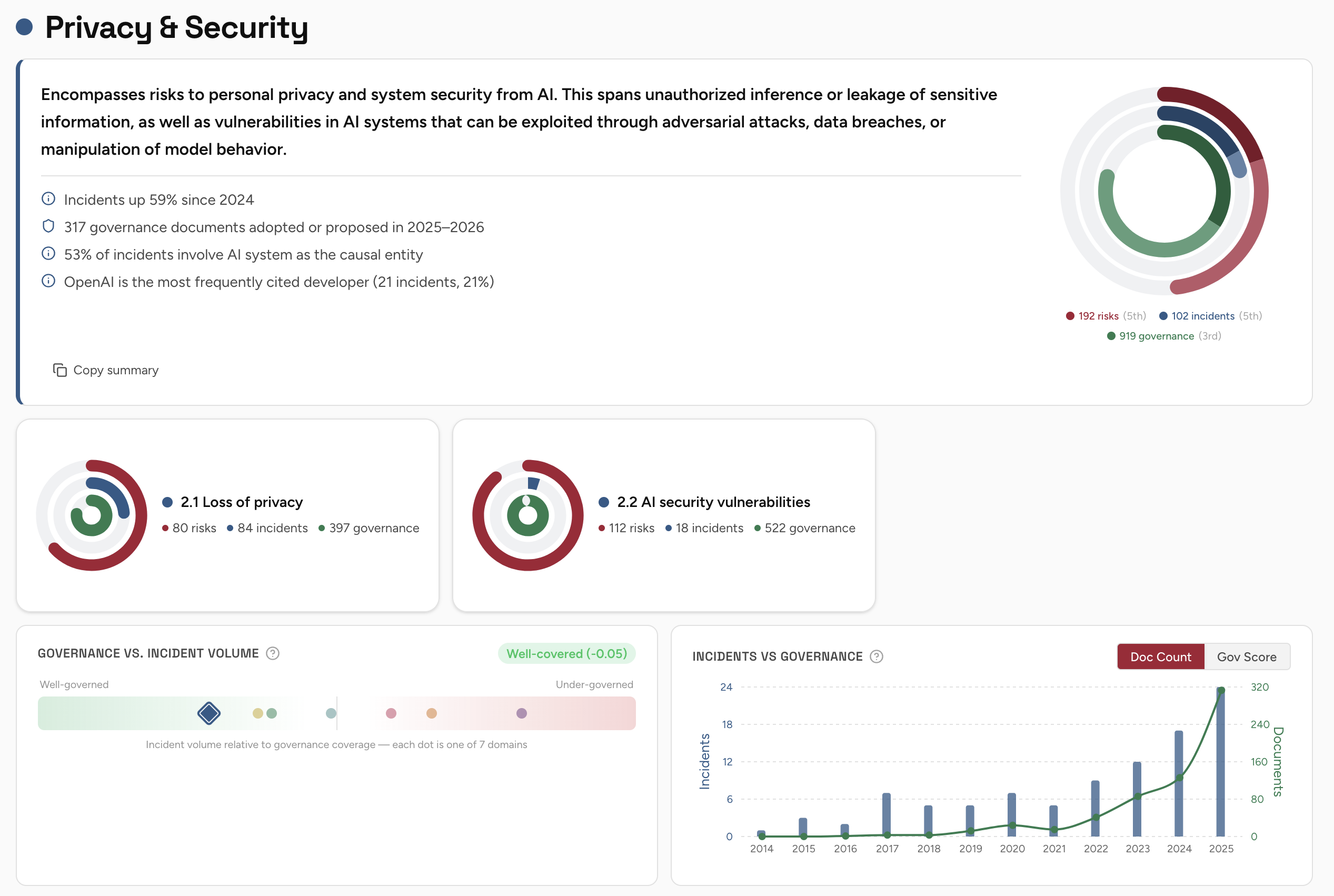

2. Privacy & Security

Covers compromise of privacy through data leaks or inference of sensitive information, plus AI system vulnerabilities and attacks that lead to breaches or manipulation.

3. Misinformation

Encompasses generation and spread of false information, plus pollution of the information ecosystem leading to filter bubbles and erosion of shared reality.

4. Malicious Actors & Misuse

Addresses disinformation campaigns, large-scale surveillance, cyberattacks, weapon development, fraud, scams, and targeted manipulation using AI tools.

5. Human-Computer Interaction

Focuses on overreliance, unsafe use, anthropomorphization of AI, and loss of human agency and autonomy.

6. Socioeconomic & Environmental

Includes power centralization, increased inequality, devaluation of human effort, competitive AI arms races, governance failures, and environmental impacts from energy-intensive training and hardware.

7. AI System Safety, Failures & Limitations

Covers goal misalignment, dangerous capabilities (such as deception and self-proliferation), lack of robustness, lack of transparency, multi-agent risks, and emerging questions around AI welfare and rights.

Key Interactive Features

The platform offers several practical tools:

- Recent Incidents: Pulled from the AI Incident Database, with examples like AI-generated sepsis alerts leading to inappropriate medical treatment (Feb 2026), GPS failures stranding delivery vans, viral deepfake videos sparking IP complaints, and AI coding agents publishing accusatory content.

- Governance Responses: Tracks hard law (e.g., the EU AI Act, which covers 21 risk areas), soft law (e.g., the NIST AI RMF), and national strategies. Users can see where coverage is missing across domains.

- Mitigations: Actionable strategies such as red teaming, AI watermarking, whistleblower protections, and content safety controls drawn from leading frameworks.

- Search, Filters & Visualizations: Global search, interactive charts showing risk distribution, incident timelines, and governance mapping.

Development and Technical Details

Created by Spencer Michaels during a Spring 2026 research fellowship at CBAI, the tool uses modern web technologies: React, Tailwind CSS, Nivo/Recharts for charts, and Upstash for search. As of late April 2026, it is at version 1.2.7 with ongoing updates. The Navigator makes complex datasets intuitive, turning abstract risks into explorable patterns.

Why the MIT AI Risk Navigator Matters

AI development is advancing rapidly, yet risk understanding and governance often lag. Traditional approaches treat risks in isolation; the Navigator connects them. Users can answer critical questions such as:

- Which real-world incidents align with theoretical risks?

- Do current regulations adequately cover high-priority expert concerns?

- Where are the biggest gaps in mitigation strategies?
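Questions like these come down to joining separate datasets on a shared domain label. The Python sketch below shows the idea under stated assumptions: the record layout, the function names, and the placeholder rows are all hypothetical, and nothing here reflects the Navigator's internal implementation or real data.

```python
from collections import defaultdict

# The seven domain names as listed in this article.
DOMAINS = [
    "Discrimination & Toxicity",
    "Privacy & Security",
    "Misinformation",
    "Malicious Actors & Misuse",
    "Human-Computer Interaction",
    "Socioeconomic & Environmental",
    "AI System Safety, Failures & Limitations",
]


def group_by_domain(records):
    """Map each domain to the names of the records tagged with it."""
    grouped = defaultdict(list)
    for record in records:
        grouped[record["domain"]].append(record["name"])
    return grouped


def coverage_report(tables):
    """Count, per domain, how many records each dataset contributes."""
    grouped = {label: group_by_domain(rows) for label, rows in tables.items()}
    return {
        domain: {label: len(grouped[label].get(domain, [])) for label in grouped}
        for domain in DOMAINS
    }


def gaps(report, label):
    """List the domains where the given dataset has no records at all."""
    return [domain for domain, counts in report.items() if counts[label] == 0]


# Placeholder rows only; these are not real risks, incidents, or policies.
tables = {
    "risks": [
        {"name": "placeholder risk A", "domain": "Misinformation"},
        {"name": "placeholder risk B", "domain": "Privacy & Security"},
    ],
    "incidents": [{"name": "placeholder incident 1", "domain": "Misinformation"}],
    "governance": [{"name": "placeholder policy X", "domain": "Privacy & Security"}],
    "mitigations": [],
}

report = coverage_report(tables)
print(gaps(report, "governance"))
```

With the placeholder rows, "Misinformation" has a risk and an incident but no governance record, so it shows up as a governance gap. That is the kind of pattern the shared taxonomy is meant to expose.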

For enterprises, it supports AI risk assessments, compliance with frameworks like the EU AI Act, and internal auditing. Policymakers gain evidence for balanced regulation. Researchers benefit from a centralized reference that accelerates discovery and synthesis.

The tool highlights systemic challenges: many risks stem from human choices in design and deployment, while others emerge from AI capabilities themselves. It also surfaces under-addressed areas like multi-agent risks and environmental impacts, which are gaining urgency as models scale.

By making AI risk management more transparent and structured, the Navigator promotes proactive rather than reactive governance. It encourages organizations to move beyond checkbox compliance toward genuine safety and responsibility.

Getting Started and Future Outlook

Visit https://www.airi-navigator.com/ to explore interactively. Pair it with the raw AI Risk Repository for downloadable spreadsheets and deeper analysis. The platform will continue evolving with new incidents, frameworks, and features.
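As a starting point for that deeper analysis, the sketch below counts risks per domain from a CSV export using only the Python standard library. The column names (`title`, `domain`) and the sample rows are assumptions; the real spreadsheet's headers will likely differ and should be checked before adapting this.

```python
import csv
import io
from collections import Counter

# Stand-in for an exported spreadsheet; headers and rows are hypothetical.
SAMPLE_CSV = """title,domain
placeholder risk A,Misinformation
placeholder risk B,Privacy & Security
placeholder risk C,Misinformation
"""


def count_by_column(csv_file, column):
    """Count rows per distinct value in `column`, skipping rows where it is empty or missing."""
    reader = csv.DictReader(csv_file)
    return Counter(row[column].strip() for row in reader if row.get(column))


counts = count_by_column(io.StringIO(SAMPLE_CSV), "domain")
for domain, n in counts.most_common():
    print(f"{domain}: {n}")
```

To run this against a real export, replace `io.StringIO(SAMPLE_CSV)` with a file opened via `open(path, newline="", encoding="utf-8")` and pass the export's actual domain column name.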

In an era where AI touches nearly every sector, from healthcare and finance to education and national security, tools like the MIT AI Risk Navigator are essential infrastructure. They help ensure that powerful technology serves humanity’s best interests rather than amplifying its worst vulnerabilities.

The Navigator is a step toward turning AI safety discourse from scattered warnings into structured, evidence-based action. Whether you are building AI systems, regulating them, or simply seeking to understand their implications, it offers a structured map of both the dangers and the paths toward safer deployment.