The rapid evolution of artificial intelligence is transforming how organizations operate, but it is also reshaping the cybersecurity landscape. The National Institute of Standards and Technology (NIST) has released a preliminary draft of guidelines designed to help organizations navigate these changes. Titled the Cybersecurity Framework Profile for Artificial Intelligence (NIST IR 8596, commonly called the Cyber AI Profile), the document applies NIST’s widely used Cybersecurity Framework (CSF 2.0) to AI-specific risks and opportunities.

“Regardless of where organizations are on their AI journey, they need cybersecurity strategies that acknowledge the realities of AI’s advancement,” said Barbara Cuthill, a NIST cybersecurity expert and co-author of the profile.

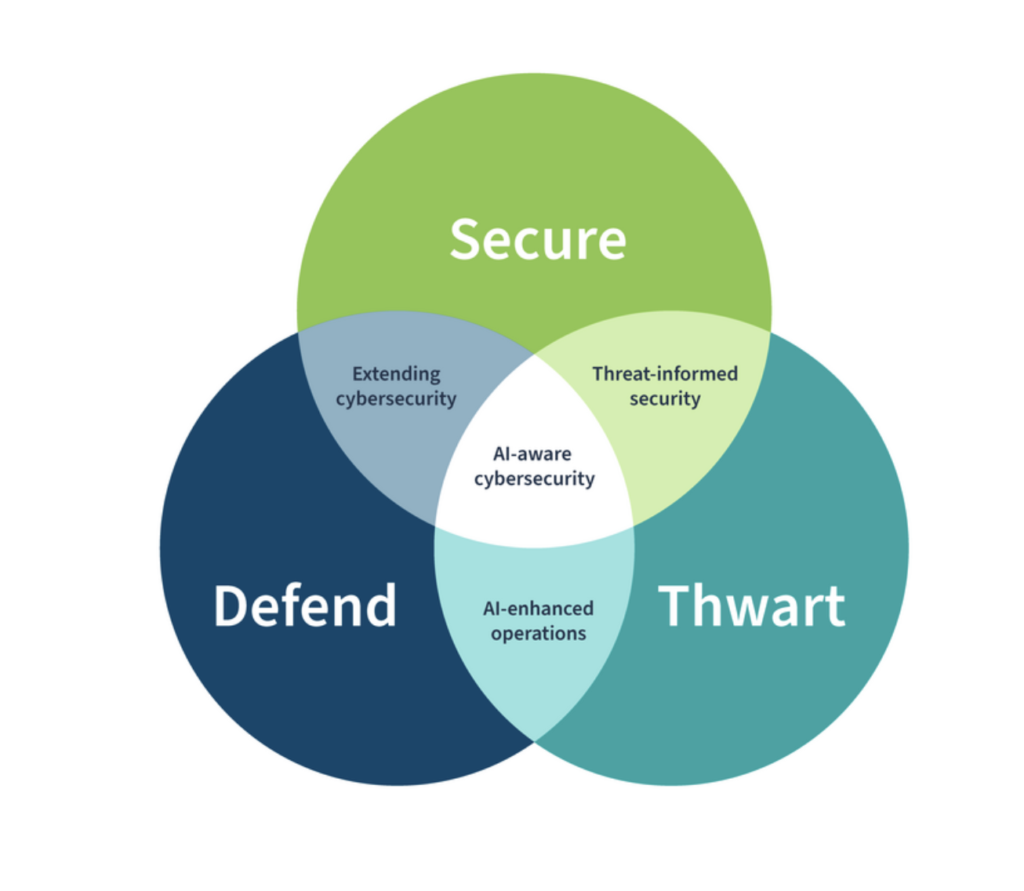

A Three-Pronged Approach to AI and Cybersecurity

The Cyber AI Profile centers on three overlapping focus areas, reflecting the several roles AI plays in cybersecurity: it can be a target, a tool for defense, and a weapon in the hands of adversaries.

1. Securing AI Systems

This focus area addresses the cybersecurity challenges of integrating AI into organizational infrastructure, including models, training data, and supply chains. AI systems often exhibit opaque, dynamic behavior that is harder to predict or verify than traditional software. Vulnerabilities can be inherent, stemming from training data or model architecture.

Key risks include:

- Data poisoning: Malicious alterations to training datasets that compromise model integrity.

- Adversarial examples: Crafted inputs designed to mislead AI outputs, such as evading detection in security scanners.

- Supply chain attacks: Compromises in third-party data, models, or tools, including issues with data provenance.

The profile prioritizes CSF 2.0 outcomes like asset inventory (identifying AI components), access controls (least privilege for AI agents), data protection (preventing leakage during inference), and continuous monitoring for anomalous behaviors. Recommendations include maintaining provenance records for datasets, sandboxing AI agents, and backing up validated models to enable recovery from poisoning.
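
To make the provenance and backup recommendations concrete, the following is a minimal sketch of one way a team might record a cryptographic digest for a dataset or validated model and later check that the artifact has not changed. The record fields and the function names (`make_provenance_record`, `matches_record`) are assumptions for illustration; the profile does not prescribe a format.

```python
import hashlib
import json
from datetime import datetime, timezone


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of a byte string as a hex string."""
    return hashlib.sha256(data).hexdigest()


def make_provenance_record(name: str, source: str, data: bytes) -> dict:
    """Build a provenance entry for one artifact (dataset or model).

    The field names here are hypothetical, chosen only for this example.
    """
    return {
        "name": name,
        "source": source,
        "sha256": sha256_hex(data),
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


def matches_record(record: dict, data: bytes) -> bool:
    """Return True if the artifact bytes still match the recorded digest."""
    return record["sha256"] == sha256_hex(data)


# Toy in-memory "dataset"; a real pipeline would hash files or object-store blobs.
clean = b"label,text\n0,hello\n1,world\n"
record = make_provenance_record("train-v1", "internal-export", clean)

# Simulate a poisoning attempt that appends a row after the record was made.
tampered = clean + b"1,injected row\n"

print(json.dumps(record, indent=2))
print(matches_record(record, clean))     # True
print(matches_record(record, tampered))  # False
```

A digest check of this kind only detects changes made after the record was created; it says nothing about whether the original data was trustworthy, which is why the source of each artifact is recorded alongside it.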

2. Conducting AI-Enabled Cyber Defense

Here, the guidelines highlight opportunities to leverage AI for strengthening cybersecurity operations, while acknowledging associated challenges. AI can process vast amounts of alerts, prioritize threats, correlate intelligence, and even automate parts of incident response—reducing false positives and enabling proactive defenses.

Examples of benefits include:

- Using AI for anomaly detection in networks or user behavior.

- Automating threat intelligence sharing via standards like STIX.

- Predictive analytics for vulnerability prioritization.
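
As a rough illustration of the first item, anomaly detection can be as simple as comparing new observations against a statistical baseline. The sketch below uses a z-score test on hypothetical hourly login counts; production systems typically use richer models, and the threshold of 3.0 is an assumption, not a value from the profile.

```python
from statistics import mean, stdev


def find_anomalies(baseline: list[float], observed: list[float],
                   threshold: float = 3.0) -> list[int]:
    """Return indexes in `observed` whose z-score against the baseline
    exceeds `threshold`."""
    mu = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation; needs >= 2 points
    if sigma == 0:
        # A flat baseline: anything different from it is unusual.
        return [i for i, x in enumerate(observed) if x != mu]
    return [i for i, x in enumerate(observed) if abs(x - mu) / sigma > threshold]


# Hypothetical hourly login counts for one user account.
baseline_logins = [12, 15, 11, 14, 13, 12, 16, 14]
today = [13, 15, 90, 12]

print(find_anomalies(baseline_logins, today))  # [2]
```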

However, risks such as model drift (degradation over time), overreliance on AI outputs, or excessive autonomy in AI agents (e.g., executing code without oversight) must be managed. The profile emphasizes human-in-the-loop checks, maturity assessments before deployment, and reserving compute resources for defensive actions during incidents. High-priority CSF mappings include enhanced detection (event correlation) and protective technologies (AI-driven policy enforcement).
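
One way to picture a human-in-the-loop check is a gate that lets an AI agent run low-impact actions on its own but routes high-impact ones to an analyst. This is a minimal sketch under assumed names: the action categories in `HIGH_IMPACT`, the `ProposedAction` type, and the `dispatch` function are all hypothetical and not drawn from the profile.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical set of action kinds that must never run without approval.
HIGH_IMPACT = {"execute_code", "isolate_host", "delete_file"}


@dataclass
class ProposedAction:
    kind: str       # what the agent wants to do
    target: str     # the asset it wants to act on
    rationale: str  # the agent's explanation, shown to the reviewer


def dispatch(action: ProposedAction,
             approve: Callable[[ProposedAction], bool]) -> str:
    """Run low-impact actions automatically; ask a human for the rest."""
    if action.kind not in HIGH_IMPACT:
        return "executed"
    return "executed" if approve(action) else "blocked"


def deny_all(action: ProposedAction) -> bool:
    """Stand-in reviewer that rejects everything (for demonstration)."""
    return False


tag = ProposedAction("add_watchlist", "10.0.0.7", "repeated failed logins")
run = ProposedAction("execute_code", "srv-db-01", "apply emergency patch")

print(dispatch(tag, deny_all))  # executed
print(dispatch(run, deny_all))  # blocked
```

In practice the `approve` callback would open a ticket or prompt an analyst rather than return a fixed value; the point of the design is that the default path for high-impact actions is to stop.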

3. Thwarting AI-Enabled Cyberattacks

This area focuses on building resilience against threats amplified by AI, where adversaries use the technology for faster, more scalable, and sophisticated attacks.

Prominent risks include:

- AI-generated deepfakes for spear-phishing or social engineering.

- Automated malware obfuscation or polymorphic attacks that evade signatures.

- Autonomous AI agents orchestrating multi-stage exploits.

- Prompt injection or jailbreaking in large language models to bypass safeguards.

The guidelines stress proactive measures like adversarial training for models, zero-trust network segmentation, and monitoring for indicators of AI usage in attacks (e.g., unusually rapid reconnaissance). CSF priorities cover threat identification (hunting for AI-enhanced tactics), detection processes (flagging evasion techniques), and recovery testing against AI-scenario compromises.
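
The "unusually rapid reconnaissance" indicator can be approximated with a sliding-window counter. The sketch below flags a source that touches more distinct ports within a short window than a configured limit. The class name `ReconRateMonitor`, the window length, and the port limit are assumptions for illustration, and the code expects timestamps to arrive in non-decreasing order per source.

```python
from collections import defaultdict, deque


class ReconRateMonitor:
    """Flag sources that probe many distinct ports in a short time window."""

    def __init__(self, window_seconds: float = 10.0, max_distinct_ports: int = 20):
        self.window = window_seconds
        self.limit = max_distinct_ports
        # source address -> deque of (timestamp, port), oldest first
        self.events = defaultdict(deque)

    def observe(self, source: str, port: int, timestamp: float) -> bool:
        """Record one connection attempt; return True if the source now
        exceeds the distinct-port limit inside the window."""
        q = self.events[source]
        q.append((timestamp, port))
        # Drop events that have aged out of the window.
        while q and timestamp - q[0][0] > self.window:
            q.popleft()
        return len({p for _, p in q}) > self.limit


monitor = ReconRateMonitor(window_seconds=10, max_distinct_ports=5)

# A scanner hitting eight different ports in under a second.
scan_flags = [monitor.observe("203.0.113.9", 1000 + i, i * 0.1) for i in range(8)]
print(scan_flags)  # [False, False, False, False, False, True, True, True]

# A normal client reusing one port is never flagged.
web_flags = [monitor.observe("198.51.100.4", 443, float(i)) for i in range(8)]
print(any(web_flags))  # False
```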

Ultimately, the profile notes that as AI adoption grows, every organization will eventually need to address all three areas.

Building on Established Frameworks

The Cyber AI Profile is a “community profile” under CSF 2.0, joining others tailored for sectors like manufacturing and finance. It provides technology-neutral recommendations, with tables mapping AI-specific considerations to CSF’s six core functions: Govern, Identify, Protect, Detect, Respond, and Recover. Priorities (rated 1-3) help organizations allocate resources, and informative references link to resources like the NIST AI Risk Management Framework, OWASP AI security guides, MITRE ATLAS threat matrix, and ENISA reports.
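
To show how such a mapping might be used for planning, the sketch below groups a handful of considerations by CSF function and orders each group by priority. The entries and their priority values are invented for illustration and are not taken from the profile's tables; the code also assumes that a lower number means a more urgent item.

```python
from collections import defaultdict

# The six CSF 2.0 core functions, in the order the framework lists them.
CSF_FUNCTIONS = ["Govern", "Identify", "Protect", "Detect", "Respond", "Recover"]

# Hypothetical entries: the priority values are made up for this example.
considerations = [
    {"function": "Protect", "note": "Sandbox AI agents", "priority": 2},
    {"function": "Identify", "note": "Inventory AI models and datasets", "priority": 1},
    {"function": "Protect", "note": "Least-privilege access for AI agents", "priority": 1},
    {"function": "Recover", "note": "Back up validated models", "priority": 2},
    {"function": "Detect", "note": "Monitor for anomalous model behavior", "priority": 1},
]


def plan_by_function(items: list[dict]) -> dict[str, list[dict]]:
    """Group items by CSF function and sort each group by priority
    (assumption: 1 is the most urgent)."""
    grouped = defaultdict(list)
    for item in items:
        if item["function"] not in CSF_FUNCTIONS:
            raise ValueError(f"Unknown CSF function: {item['function']}")
        grouped[item["function"]].append(item)
    return {
        fn: sorted(grouped[fn], key=lambda i: i["priority"])
        for fn in CSF_FUNCTIONS
        if fn in grouped
    }


plan = plan_by_function(considerations)
for fn, items in plan.items():
    for item in items:
        print(f"{fn:9} P{item['priority']}  {item['note']}")
```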

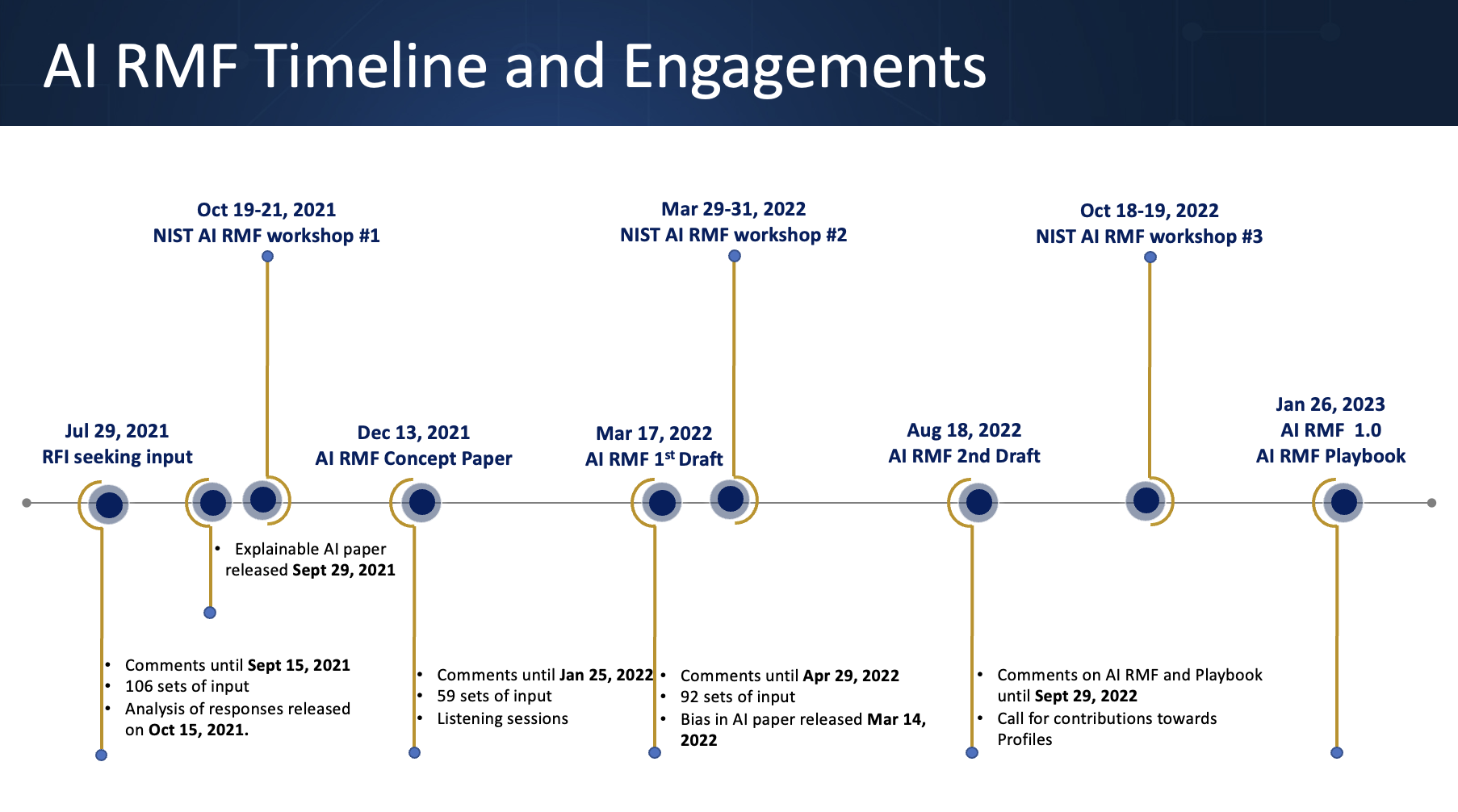

Developed over a year with input from more than 6,500 community members, gathered through workshops and virtual sessions, the draft builds on a February 2025 concept paper and aligns with ongoing NIST efforts, such as control overlays for SP 800-53.

Call for Public Input and Next Steps

NIST is seeking public comments on this preliminary draft until January 30, 2026. Stakeholders can submit feedback via a template to cyberaiprofile@nist.gov. A virtual workshop is planned for January 14, 2026, to discuss the document.

When finalized (expected in 2026 after an initial public draft), the profile will serve as a living tool to boost organizational confidence in adopting AI securely.

“The Cyber AI Profile is all about enabling organizations to gain confidence on their AI journey,” Cuthill said. “We hope it will help them feel equipped to have conversations about how their cybersecurity environment will change with AI and to augment what they are already doing with their cybersecurity programs.”

As AI continues to permeate critical systems—from predictive maintenance to threat hunting—these guidelines offer a timely roadmap for balancing innovation with robust defense in an increasingly AI-driven threat landscape. Organizations are encouraged to review the draft and contribute to shaping this evolving standard.

Comparison with Other Key NIST Guidelines

| Guideline | Release Date | Primary Focus | Relation / Key Differences from Cyber AI Profile |
|---|---|---|---|
| Cybersecurity Framework (CSF) 2.0 | February 2024 | General organizational cybersecurity risk management across any technology or sector | Core foundation framework; Cyber AI Profile is a tailored “community profile” that applies CSF 2.0 specifically to AI-related risks and opportunities. |
| AI Risk Management Framework (AI RMF 1.0) | January 2023 | Managing risks to individuals, organizations, and society from AI (including bias, privacy, safety, and trustworthiness) | Complementary but broader; focuses on trustworthy AI lifecycle rather than cybersecurity. Cyber AI Profile references AI RMF extensively for risk management but narrows scope to cybersecurity using CSF structure. |
| SP 800-53 Rev. 5 (with ongoing AI control overlays) | September 2020 (Rev. 5) | Security and privacy controls catalog for federal information systems | Control-level implementation guidance; Cyber AI Profile aligns with planned AI-specific control overlays for SP 800-53, providing higher-level organizational mapping rather than detailed controls. |