The Privacy Reckoning That Agentic AI Cannot Escape

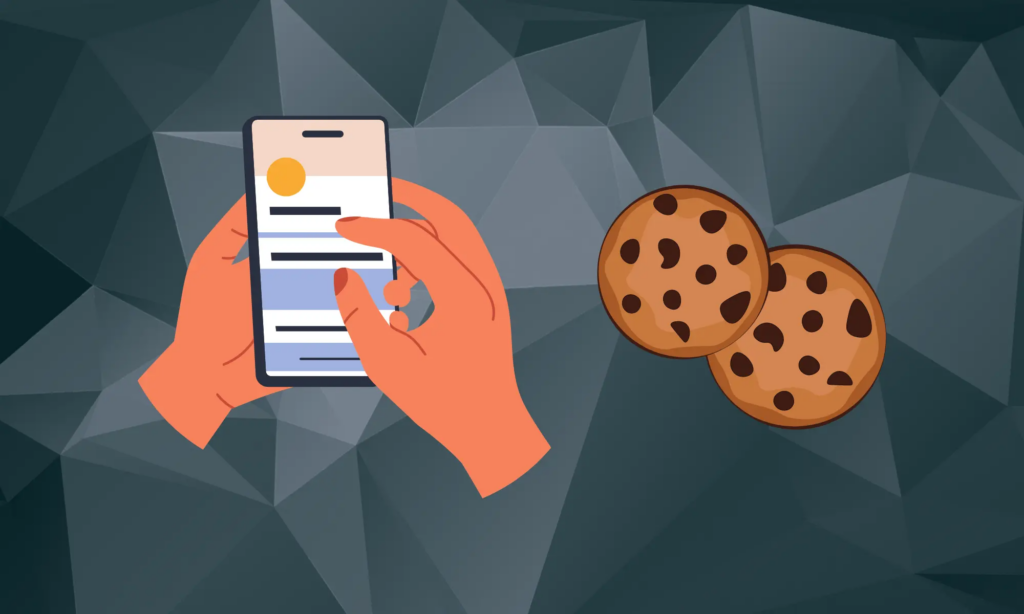

There is a moment in the lifecycle of every transformative technology when the gap between what it can do and what it should do becomes impossible to ignore. For agentic AI, that moment arrived earlier than most people expected — and it arrived, in part, because of a project called OpenClaw. OpenClaw — an open-source, […]

U.S. Treasury Steps In to Help Financial Sector Navigate AI Adoption

The U.S. Department of the Treasury has released two new resources designed to help financial institutions adopt artificial intelligence more safely and consistently, signaling a growing federal appetite for practical, sector-specific AI guidance rather than sweeping top-down regulation. The two resources — an Artificial Intelligence Lexicon and a Financial Services AI Risk Management Framework […]

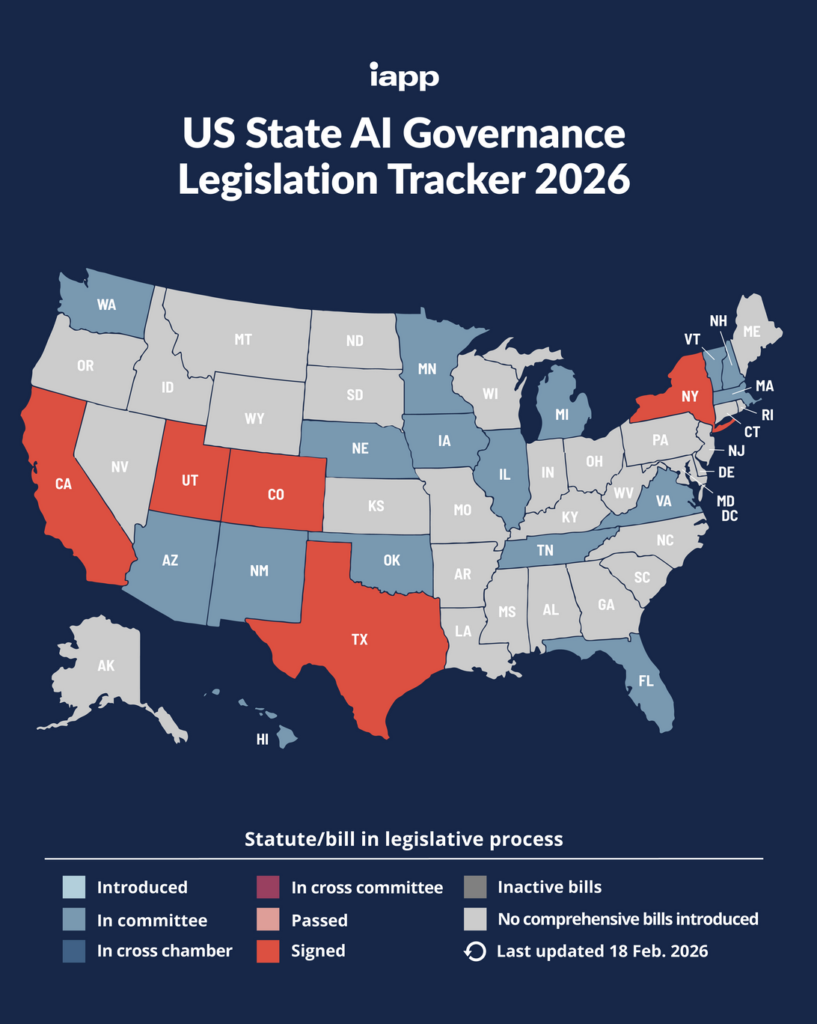

IN-DEPTH ANALYTICAL REPORT U.S. STATE ARTIFICIAL INTELLIGENCE GOVERNANCE LAWS

California • Colorado • Utah • Texas. Obligations, Compliance Strategy, Cross-State Conflicts & Enforcement. February 2026 | For Legal & Compliance Professionals. Executive Summary: Overview of the U.S. State AI Regulatory Landscape. The United States is witnessing an unprecedented wave of state-level artificial intelligence legislation. […]

Brussels Is Rewriting the Clock on High-Risk AI — and Privacy Teams Are Stuck in the Middle

If you’re responsible for AI governance in the EU, you probably circled 2 August 2026 on your calendar a long time ago. That was the date the high-risk provisions of the EU Artificial Intelligence Act were scheduled to apply. Legal teams built roadmaps around it. Product teams worked backward from it. Procurement clauses referenced it. […]

ACSC Releases Quantum Technology Primer on Computing: Essential Risks, Privacy Implications, and Compliance Strategies for the Quantum Era

The Australian Cyber Security Centre (ACSC) today published its latest Quantum Technology Primer: Computing, the second in an important series designed to help organizations navigate the rapidly evolving quantum landscape. Targeted at small and medium businesses, large organizations, critical infrastructure, and government entities, the primer delivers a clear, practical warning: quantum computing is advancing fast, […]

The New Rules of AI Governance: Why Traditional Models Can’t Keep Up

AI has moved from “innovation lab” curiosity to production-grade infrastructure. It drafts and summarizes content, flags fraud, automates customer support, routes claims, optimizes pricing, screens candidates, scores leads, and influences decisions that materially affect people and businesses. But while AI capabilities have accelerated, many governance programs still rely on an older operating model: periodic reviews, […]

When the Platform Is the Pipeline: What the Nvidia-YouTube Lawsuit Tells Us About AI’s Data Problem

There is a phrase that keeps appearing in AI litigation that I think deserves more attention than it usually gets: “without consent.” It shows up in almost every complaint filed against a technology company for using human-generated content to train an AI model, and it appears prominently in the class-action suit against Nvidia filed in […]

AI Governance 2.0: Why Having a Policy Is Not the Same as Being Protected

In 1903, the Wright brothers flew 120 feet at Kitty Hawk. By 1929, commercial aviation was booming and terrifying in equal measure: roughly one fatal accident occurred for every million miles flown. At today’s flight volumes, that rate would produce around 7,000 fatal crashes per year. What changed aviation was not a thicker rulebook. It […]

Your Data, Their Model: Connecticut Grants Consumers New Power Over AI

As the intersection of artificial intelligence and personal privacy becomes a primary battleground for regulators, Connecticut has positioned itself at the forefront of the movement. With the passage and subsequent expansion of its data privacy framework, the state has moved beyond simple consumer protection into the complex realm of machine learning and algorithmic accountability. The […]

Nvidia’s YouTube Scraping Lawsuit Exposes Critical Gaps in AI Training Data Governance

A new class action lawsuit filed against Nvidia Corp. and YouTube Inc. in the U.S. District Court for the Northern District of California has thrust the contentious issue of AI training data acquisition back into the spotlight. The complaint alleges that the chipmaker and AI powerhouse scraped YouTube content without authorization to train its Cosmos AI model, marking yet another flashpoint […]