Most Companies Think Their Employees Know How to Handle Personal Data. A Five-Year Global Study Says Otherwise.

The Global Privacy Culture Survey 2025 tracked privacy behavior across thousands of employees worldwide. What it found should concern every business owner: the gap between what your compliance program says and what your employees actually do is wider than you think — and it’s getting worse in the ways that matter most.

There is a version of privacy compliance that looks good on paper. Policies exist. Training has been completed. Someone in the organization has the title of Data Protection Officer or Privacy Officer. Boxes are checked, records are filed, and if a regulator asked for documentation tomorrow, your legal team could produce a folder.

And then there is what actually happens when an employee gets an unusual email from a customer asking about their data. Or when a developer pushes a new feature with a third-party integration that nobody formally reviewed. Or when the marketing team adopts a new analytics tool because it was free and useful and nobody thought to ask whether it needed to be in the records of processing.

Those two versions of compliance — the documented one and the lived one — are not the same thing. The distance between them is where most real privacy risk lives. And according to the most comprehensive longitudinal study of privacy culture conducted anywhere in the world, that distance is growing.

The Global Privacy Culture Survey 2025, published by Privacy Culture Ltd with support from international law firm Dentons, is now in its fifth year of tracking how employees across industries and geographies actually understand, feel about, and behave around privacy obligations. It is not a survey of DPOs or legal teams. It is a survey of the people who actually handle data every day — developers, customer service agents, HR professionals, marketers, salespeople, finance teams — and what it found in 2025 paints a picture that should get the attention of any business owner or executive who believes their training program has the problem solved.

The headline finding fits in six words: I care, but I can’t cope.

What the Survey Actually Measures — and Why It’s Different

Before getting into the findings, it’s worth understanding what this survey is and isn’t. It is not a quiz testing whether employees can recite GDPR definitions or cite regulatory provisions. It measures something more fundamental and more predictive: whether employees understand what’s expected of them in their specific role, feel equipped to act on that understanding, believe their organization takes privacy seriously, and know where to turn when they’re uncertain.

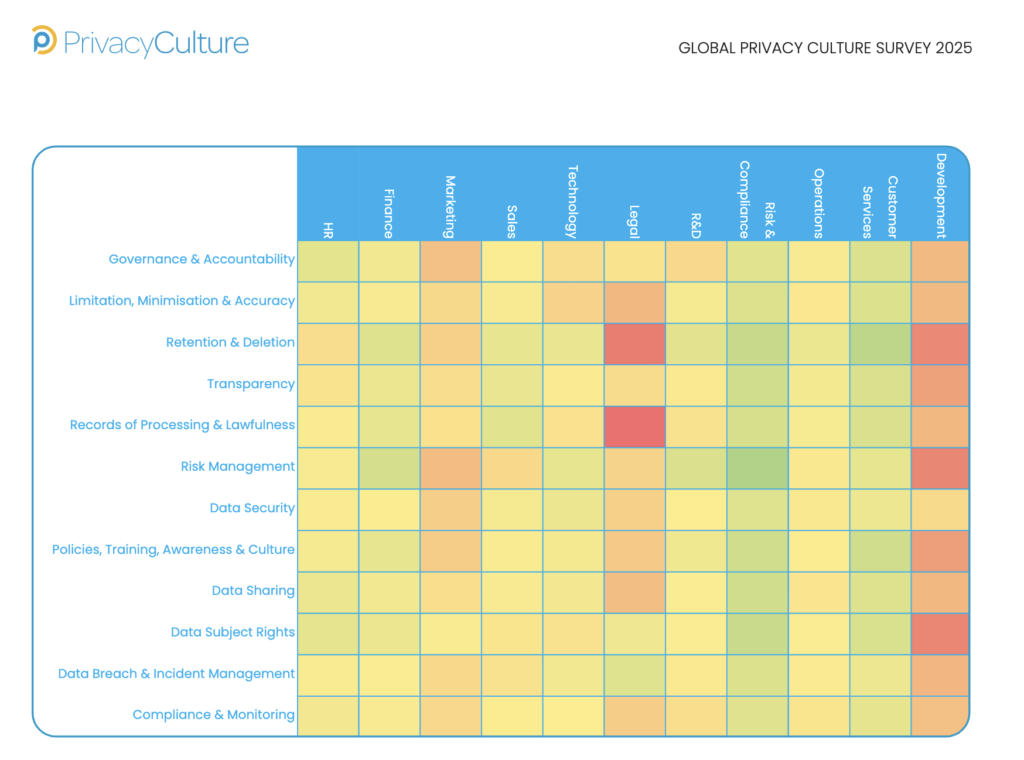

Those four dimensions — Knowledge, Behaviors, Attitudes, and Perceived Control — are what the researchers call Attributes. They form one of three analytical lenses applied to the same dataset of 50 questions across 12 privacy domains. The other lenses are the Domain lens, which shows which specific areas of privacy practice are stronger or weaker, and the Persona lens, which segments the workforce into behavioral groups to help organizations understand not just what the average score is, but who is driving it and where the real risk concentrates.

The 12 domains cover the full lifecycle of how organizations handle personal data: Governance and Accountability, Limitation and Minimization, Retention and Deletion, Transparency, Records of Processing and Lawfulness, Risk Management, Data Security, Policies and Training, Data Sharing, Data Subject Rights, Data Breach and Incident Management, and Compliance and Monitoring.

Five years of consistent methodology means the survey can do something most compliance assessments cannot: show direction of travel. Not just where you are, but whether you’re getting better or worse, and at what rate.

In 2025, the direction of travel on the things that matter most is not encouraging.

The Core Tension: Employees Care More Than Ever. They Feel Less Able to Act Than Ever.

The most striking finding in the 2025 attribute breakdown is not any single number. It is the combination of numbers and what they reveal about the internal experience of privacy compliance for the average employee.

Attitudes toward privacy rose by 0.17. That is the largest positive movement in the attribute data. Employees increasingly believe privacy matters, understand that their organizations expect them to handle data responsibly, and recognize that failures carry real consequences. Five years of regulatory enforcement, high-profile data breaches, and growing public awareness of privacy rights have landed. Employees are not indifferent.

But at the same time, Knowledge fell by 0.07. Perceived Control — the sense of being equipped and empowered to actually act on privacy obligations — fell by 0.14. And Behaviors, the ultimate measure of whether good intentions translate into concrete action, barely moved at all, ticking up just 0.03.

The researchers describe this as the “I care, but I can’t cope” dynamic. It is worth sitting with that phrase for a moment, because it describes something specific and actionable. These are not employees who don’t care about privacy. They are employees who feel that the complexity of what they’re being asked to navigate has outpaced the tools, guidance, and support they’ve been given to navigate it.

Several forces are driving this. The proliferation of SaaS tools means employees encounter data handling decisions that last year’s training never addressed. The rapid expansion of generative AI — and what the report calls “shadow AI,” meaning employees using external AI tools to process work data without any formal privacy review — introduces new scenarios faster than governance frameworks can assess them. Cyber threats have intensified, and with them, anxiety-laden messaging that a single wrong click can trigger a breach, which heightens stress without providing clearer behavioral guidance.

There is also what the researchers identify as a possible learning paradox. As privacy programs mature and training becomes more sophisticated, employees may actually become more aware of the complexity and nuance involved, leading them to assess their own capability more conservatively. Early-stage privacy awareness often produces surface-level confidence: “I’ve done the training, I know what the rules are.” Mature privacy awareness produces humility: “I understand there are edge cases here that I’m not sure I’d handle correctly.” A declining Knowledge score, in some cases, may not mean employees know less. It may mean they now know enough to understand how much they don’t know.

The care-but-can’t-cope pattern has an important practical implication. Training (more content, more modules, more completion metrics) addresses the Knowledge attribute. But Perceived Control and Behaviors require something different. They require enablement: clearer processes, better tools, simplified escalation pathways, and visible leadership that reinforces privacy as a priority rather than a bureaucratic burden. Training tells employees what to do. Enablement gives them the means to actually do it.

If your organization’s response to privacy compliance gaps has been to add more training, the 2025 data suggests you may be solving the wrong problem.

The Domain Picture: Strong Where It’s Easy to Measure, Weak Where It Actually Matters

When the survey breaks results down by domain — the specific areas of privacy practice — a pattern emerges that is both understandable and concerning. Organizations are getting better at the things that generate headlines when they go wrong and worse at the things that quietly accumulate risk until a regulator or a plaintiff’s attorney makes it visible.

The three domains with the strongest positive movement in 2025 are Data Breach and Incident Management, up 0.18; Data Security, up 0.15; and Compliance and Monitoring, up 0.13. Employees feel more confident recognizing incidents, knowing how to report them, and understanding what non-compliance looks like. This is real progress, and it reflects genuine organizational investment driven by board-level attention to cyber risk, high-profile breach enforcement actions, and years of awareness training that has finally embedded.

The three domains with the sharpest declines tell the opposite story. Records of Processing and Lawfulness fell 0.31 — the single largest movement in either direction across the entire dataset. Data Subject Rights fell 0.21. Retention and Deletion fell 0.16.

These are not obscure technical domains. They are foundational. Records of Processing — maintaining an accurate, current register of what personal data an organization collects, from whom, for what purpose, where it goes, and how long it’s kept — is the backbone of demonstrable accountability. Without accurate records, an organization cannot respond accurately to regulatory inquiries, assess its own risk exposure, or even answer the basic question of whether a specific individual’s data exists in its systems. Data Subject Rights — the ability of individuals to request access to their data, request deletion, or object to specific processing — carries legal deadlines and regulatory enforcement risk when handled poorly. Retention and Deletion — the discipline of not keeping personal data longer than you need it — directly amplifies the impact of any breach that does occur, because data you shouldn’t have had becomes data that was exposed.

None of these domains generates headlines when done well, and failures in them stay quiet for a long time. Incomplete records of processing are invisible until a regulator asks to see them. Retention policies that aren’t enforced accumulate risk slowly, over years, without raising any immediate alarm. And missed deadlines on data subject access requests (DSARs) produce regulatory complaints, escalations, and fines that arrive months after the request was originally received and lost.

The hypothesis the researchers offer for this divergence is direct: organizations invest where pain is visible. Breaches are visible. Compliance failures that generate fines are visible. The grinding work of maintaining accurate records, enforcing retention schedules, and training employees to recognize informal data subject requests does not generate headlines when done right. So it gets under-resourced, and the gap quietly widens.

The Finding That Should Stop You Cold: Employees Can No Longer Identify a Data Subject Request

Within the Data Subject Rights domain decline, one finding stands apart. Employee confidence in their ability to identify a Data Subject Access Request — to recognize, in the course of their actual work, that a communication from a customer, employee, or member of the public constitutes a formal legal request triggering regulatory timelines — fell by 0.44. That is the single largest question-level decline in the entire 2025 dataset, across all 50 questions and all 12 domains.

This matters enormously for practical reasons. A DSAR that isn’t recognized isn’t escalated. A DSAR that isn’t escalated isn’t handled. A DSAR that isn’t handled within the legally required timeframe (one month under GDPR, extendable by up to two further months for complex or numerous requests, provided the requester is told why within that first month) is a regulatory violation. Multiply that across the volume of informal, casually phrased, and channel-diverse requests that a typical organization receives, and you have a systemic compliance gap that no policy document resolves.
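To make the clock concrete, here is a minimal Python sketch of the GDPR timing. It assumes the common calendar-month convention (count from the day of receipt to the corresponding date one month later, falling back to the last day of that month when no corresponding date exists), assumes the extended limit runs to three months after receipt, and ignores weekends and public holidays. `add_months` and `dsar_deadlines` are hypothetical helpers for illustration, not part of any compliance product.

```python
import calendar
from datetime import date


def add_months(start: date, months: int) -> date:
    """Same calendar day `months` later, clamped to the last day of a shorter month."""
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def dsar_deadlines(received: date) -> dict[str, date]:
    """Standard GDPR response deadline plus the latest possible extended deadline."""
    standard = add_months(received, 1)
    return {
        "respond_by": standard,
        # Any extension, with reasons, must be communicated within the first month.
        "notify_extension_by": standard,
        # Assumption: the two-month extension runs to three months after receipt.
        "extended_respond_by": add_months(received, 3),
    }


print(dsar_deadlines(date(2025, 1, 31)))
```

A request received on 31 January 2025, for example, is due by 28 February 2025 under this convention, and the clock starts on receipt, whether or not anyone recognized the message as a request.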

The problem is straightforward to understand even if it’s difficult to fix. Early data subject requests, when GDPR first came into force, were often formal and formulaic. People copied template language directly from regulator guidance. “I wish to exercise my right of access under Article 15 of the GDPR.” An employee trained to recognize that sentence knew what they were looking at.

That’s not how requests arrive anymore. People say “Can you tell me what information you have about me?” Or they send an email that starts as a complaint and ends with a question about their records. Or they call customer service and ask verbally, mid-conversation, what data the company holds. Or they send a message on social media. Or they’re an employee in the middle of a grievance process who asks, almost as an aside, for copies of all communications that reference them.

Each of these is a DSAR. Each of them triggers the same legal clock. And according to the 2025 survey, employees across industries are becoming less confident in their ability to recognize any of them.

The decline compounds. Even when employees do recognize a potential DSAR, their confidence that they would report it immediately fell by 0.27. The researchers suggest this reflects unclear escalation pathways, anxiety about raising false alarms, or prior experiences of escalating that were frustrating enough to create hesitation. The combined effect is a double failure point: employees who can’t spot a request in the first place, and employees who spot something that might be a request but aren’t sure enough, or don’t know the process well enough, to act on it.

The practical fix isn’t more policy. It’s recognition training using real examples — actual requests phrased the way actual people phrase them, across the channels they actually use — combined with escalation pathways that are so simple and frictionless that the barrier to acting on uncertainty is essentially zero. A clearly labeled email address. A one-click form. A dedicated Teams or Slack channel. The easier it is to escalate, the more requests get escalated. The more requests get escalated, the fewer regulatory deadlines get missed.

Records of Processing: The Largest Single Decline in the Dataset, and Why It Should Worry Every Executive

The Records of Processing and Lawfulness domain — the confidence that all activities collecting and using personal data are recorded and maintained centrally — declined by 0.31 in 2025. That number is significant not just because of its magnitude, which is the largest single domain movement in either direction in this year’s data, but because of what it measures.

The underlying question is deceptively simple: do you believe that your organization knows what personal data it collects, from whom, for what purpose, and where it goes?

Confidence in the answer to that question has dropped sharply. And the researchers offer several credible explanations that should sound familiar to anyone running a business in 2025.

Modern data architecture is not the static, linear thing it was when GDPR came into force. Data does not flow from System A to Database B to Vendor C in a sequence you can document once and update quarterly. It flows through dozens of cloud services, enriched by third-party APIs, processed by analytics platforms, distributed across vendors on multiple continents, and now increasingly routed through AI tools that individual employees adopt without formal review. A spreadsheet-based record of processing activities — which is how many organizations still maintain their register — does not describe this environment. It describes the environment the organization thought it had, which stopped being accurate the moment the first unauthorized SaaS subscription was created.

Shadow AI has made this dramatically worse. When employees use generative AI tools to process work data — drafting emails, summarizing documents, analyzing customer information — without routing that activity through formal privacy review, new processing activities are created that exist nowhere in the organization’s records. The DPO doesn’t know about them. The RoPA doesn’t reflect them. And when a regulator asks for documentation of all processing activities, or when a data subject requests access to all data held about them and the organization needs to know where to look, those gaps become regulatory exposure.

The decline in RoPA confidence is not just an administrative problem. It is a governance risk that compounds over time. Incomplete records undermine an organization’s ability to respond accurately to data subject requests, to assess its own risk exposure, to conduct meaningful data mapping exercises, and to demonstrate accountability to regulators. Every new tool adopted without privacy review, every new processing activity that doesn’t make it into the register, adds another gap between the documented version of the organization and the actual one.
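A register only helps if it can be checked against what is actually in use. The Python sketch below models a simplified RoPA entry, loosely based on a few of the fields GDPR Article 30 lists (purposes, categories of personal data, recipients, retention), and flags every discovered system that no entry mentions. It assumes the discovered list comes from some other source, such as single sign-on logs, expense reports, or a SaaS discovery tool; `ProcessingActivity`, `undocumented_systems`, and all system names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProcessingActivity:
    """One simplified RoPA entry (a small subset of GDPR Article 30 fields)."""
    name: str
    purpose: str
    data_categories: tuple[str, ...]
    recipients: tuple[str, ...]  # systems or vendors that receive the data
    retention: str


def undocumented_systems(register: list[ProcessingActivity],
                         discovered: set[str]) -> list[str]:
    """Return discovered systems that no register entry lists as a recipient."""
    documented = {s.lower() for activity in register for s in activity.recipients}
    return sorted(s for s in discovered if s.lower() not in documented)


register = [
    ProcessingActivity(
        name="Customer support",
        purpose="Resolve customer queries",
        data_categories=("contact details", "order history"),
        recipients=("HelpdeskApp", "CRM"),
        retention="24 months after ticket closure",
    ),
]
discovered = {"crm", "HelpdeskApp", "FreeAnalyticsTag", "ChatAssistantX"}
print(undocumented_systems(register, discovered))
```

Here the free analytics tag and the AI assistant surface as gaps. The comparison is trivial; the hard part, as the survey suggests, is keeping both lists honest as new tools arrive.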

For executive teams, this is worth translating into concrete terms. If your organization received a regulatory request tomorrow to produce a complete and accurate record of all personal data processing activities, including every third-party system that has access to customer or employee data, could you do it? If the honest answer involves meaningful uncertainty, the RoPA decline in the 2025 survey describes exactly where you are.

Five Years of Data, Three Functional Patterns That Keep Showing Up

The five-year dataset enables the survey to do something a single-year snapshot cannot: identify patterns that persist across time, geographies, and industries. Three functional patterns have emerged consistently enough to warrant direct attention.

Legal Functions Struggle With Records of Processing

This is the finding that surprises people the most. Across the five-year dataset, Legal teams rank near the bottom of the overall awareness distribution, second-lowest and ahead of only Development, and show particularly low confidence in Records of Processing and Lawfulness and in Retention and Deletion. The function that deals with privacy law most directly is the least confident that its organization’s processing records are accurate and complete.

The researchers offer a nuanced interpretation. Legal professionals may be rating themselves low not because they lack understanding of what a RoPA requires, but because they have a clearer view than anyone of what the standard actually demands and how far the organization’s current documentation falls from it. They see the edge cases. They know what regulators ask for. They understand what “complete and accurate” means under real scrutiny. And from that vantage point, what exists in many organizations looks substantially less adequate than it does to functions that have never had to defend it.

The practical implication is that a low Legal score on RoPA confidence may be the most reliable signal available that the organization’s documentation genuinely needs investment. If the people who understand the regulatory standard most deeply are least confident in the current state of compliance, that assessment is worth taking seriously.

Development Is the Weakest Function in the Organization

Development — software engineers, product teams, technical builders — is the lowest-performing function overall in the five-year dataset, with particularly severe weakness in two domains: Retention and Deletion, down 0.54, and Risk Management, also down 0.54. That dual weakness tells a story many DPOs will recognize immediately.

Development teams operate under intense pressure to ship. Features need to be delivered on time. Roadmaps are aggressive. Competitive threats are real. In that environment, privacy feels like friction: something that slows deployment, requires meetings with legal, introduces uncertainty into timelines, and doesn’t directly contribute to what the team is measured on. When privacy isn’t built into development workflows from the start, it gets bolted on at the end, and often it doesn’t get bolted on at all.

The Retention and Deletion weakness has consequences that compound with time. If deletion mechanisms are not built into systems when those systems are designed, implementing them retroactively becomes far harder. Data accumulates across databases, logs, backups, and analytics pipelines, and the question “how do we delete this person’s data?” can take months of engineering investigation to answer accurately, if it can be answered at all. Every year of data accumulation without a deletion architecture is another year of compounding exposure.
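A deletion architecture starts with something as plain as a retention schedule that code can evaluate. The Python sketch below assumes each stored record carries a category and a collection date; the category names and retention periods are hypothetical. Records whose category has no schedule entry are reported separately, because they often point to a processing activity nobody documented.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class StoredRecord:
    record_id: str
    category: str
    collected_on: date


# Hypothetical retention schedule: category -> maximum retention period.
RETENTION_SCHEDULE = {
    "marketing_lead": timedelta(days=365),
    "support_ticket": timedelta(days=730),
}


def retention_sweep(records, schedule, today):
    """Split record IDs into (overdue for deletion, no retention rule defined)."""
    overdue, unscheduled = [], []
    for record in records:
        limit = schedule.get(record.category)
        if limit is None:
            unscheduled.append(record.record_id)
        elif today - record.collected_on > limit:
            overdue.append(record.record_id)
    return overdue, unscheduled


records = [
    StoredRecord("r1", "marketing_lead", date(2024, 1, 1)),
    StoredRecord("r2", "support_ticket", date(2024, 6, 1)),
    StoredRecord("r3", "chat_export", date(2025, 5, 1)),
]
print(retention_sweep(records, RETENTION_SCHEDULE, today=date(2025, 6, 1)))
```

A sweep like this only covers the primary store it is pointed at; reaching logs, backups, and analytics copies is where retrofitting deletion gets expensive.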

The Risk Management weakness — low confidence in DPIA completion and updating — suggests that privacy impact assessments, when they happen at all, occur late in the development process when design decisions are already locked in. A DPIA that happens the week before launch is not a risk assessment. It is a documentation exercise. The risk has already been built in.

Privacy by design, which the GDPR requires and which most organizations claim to practice, demands that privacy teams have visibility into development roadmaps early enough that architectural decisions can actually be influenced, and that privacy assessment tools feel like part of the development workflow rather than an external imposition from a function that doesn’t understand engineering. The 2025 data suggests that, across most organizations, that integration has not happened.

Customer Services Is Where Training Actually Works

Not every functional pattern is concerning. Customer Services scores above the functional average on Data Subject Rights — the domain that declined most sharply at the overall level — demonstrating that the broader decline is not inevitable and that targeted, role-specific investment produces measurably different outcomes.

The reason is visible in how Customer Services teams actually work. They receive data subject requests regularly, as part of their normal daily workflow. For them, DSARs are not an abstract compliance obligation; they are something that arrives in the inbox on a Tuesday afternoon and has to be dealt with. That operational frequency creates both motivation and practice. Employees who handle something repeatedly develop confidence in handling it, and employees trained on the specific version of a challenge they actually face in their role retain that training in a way that generic organization-wide modules do not.

The researchers frame this as evidence of a broader principle: privacy capability concentrates where operational reality aligns with training design. The most effective privacy training is not the most comprehensive. It is the most relevant — built around the actual scenarios an employee encounters in their actual role, with concrete guidance on exactly what to do in that specific situation.

Customer Services has this. Most other functions don’t. The 2025 data argues strongly for the investment required to build it.

Who Your Employees Actually Are: The Persona Framework

One of the most practically useful elements of the 2025 survey is the persona framework — a rules-based segmentation model that divides the workforce into behavioral groups based on patterns across all 50 questions. The argument for this approach is direct: an organization-wide average score can look the same for two organizations with fundamentally different risk profiles. What matters is not just the average, but the distribution.

Compliance Champions are employees who score consistently high across all domains. They understand privacy principles, feel confident applying them in their actual work, believe privacy matters, and demonstrate that through their behavior. They pause before sharing data externally. They escalate uncertainty rather than guessing. They push back when they see practices that look risky. On average across the survey population, 14% of employees fall into this category — but the range across individual organizations runs from 4% to 23%.

That variance is the point. An organization where 23% of employees are Compliance Champions has a fundamentally different privacy culture than an organization where 4% are. The 23% organization has a distributed base of capability — people across functions who can act as informal resources, reinforce training, and serve as a federated network of privacy awareness. The 4% organization has essentially isolated its privacy expertise in the DPO’s office, and every employee outside that office is operating largely without the benefit of peer reinforcement or accessible expertise.

Privacy Beginners score consistently lower but, critically, are aware of their own gaps. They recognize they don’t fully understand privacy obligations and would benefit from support. They are not overconfident, which makes them a more addressable population than employees who don’t know what they don’t know. On average, 15% of employees are Beginners, ranging from 6% to 26% across organizations. A 26% Beginner population is not necessarily alarming: it may indicate healthy self-awareness combined with rapid growth that has outpaced onboarding. But it does indicate a significant population that needs structured support, rather than an assumption that the training they’ve completed has landed.

Security Risks are the segment that demands the most immediate attention. These are employees who score low enough on security-related questions to represent material exposure — employees who may not understand basic data protection practices, who don’t recognize common threats, or who exhibit behaviors that create real risk. On average, 2% of employees qualify. But in some organizations, that figure reaches 9%. Nearly one in ten employees representing active security risk is not a statistical footnote. It is a priority intervention.

The power of the persona framework is its precision. An organization that knows it has a 9% Security Risk population concentrated in its Development and Customer Services functions can do something targeted and effective about it. An organization that knows only that its overall culture score declined by 0.1 does not know where to look.
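The survey describes its persona model as rules-based, but the rules themselves are not reproduced here. The Python sketch below therefore uses invented thresholds on an assumed 1-to-5 score scale purely to show the mechanics: classify each respondent, then report persona shares per function instead of a single organization-wide average. `Respondent`, `persona`, the domain keys, and every threshold are hypothetical.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass
class Respondent:
    function: str
    domain_scores: dict[str, float]  # assumed 1-5 scale per privacy domain
    security_score: float
    aware_of_gaps: bool


def persona(r: Respondent) -> str:
    """Assign one persona using hypothetical, illustrative thresholds."""
    if r.security_score < 2.0:
        return "Security Risk"
    if min(r.domain_scores.values()) >= 4.0:
        return "Compliance Champion"
    mean = sum(r.domain_scores.values()) / len(r.domain_scores)
    if mean < 3.0 and r.aware_of_gaps:
        return "Privacy Beginner"
    return "Other"


def persona_share_by_function(respondents: list[Respondent]) -> dict[str, dict[str, float]]:
    """Fraction of each function's respondents that falls into each persona."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for r in respondents:
        counts[r.function][persona(r)] += 1
    return {
        function: {p: n / sum(c.values()) for p, n in c.items()}
        for function, c in counts.items()
    }


people = [
    Respondent("Development", {"Retention": 2.5, "Risk": 2.8}, 1.5, False),
    Respondent("Development", {"Retention": 4.5, "Risk": 4.2}, 4.6, False),
    Respondent("Customer Services", {"Retention": 2.4, "Risk": 2.6}, 3.0, True),
    Respondent("Customer Services", {"Retention": 3.4, "Risk": 3.6}, 3.5, False),
]
print(persona_share_by_function(people))
```

Breaking shares out by function is what turns "the score fell" into "this team needs this intervention"; the actual rule set would come from the survey methodology, not from these placeholder cut-offs.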

The Shadow AI Problem: How New Technology Is Undermining Foundational Compliance

Running through the 2025 findings like a thread is the issue of shadow AI — employees using generative AI tools to process work data without those tools having been reviewed, approved, or documented by the privacy team. The survey does not treat this as a future concern. It treats it as a current driver of several of the declines already documented.

The connection to Records of Processing is direct and significant. When an employee copies customer data into an external AI tool to analyze sentiment, or pastes employee records into a generative model to draft a performance review, that activity creates a processing activity that exists nowhere in the organization’s RoPA. It involves a processor — the AI platform — that has not been subject to vendor due diligence. It may involve cross-border data transfers. It may involve retention by the AI platform that is inconsistent with the organization’s own retention policies. And it happened because the tool was useful, it was free or cheap, and nobody asked the right questions before using it.

The sharp decline in RoPA confidence, the largest single movement in the 2025 dataset, may be at least in part the aggregate signal of thousands of employees who have started to realize that the tools their organization officially documents are not the same as the tools their organization actually uses. That gap between the documented and the actual is exactly where shadow AI lives.

The Risk Management decline in Development tells a parallel story. Development teams are often the first to adopt new AI tools — AI coding assistants, AI-powered testing frameworks, AI-assisted data analysis. If those tools are not subject to privacy impact assessment before adoption, and the 2025 data suggests they frequently are not, then the organization has no documented analysis of whether the processing they perform is lawful, proportionate, and consistent with the purposes for which the underlying data was collected.

Privacy by design, in the context of AI adoption, means asking the privacy questions before the tool is deployed, not after employees have been using it for six months. The 2025 survey suggests that process is not working in most organizations.

What This Means for Your Business Right Now

The Global Privacy Culture Survey is published by a UK-based research firm and its findings draw heavily on GDPR-framework organizations. But the dynamics it describes — the gap between documented compliance and lived compliance, the decline in foundational governance practices, the inability of employees to recognize legal obligations when they arrive informally, the compounding risk of undocumented data processing — are not jurisdiction-specific.

If your business operates under the CCPA or CPRA in California, the same employee who doesn’t recognize a DSAR in a GDPR context won’t recognize a consumer rights request under California law, which carries its own deadlines and enforcement mechanisms. If your business is subject to HIPAA, the shadow AI problem describes exactly the scenario in which an employee processes patient-related information through an external tool that your business associate agreement (BAA) program doesn’t cover. If your business collects customer behavioral data for analytics or personalization (and nearly every business with a website does), the RoPA decline describes your exposure to the growing wave of wiretapping litigation under the California Invasion of Privacy Act (CIPA) and to CCPA class actions, where the gap between documented and actual data collection is often the crux of the legal theory.

The five specific recommendations the 2025 survey offers are worth internalizing directly.

Close the enablement gap, not just the knowledge gap. More training modules are not the answer if the problem is that employees feel unable to act on what they know. Simplify escalation. Provide better tools. Make it visibly easy to do the right thing.

Treat Records of Processing as a governance priority, not an administrative task. A spreadsheet-based RoPA that hasn’t been updated since your last vendor review is not a functioning compliance asset. Automated discovery tools, integrated RoPA workflows, and business-unit-level accountability for documentation are the standard that modern data architecture requires.

Train employees to recognize requests, not just to understand rights. The distinction matters enormously in practice. Employees who know individuals have the right to access their data will not necessarily recognize the informal email that constitutes such a request. Case-based training with real examples across real channels is what builds recognition capability.

Embed privacy into Development workflows before systems are built. The DPIA that happens after launch is documentation, not risk management. Privacy teams need visibility into development roadmaps early enough that architecture decisions can still be influenced.

Use persona data to target interventions precisely. A 9% Security Risk population concentrated in two departments is a fundamentally different problem than a 2% population distributed evenly. Precision is not a luxury in privacy culture investment — it is the difference between programs that produce measurable change and programs that produce completion certificates.

The Honest Question Every Executive Should Ask

The Global Privacy Culture Survey 2025 is, at its core, an evidence-based argument for treating privacy compliance as an operational discipline rather than a documentation exercise. Five years of data across thousands of employees in multiple countries and industries has produced a consistent finding: the organizations that have invested in making privacy easy to practice — not just in making compliance easy to document — are the ones where capability is actually embedded.

The rest are running a risk that grows quieter and larger every time an employee adopts a new tool without privacy review, every time a request goes unrecognized because it was phrased informally, and every time a processing activity happens that no one has recorded.

The question for any business owner or executive reading this is not whether your organization has a privacy policy. It is whether your employees, in the actual moments that determine privacy outcomes, feel equipped to do the right thing and know exactly what to do next.

According to the most comprehensive study of its kind in the world, for most organizations in 2025, the honest answer is no.

Your Privacy Program May Be More Exposed Than Your Last Audit Suggested

The “I care, but I can’t cope” dynamic the 2025 Global Privacy Culture Survey describes is not an abstract culture problem. It is the gap between what your compliance documentation says and what your employees actually do when an informal request arrives, when a developer pushes a feature without a privacy review, or when someone on your team starts using an AI tool that nobody cleared.

That gap is where CCPA class actions are filed. It is where CIPA wiretapping claims originate. It is where regulators find the violations that generate penalties. And it is almost always invisible until it isn’t.

We work with businesses to make that gap visible — and close it — before someone else does it for you. Whether you need a privacy audit, help building a realistic compliance program, or a clear picture of where your current exposure sits, the conversation starts free.