| IN-DEPTH ANALYTICAL REPORT

U.S. STATE ARTIFICIAL INTELLIGENCE GOVERNANCE LAWS

California • Colorado • Utah • Texas

Obligations, Compliance Strategy, Cross-State Conflicts & Enforcement

February 2026 | For Legal & Compliance Professionals |

| EXECUTIVE SUMMARY

Overview of the U.S. State AI Regulatory Landscape |

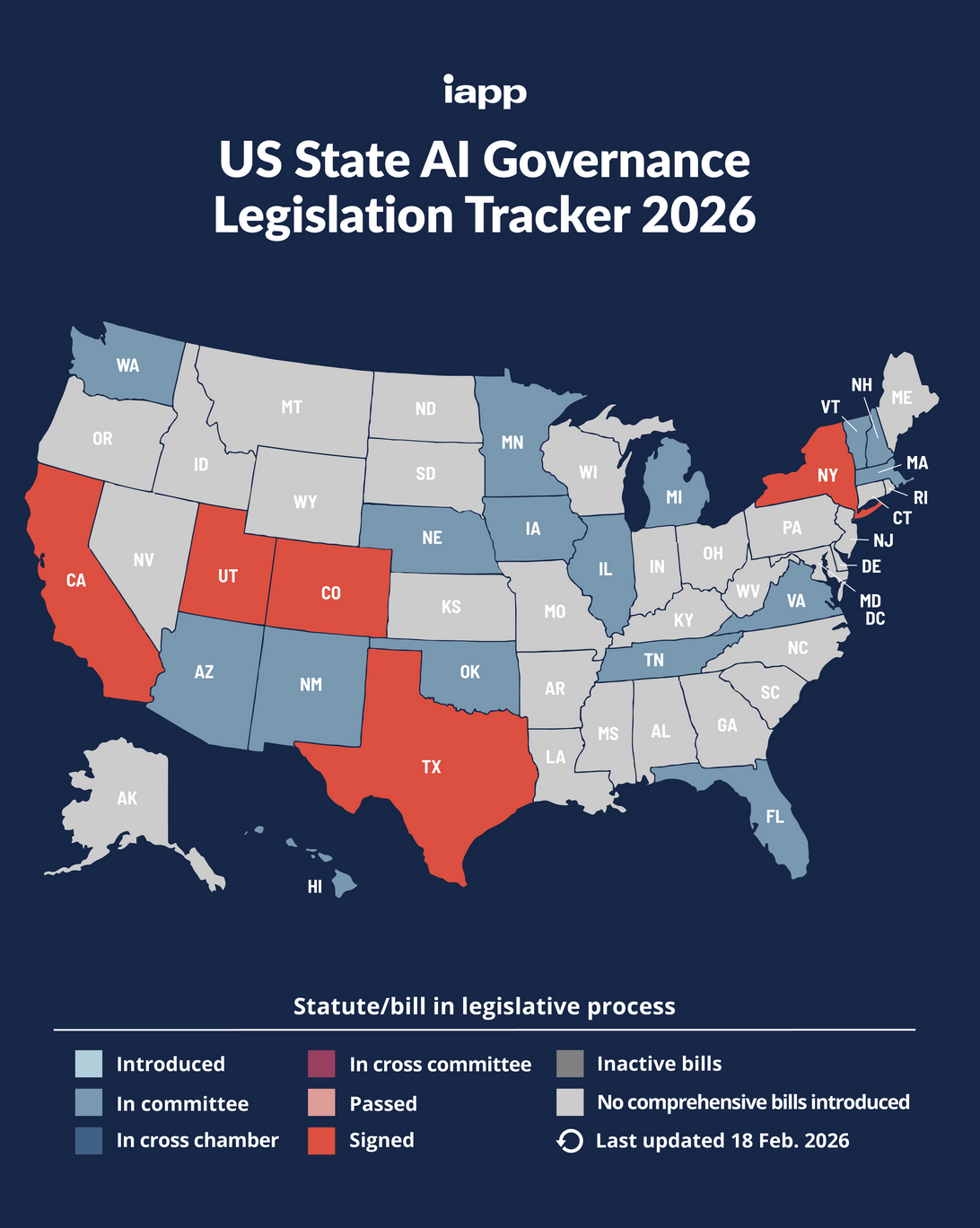

The United States is witnessing an unprecedented wave of state-level artificial intelligence legislation. In the absence of comprehensive federal AI governance, individual states have moved swiftly to establish legal frameworks governing the development, deployment, and use of AI systems. This report provides a detailed analytical examination of seven AI governance laws currently in effect or imminently effective across four states: California, Colorado, Utah, and Texas.

Collectively, these laws represent a diverse range of regulatory philosophies: California’s layered transparency and frontier-model safety approach, Colorado’s comprehensive risk-based framework modeled in part on the EU AI Act, Utah’s streamlined disclosure regime, and Texas’s intent-based regime of prohibited practices. Together, they form a rapidly expanding and operationally demanding compliance patchwork for any organization that develops or deploys AI systems in the United States.

Key findings of this report include:

- Seven discrete AI laws across four states impose immediate or near-term compliance obligations on private-sector organizations, with effective dates spanning May 2024 through August 2026.

- No private right of action exists under any of the examined laws; enforcement rests with state Attorneys General, designated regulatory agencies and, under California’s SB 942, local authorities. Penalty exposure nonetheless reaches $1,000,000 per violation under California’s SB 53.

- Colorado’s AI Act imposes the most structurally rigorous requirements, demanding impact assessments, risk management programs, and consumer appeal mechanisms. Its effective date has been delayed to June 30, 2026, and further amendments are anticipated.

- Significant definitional inconsistencies across statutes, particularly around “high-risk” AI, “deployer,” and “consequential decision,” create material compliance ambiguity for multi-state operators.

- Texas takes a markedly different approach, focusing on prohibited AI intent rather than risk-based process obligations, preempting local regulations, and creating a 36-month regulatory sandbox for testing.

- Safe harbor provisions and NIST AI RMF alignment offer meaningful affirmative defenses in Colorado and Texas; organizations should prioritize framework adoption as a baseline risk-mitigation strategy.

| COMPLIANCE TIMELINE

Key Effective Dates Across All Laws |

The table below summarizes critical effective dates. Note that SB 942 (California) and the Colorado AI Act have both experienced legislative delays from their original dates; practitioners should track further amendments given ongoing rulemaking activity in both states.

| Effective Date | Event | State |

| May 1, 2024 | Utah AI Policy Act (SB 149) takes effect | Utah |

| May 7, 2025 | Utah SB 226 & SB 332 amendments take effect | Utah |

| Jan 1, 2026 | California AB 2013 (Training Data Transparency) takes effect | California |

| Jan 1, 2026 | California SB 53 (Frontier AI / TFAIA) takes effect | California |

| Jan 1, 2026 | Texas TRAIGA takes effect | Texas |

| Jun 30, 2026 | Colorado AI Act (SB 205) delayed effective date | Colorado |

| Aug 2, 2026 | California SB 942 (AI Transparency Act) delayed effective date | California |
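Compliance teams that track these dates in tooling may find it useful to hold the timeline as data. The following is a minimal Python sketch under that assumption: `EFFECTIVE_DATES` and `upcoming` are illustrative names, and the dates are simply those listed in the table, so the list would need updating if either state amends its schedule again.

```python
from datetime import date

# Effective dates copied from the timeline table; names are illustrative only.
EFFECTIVE_DATES = [
    (date(2024, 5, 1), "Utah AI Policy Act (SB 149)", "Utah"),
    (date(2025, 5, 7), "Utah SB 226 & SB 332 amendments", "Utah"),
    (date(2026, 1, 1), "California AB 2013", "California"),
    (date(2026, 1, 1), "California SB 53 (TFAIA)", "California"),
    (date(2026, 1, 1), "Texas TRAIGA", "Texas"),
    (date(2026, 6, 30), "Colorado AI Act (SB 205)", "Colorado"),
    (date(2026, 8, 2), "California SB 942", "California"),
]


def upcoming(as_of):
    """Return (days_remaining, law, state) for laws not yet in effect on as_of."""
    pending = [
        ((effective - as_of).days, law, state)
        for effective, law, state in EFFECTIVE_DATES
        if effective > as_of
    ]
    return sorted(pending)  # soonest deadline first


for days, law, state in upcoming(date(2026, 2, 1)):
    print(f"{law} ({state}): {days} days")
```

Run against a reference date of February 1, 2026, the sketch lists only the Colorado AI Act and California SB 942 as still pending.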

| CRITICAL NOTE — Colorado Delay

Colorado’s AI Act was originally effective February 1, 2026. Following a special legislative session in August 2025, Governor Polis signed SB 25B-004 delaying implementation to June 30, 2026. Additional amendments are anticipated during the 2026 regular legislative session. Compliance teams should monitor developments closely, as core definitional and scope provisions remain in flux. |

| CALIFORNIA

AB 2013 • SB 942 • SB 53 |

California has established itself as the most prolific state AI regulator in the nation, enacting three substantive private-sector AI governance laws effective in 2026, each targeting a different dimension of AI development and use. Read together, California’s AI laws form a layered compliance system covering training data transparency (AB 2013), AI-generated content provenance (SB 942), and frontier model safety governance (SB 53).

1.1 Assembly Bill 2013 — AI Training Data Transparency

| Official Title | AI Training Data Transparency Act |

| Bill Number | AB 2013 (2024 Legislative Session) |

| Effective Date | January 1, 2026 |

| Regulates | Developers of publicly available generative AI systems |

| Scope | All generative AI systems available to California residents, released or substantially modified on or after January 1, 2022 |

| Enforcer | California Attorney General |

| Penalty | No explicit civil penalty; enforcement under CA consumer protection framework |

Core Obligations

AB 2013 establishes a broad public disclosure mandate centered on training data. From January 1, 2026, any developer of a generative AI system that is made available to California residents must publicly post on its website a “high-level summary” of the datasets used to train the system. The statute does not define “high-level,” leaving significant interpretive latitude — a source of both flexibility and legal uncertainty.

Who Is Covered

A “developer” under AB 2013 includes any person that designs, codes, produces, or substantially modifies a generative AI system for public use. Notably, there is no minimum size or revenue threshold, meaning the law applies equally to startups and global enterprises. The law’s backward-looking scope captures all qualifying systems released or substantially modified on or after January 1, 2022.

“Substantially modifies” is defined as an update that materially changes an AI system’s functionality or performance, including through retraining or fine-tuning. Organizations that regularly update or fine-tune models must therefore maintain ongoing documentation and disclosure practices, not merely a one-time disclosure at launch.

Required Disclosure Elements

The high-level training data summary must include, at minimum:

- A description of the datasets used, including their sources, general subject matter, and approximate volume

- Whether training data includes personal information as defined under the California Consumer Privacy Act (CCPA)

- Intellectual property status of training data, including use of copyrighted, trademarked, patented, or public domain materials, with licensing details where applicable

- Whether any AI-generated (synthetic) data was used as part of training or development

- Whether training data includes aggregate consumer information
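One way to keep a draft summary honest is to track each listed element as a field and flag the ones still unanswered. The Python sketch below assumes a hypothetical internal record, `TrainingDataSummary`; the field names are illustrative and are not statutory terms, and the check says nothing about whether a given answer is sufficiently detailed.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class TrainingDataSummary:
    """Hypothetical record mirroring the disclosure elements listed above."""
    dataset_description: Optional[str] = None  # sources, subject matter, approximate volume
    includes_personal_information: Optional[bool] = None  # as defined under the CCPA
    ip_status: Optional[str] = None  # copyrighted, licensed, public domain, etc.
    uses_synthetic_data: Optional[bool] = None
    includes_aggregate_consumer_information: Optional[bool] = None


def missing_elements(summary):
    """List the names of elements that have not been answered yet."""
    return [f.name for f in fields(summary) if getattr(summary, f.name) is None]


draft = TrainingDataSummary(
    dataset_description="Licensed news archives and public web text (hypothetical)",
    includes_personal_information=False,
    uses_synthetic_data=True,
)
print(missing_elements(draft))
```

Because `None` is used to mean "not yet answered", a deliberate answer of `False` still counts as complete.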

Practical Compliance Challenges

AB 2013 presents several operational difficulties. The absence of a defined “high-level” standard means organizations must exercise judgment as to the level of granularity required. Furthermore, the law contains no trade secret carve-out, which creates tension with organizations that regard training data provenance as competitively sensitive. Until the Attorney General provides interpretive guidance, a conservative approach — disclosing sufficient detail to be meaningful without exposing proprietary specifics — appears prudent.

1.2 Senate Bill 942 — California AI Transparency Act

| Official Title | California AI Transparency Act (as amended by AB 853) |

| Bill Number | SB 942 (2024); amended by AB 853 (2025) |

| Effective Date | August 2, 2026 (delayed from original January 1, 2026 by AB 853) |

| Regulates | Covered providers: developers of generative AI systems with over 1 million monthly users/visitors |

| Scope | Generative AI systems capable of producing derived synthetic image, video, or audio content |

| Penalty | $5,000 per violation; enforced by CA AG or local authorities |

| Private Action | No private right of action |

Core Obligations

SB 942 (as modified by AB 853) establishes a content provenance framework designed to help consumers identify when audiovisual content was created or altered by AI. The law focuses specifically on image, video, and audio content — not text — and requires large covered providers to implement both detection and disclosure tools.

Detection Tool Requirement

Covered providers must offer a free AI detection tool that enables users to assess whether an image, video, or audio file was created or substantially altered by the provider’s AI system. This tool must be “clear and conspicuous.”

Labeling Requirement

Covered providers must also give users the option to add a visible label to AI-generated images, videos, or audio. The label must be clear and appropriate to the content format — effectively a user-facing disclosure mechanism for AI-generated media.

Latent / Provenance Metadata

AB 853 further introduces requirements for latent labeling — embedding invisible provenance metadata into AI-generated content that allows detection tools (including third-party tools) to identify AI origin. This mechanism aligns with emerging global standards such as the C2PA (Coalition for Content Provenance and Authenticity) framework.

Expanded Scope Under AB 853

AB 853 expands SB 942’s obligations beyond AI developers to cover: (1) generative AI hosting platforms (websites or apps that make AI model source code or weights available for download by California residents); (2) large online platforms with over two million monthly users; and (3) capture device manufacturers producing recording devices sold in California. These expanded requirements take full effect starting January 1, 2027.

| COMPLIANCE NOTE — SB 942 Delay

Although the original effective date was January 1, 2026, AB 853 delayed enforcement to August 2, 2026. Organizations that were preparing for January 1 compliance retain that work but gain additional runway for technical implementation of latent labeling and detection tooling. The expanded 2027 provisions require advance planning for platform operators and hardware manufacturers. |

1.3 Senate Bill 53 — Transparency in Frontier Artificial Intelligence Act (TFAIA)

| Official Title | Transparency in Frontier Artificial Intelligence Act (TFAIA) |

| Bill Number | SB 53 (2025) |

| Effective Date | January 1, 2026 |

| Regulates | Frontier developers: entities that have trained or initiated training of a frontier AI model |

| Scope Threshold | Foundation models trained using more than 10²⁶ integer or floating-point operations (including fine-tuning) |

| Large Developer | Frontier developers with annual gross revenues (incl. affiliates) exceeding $500 million |

| Enforcer | California Attorney General |

| Penalty | Up to $1,000,000 per violation |

| Private Action | No private right of action |

Core Obligations

SB 53 represents California’s most significant AI regulation to date, establishing the nation’s first comprehensive legal framework targeting developers of frontier AI models. The law creates a tiered set of obligations, with heightened duties for “large frontier developers” (those with revenues exceeding $500 million per year).
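The two thresholds can be expressed as a simple screening function. This is a rough Python sketch using only the figures stated in this report (more than 10²⁶ training operations; annual gross revenues above $500 million). The function name `sb53_tier` and its return labels are illustrative, and the sketch ignores definitional details such as how compute or affiliate revenue is measured.

```python
FRONTIER_COMPUTE_THRESHOLD = 10**26        # training operations, per the table above
LARGE_DEVELOPER_REVENUE_USD = 500_000_000  # annual gross revenue incl. affiliates


def sb53_tier(training_operations, annual_revenue_usd):
    """Rough first-pass screening; not a substitute for statutory analysis."""
    if training_operations <= FRONTIER_COMPUTE_THRESHOLD:
        return "out of scope"
    if annual_revenue_usd > LARGE_DEVELOPER_REVENUE_USD:
        return "large frontier developer"
    return "frontier developer"


print(sb53_tier(3 * 10**26, 750_000_000))  # large frontier developer
print(sb53_tier(2 * 10**26, 100_000_000))  # frontier developer
print(sb53_tier(10**25, 900_000_000))      # out of scope
```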

Frontier AI Framework (All Frontier Developers)

Every frontier developer must publish and maintain on its website an enterprise-wide Frontier AI Framework. This document must describe, at minimum:

- The company’s approach to identifying, assessing, and mitigating catastrophic risks

- How the company incorporates national and international AI safety standards (e.g., NIST AI RMF, ISO/IEC 42001)

- Protocols for securing unreleased model weights

- Governance structures for internal use of frontier models

Transparency Reports (All Frontier Developers)

Before deploying any new frontier model or substantial modification to an existing one, developers must release detailed public transparency reports that include:

- Model capabilities and intended uses

- Risk assessment results, including results of third-party evaluations where conducted

- Mitigation measures implemented prior to deployment

- Known limitations and failure modes

Regulatory Reporting (Large Frontier Developers Only)

Large frontier developers (>$500M annual revenue) face enhanced reporting requirements:

- Submission of rolling summaries of internal catastrophic-risk assessments to the California Office of Emergency Services (OES)

- Mandatory reporting of any “critical safety incidents” to OES within prescribed timeframes (specific deadlines to be established by regulation)

Whistleblower Protections

SB 53 introduces explicit whistleblower protections for employees who report safety concerns related to frontier AI systems. Organizations must not retaliate against employees who raise concerns about catastrophic risk or report safety incidents to regulators.

| KEY ENFORCEMENT RISK — SB 53 Penalties

With penalties of up to $1,000,000 per violation and no private right of action, SB 53’s enforcement risk is concentrated in the California Attorney General’s office. Organizations subject to the law should ensure their Frontier AI Frameworks are published before deployment of any qualifying model and that transparency reports are current at all times. |

| COLORADO

Colorado Artificial Intelligence Act (SB 24-205) |

Colorado’s Artificial Intelligence Act (CAIA), formally titled “Consumer Protections for Artificial Intelligence,” was signed into law on May 17, 2024, and represents the most structurally comprehensive U.S. state AI law to date. Originally effective February 1, 2026, its implementation was delayed to June 30, 2026 following a special legislative session. The law establishes a risk-based framework closely modeled on the EU AI Act and imposes dual obligations on both developers and deployers of “high-risk” AI systems.

2.1 Scope and Definitions

| Official Title | Consumer Protections for Artificial Intelligence |

| Bill Number | SB 24-205 (2024) |

| Effective Date | June 30, 2026 (delayed from February 1, 2026 by SB 25B-004) |

| Regulates | Developers and deployers of high-risk AI systems in Colorado |

| Enforcer | Colorado Attorney General (exclusive authority) |

| Penalty | Unfair trade practice under CO Consumer Protection Act (CPA) |

| Private Action | No private right of action |

| Cure Period | 60 days upon AG notice |

High-Risk AI System Definition

The CAIA applies to any AI system that makes, or is a substantial factor in making, a “consequential decision” concerning a consumer. A consequential decision is one that has a material legal or similarly significant effect on the provision, denial, cost, or terms of:

- Education enrollment or opportunities

- Employment or employment opportunities

- Financial or lending services

- Essential government services

- Healthcare services

- Housing

- Insurance

- Legal services

Critically, the CAIA expressly excludes AI systems that merely provide informational responses (e.g., customer-service chatbots operating under acceptable-use policies that prohibit discriminatory content), as well as antivirus software, spam filters, and other purely technical tools.

Exemptions

The CAIA provides several notable carve-outs. HIPAA-covered entities are exempt for healthcare AI systems that require clinician action to implement a recommendation and are not independently high-risk. Small businesses employing fewer than 50 full-time employees that do not train their own systems are also exempt. Systems approved or certified by federal agencies (FDA, FAA, etc.) are similarly excluded where those federal standards are equivalent or stricter.

2.2 Developer Obligations

Developers bear proactive disclosure and documentation obligations aimed at enabling deployers to implement responsible governance.

- Provide deployers with documentation sufficient to complete an impact assessment, including: general statements on foreseeable uses and risks; data summaries detailing training data and limitations; purpose and output explanations; risk mitigation measures; and any model cards, dataset cards, or equivalent artifacts

- Publish, on the developer’s website or in a public use-case inventory, a summary of all high-risk AI systems offered, including how risks of algorithmic discrimination are managed

- Disclose to the Attorney General and all known deployers any known or reasonably foreseeable risks of algorithmic discrimination within 90 days of discovery or credible report

Importantly, developers are not required to disclose trade secrets, information protected by state or federal law, or information that would create a security risk to the developer.

2.3 Deployer Obligations

Deployers bear the most operationally intensive obligations under the CAIA, as they are the entities making or influencing consequential decisions affecting consumers.

Risk Management Program

Deployers must implement a documented risk management policy and program that specifies the principles, processes, and personnel used to identify, document, and mitigate known or reasonably foreseeable risks of algorithmic discrimination. This program must be implemented prior to any deployment of a high-risk AI system.

Annual Impact Assessments

Deployers must complete an impact assessment before deployment and annually thereafter. The assessment must address: the purpose, intended benefits, and deployment context of the system; reasonably foreseeable risks of algorithmic discrimination; data governance practices; human oversight mechanisms; and post-deployment performance monitoring procedures. All assessments must be retained for at least three years after the final deployment of the system.
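The annual cadence and the three-year retention period lend themselves to simple date arithmetic. The Python sketch below assumes that "annually" means one calendar year after the previous assessment and that retention runs from the final deployment date; both readings are assumptions for illustration, and the helper names are hypothetical.

```python
from datetime import date


def add_years(d, years):
    """Shift a date by whole years, mapping Feb 29 to Feb 28 when needed."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)


def next_assessment_due(last_assessment):
    """Assumed cadence: one calendar year after the previous assessment."""
    return add_years(last_assessment, 1)


def retention_end(final_deployment):
    """Earliest discard date: three years after the final deployment."""
    return add_years(final_deployment, 3)


print(next_assessment_due(date(2026, 6, 30)))  # 2027-06-30
print(retention_end(date(2028, 2, 29)))        # 2031-02-28
```

Since the retention requirement is a minimum ("at least three years"), the second function gives a floor rather than a recommended disposal date.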

Consumer Disclosures

Before a consequential decision is made, deployers must notify the consumer that a high-risk AI system is being used and provide a disclosure stating: the purpose of the AI system; a plain-language description of the system; the deployer’s contact information; and instructions for exercising consumer rights.

Adverse Decision Disclosure

When a high-risk AI system results in an adverse decision, deployers must additionally disclose: the principal reason(s) for the adverse decision; and information about the consumer’s right to appeal or request human review.

Human Review and Appeal Rights

Deployers must provide consumers affected by adverse consequential decisions a meaningful opportunity to appeal, including access to human review where technically feasible. This represents one of the most operationally challenging provisions of the CAIA for organizations relying on automated pipelines.

Public Statements

Both developers and deployers must publish on their websites a public statement summarizing the types of high-risk AI systems they develop or deploy, and how they manage algorithmic discrimination risks.

2.4 Enforcement and Affirmative Defenses

The Attorney General holds exclusive enforcement authority, with no private cause of action. Violations constitute unfair trade practices under the Colorado Consumer Protection Act (CPA), which can carry significant financial consequences. Before initiating any enforcement action, the AG must provide notice; organizations then have 60 days to cure identified deficiencies. If cured, enforcement may be avoided.

A critical affirmative defense is available: an organization that is in compliance with a nationally or internationally recognized AI risk management framework — specifically the NIST AI RMF or ISO/IEC 42001 — and has followed the AG’s designating rules, may rebut allegations of non-compliance. This provision strongly incentivizes adoption of recognized governance frameworks as a baseline compliance strategy.

| STRATEGIC RECOMMENDATION — Colorado

Given the Colorado AI Act’s ongoing evolution and anticipated 2026 legislative session amendments, compliance teams should implement a modular governance architecture capable of absorbing definition changes without rebuilding entire programs. The NIST AI RMF affirmative defense creates a compelling business case for enterprise-wide framework adoption now, before enforcement begins. |

| UTAH

AI Policy Act (SB 149) • SB 226 Amendments |

Utah was among the first U.S. states to enact AI-specific legislation targeting consumer protection, with the Utah Artificial Intelligence Policy Act (UAIPA or AIPA) taking effect May 1, 2024. The state’s approach centers on disclosure obligations for generative AI in consumer and regulated professional contexts. In 2025, Utah enacted SB 226 to amend and narrow the original law’s scope, and SB 332 to extend its sunset date to July 1, 2027.

3.1 Utah Artificial Intelligence Policy Act (Original, SB 149)

| Official Title | Utah Artificial Intelligence Policy Act (UAIPA) |

| Bill Number | SB 149 (2024) |

| Effective Date | May 1, 2024 |

| Sunset Date | July 1, 2027 (extended by SB 332, 2025) |

| Regulates | Entities using generative AI in consumer transactions or regulated professional services |

| Enforcer | Utah Division of Consumer Protection; Utah Attorney General |

| Penalty | $2,500 per violation (admin); $5,000 per violation of court/admin order |

| Private Action | No direct private right of action under the Act |

Core Disclosure Obligations

The original UAIPA requires entities to provide a clear and conspicuous disclosure that a consumer is interacting with generative AI rather than a human when:

- The consumer asks whether they are interacting with AI; or

- The entity is operating in a “regulated occupation” — any occupation requiring a state license or certification — and is delivering regulated services

For regulated occupations, the disclosure must be proactive (given at the beginning of the interaction) and delivered in the modality of the interaction: orally for voice interactions, in writing for text-based exchanges.

What Constitutes “Generative AI” Under Utah Law

Utah defines generative AI as a system that: (a) is trained on data; (b) interacts with a person in Utah; and (c) generates outputs similar to outputs created by a human. This functional, behavior-based definition is notably narrower than some jurisdictions’ definitions, and excludes purely scripted or deterministic systems.

3.2 Senate Bill 226 — AI Consumer Protection Amendments

| Official Title | Artificial Intelligence Consumer Protection Amendments |

| Bill Number | SB 226 (2025 General Session) |

| Effective Date | May 7, 2025 |

| Effect | Narrows and clarifies UAIPA disclosure obligations; adds safe harbor; grants rulemaking authority to Division of Consumer Protection |

Narrowing of Disclosure Triggers

SB 226 makes three significant changes to the UAIPA’s disclosure regime:

- Consumer-request trigger: The requirement to disclose AI interaction when asked by the consumer now applies only when the consumer makes a “clear and unambiguous request” to determine whether they are interacting with a human or AI. Ambiguous or general questions do not trigger the obligation.

- Regulated occupation scope: Proactive disclosure for regulated occupations is now limited to “high-risk” interactions — defined as those involving the collection of sensitive personal information (health data, financial data, biometric data) or the provision of personalized advice that a consumer could reasonably rely upon for significant personal decisions (e.g., financial, legal, medical, or mental health advice).

- Safe harbor: Entities are not subject to enforcement if the generative AI system itself makes a clear and conspicuous disclosure, at the outset of and throughout the interaction, that it is a non-human AI. This safe harbor incentivizes building disclosure directly into AI interfaces rather than relying on operator-level compliance processes.
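The three changes above can be read as a small decision procedure. The Python sketch below is a simplified illustration of that reading, not the statutory test: the `Interaction` fields and the returned labels are hypothetical, and whether a request is "clear and unambiguous" or an interaction is "high-risk" remains a legal judgment that the code takes as an input.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    clear_request_from_consumer: bool   # consumer clearly asks "human or AI?"
    regulated_occupation: bool          # licensed or certified service being delivered
    high_risk: bool                     # sensitive data or personalized advice
    ai_self_discloses_throughout: bool  # disclosure at the outset and throughout


def utah_disclosure_status(i):
    """Simplified reading of the post-SB 226 disclosure triggers."""
    if i.ai_self_discloses_throughout:
        return "safe harbor"
    if i.regulated_occupation and i.high_risk:
        return "proactive disclosure required"
    if i.clear_request_from_consumer:
        return "disclosure required on request"
    return "no disclosure trigger"


# A licensed professional's chatbot giving personalized advice, with no built-in disclosure.
print(utah_disclosure_status(Interaction(False, True, True, False)))
```

The ordering reflects the design point made in the safe harbor bullet: a system that self-identifies throughout the interaction resolves the question before the other triggers are even evaluated.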

Enforcement and Penalties

The Division of Consumer Protection may impose administrative fines of up to $2,500 per violation. Courts or the Utah AG may impose civil penalties of up to $5,000 per violation of a court or administrative order. SB 226 further clarifies that violations are subject to injunctive relief, disgorgement of profits, and payment of the Division’s attorney fees and costs — making even individually small violations potentially significant in aggregate.

| PRACTICAL NOTE — Utah Safe Harbor

Utah’s SB 226 safe harbor is the most practically actionable provision in the state’s AI framework: by programming AI interfaces to self-identify at the start and throughout consumer interactions, organizations can substantially reduce enforcement exposure. This design-level compliance solution is preferable to relying on operator-level disclosure processes that may not be consistently implemented. |

| TEXAS

Texas Responsible Artificial Intelligence Governance Act (TRAIGA) |

Texas enacted TRAIGA on June 22, 2025, making it the second state after Colorado to pass broad AI governance legislation applicable to private-sector entities. However, TRAIGA’s approach diverges sharply from Colorado’s risk-based framework. Rather than imposing proactive risk management and impact assessment obligations across AI use cases, TRAIGA focuses on specific, categorical prohibitions on harmful AI practices and relies on intent-based liability rather than impact-based liability.

TRAIGA applies to any person or entity that does business in Texas, offers products or services to Texas residents, or develops or deploys AI systems within the state. This extraterritorial reach means that out-of-state organizations serving Texas residents must comply.

| Official Title | Texas Responsible Artificial Intelligence Governance Act |

| Bill Number | HB 149 (89th Legislature, 2025) |

| Signed | June 22, 2025 |

| Effective Date | January 1, 2026 |

| Regulates | Any person or entity conducting business in Texas or with Texas residents; developers and deployers of AI systems |

| Enforcer | Texas Attorney General (exclusive authority) |

| Penalties | Curable: $10,000–$12,000; Uncurable: $80,000–$200,000; Continuing: $2,000–$40,000/day |

| Private Action | No private right of action; AG complaint portal required |

| Cure Period | 60 days upon AG notice of violation |

| Local Preemption | Yes — expressly preempts city and county AI ordinances |

4.1 Prohibited AI Practices

TRAIGA’s primary mechanism for private-sector governance is a set of categorical prohibitions applicable to developers and deployers. Critically, virtually all prohibitions contain an intent requirement — liability attaches only where a party “intentionally” develops or deploys a system for a prohibited purpose. This is a deliberate departure from Colorado’s impact-based framework.

Behavioral Manipulation

Entities may not develop or deploy AI systems intentionally designed to manipulate human behavior so as to incite or encourage self-harm, harm to others, or criminal activity. This prohibition targets exploit-oriented AI design rather than unintended harmful outputs.

Unlawful Discrimination

TRAIGA prohibits development or deployment of AI systems with the intent to unlawfully discriminate against a protected class. Importantly, TRAIGA requires proof of discriminatory intent — disparate impact alone does not establish a violation. This is significantly more protective of developers than Colorado’s approach, which does not require intent. Financial institutions and insurance entities are granted additional carve-outs.

Constitutional Rights

No AI system may be developed or deployed with the sole intent to infringe, restrict, or impair rights guaranteed by the U.S. Constitution or Texas Constitution. While broad in scope, the “sole intent” standard effectively limits this provision to deliberate, targeted rights violations.

Child Exploitation and Unlawful Deepfakes

TRAIGA prohibits the development or distribution of AI systems intended to: produce or disseminate child sexual abuse material (CSAM); create or distribute unlawful sexually explicit deepfake content; or engage in text-based conversations that simulate sexual content while impersonating a minor.

Biometric Data (Government Entities Only)

Government agencies are prohibited from using AI to uniquely identify individuals through biometric data (fingerprints, voiceprints, iris scans) without informed consent, except in limited circumstances including security, fraud prevention, and law enforcement.

Social Scoring (Government Entities Only)

In a provision similar to Article 5 of the EU AI Act, TRAIGA prohibits governmental entities from deploying AI systems that evaluate individuals based on social behavior or personal characteristics to assign a “social score” that results in disproportionate or unjustified adverse treatment or the infringement of constitutional rights.

4.2 Government Agency Disclosure Obligations

State agencies and healthcare service providers have specific transparency obligations that, while primarily government-facing, affect private-sector organizations contracting with the state or providing AI-enabled services to Texas government:

- State agencies must provide clear and conspicuous notice to individuals before or at the time they interact with an AI system, regardless of whether the AI interaction would be obvious to a reasonable consumer

- Healthcare providers must disclose AI use to patients or representatives before or at the time of AI-assisted service delivery, with a limited exception for emergencies (disclosure must occur as soon as reasonably possible)

4.3 Regulatory Sandbox and Texas AI Advisory Council

TRAIGA creates two forward-looking institutional mechanisms:

36-Month Regulatory Sandbox

Businesses approved for participation may test innovative AI systems for up to 36 months within a controlled environment, free from the requirement to obtain licenses or other regulatory authorizations otherwise required to operate in Texas. In exchange, participants must share information about their AI systems with the state. This mechanism provides meaningful protection for novel use cases and can be particularly valuable for early-stage companies or organizations piloting disruptive AI applications.

Texas Artificial Intelligence Advisory Council

TRAIGA establishes an AI Advisory Council tasked with: identifying existing laws and regulations that impede AI innovation; analyzing opportunities for the state to improve operations through AI; and providing guidance on ethical AI development. The Council may issue reports to the Legislature and conduct training for state agencies, but may not adopt binding rules or override agency operations.

4.4 Enforcement Framework

The Texas Attorney General holds exclusive enforcement authority. Consumers may submit complaints through an AG-maintained online portal. Upon receiving a complaint, the AG may issue civil investigative demands requesting extensive information, including a high-level description of the AI system’s purpose; training data descriptions; input/output descriptions; performance metrics; and post-deployment monitoring practices.

Upon notice of a violation, the violating party has 60 days to cure. The penalty structure is as follows:

- Curable violations (not cured): $10,000–$12,000 per violation

- Violations where a false cure statement is submitted to the AG: $10,000–$12,000 per violation

- Uncurable violations: $80,000–$200,000 per violation

- Continuing violations (persisting beyond the cure period without submission of a cure statement): $2,000–$40,000 per day

- Licensed professionals: additional fines up to $100,000 and potential license suspension or revocation upon AG recommendation
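To see how these ranges compound, a rough exposure estimate can be computed from the figures listed above. The Python sketch below is illustrative only: it assumes that per-day amounts for continuing violations simply add to per-violation amounts, which this report does not establish, and the names `PENALTY_RANGES` and `exposure` are hypothetical.

```python
# Per-violation ranges in USD, taken from the list above.
PENALTY_RANGES = {
    "curable_not_cured": (10_000, 12_000),
    "uncurable": (80_000, 200_000),
}
CONTINUING_PER_DAY = (2_000, 40_000)


def exposure(kind, violations, continuing_days=0):
    """Return (low, high) total exposure in USD for a hypothetical scenario.

    Assumes per-day amounts are additive to per-violation amounts.
    """
    low, high = PENALTY_RANGES[kind]
    day_low, day_high = CONTINUING_PER_DAY
    return (
        low * violations + day_low * continuing_days,
        high * violations + day_high * continuing_days,
    )


print(exposure("curable_not_cured", violations=3, continuing_days=10))
# (50000, 436000)
```

Even under these simplified assumptions, the per-day component dominates quickly, which is why prompt use of the 60-day cure period matters.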

Safe Harbors and Affirmative Defenses

Organizations that can demonstrate substantial compliance with the NIST AI Risk Management Framework or a similarly recognized framework have an affirmative defense against TRAIGA enforcement actions. Additionally, liability does not attach for unintended third-party misuse of AI systems, provided the developer or deployer did not intentionally design for or enable the prohibited use.

| TRAIGA’s Intent Standard — Risk and Documentation Implications

TRAIGA’s intent-based standard offers broader protections than impact-based frameworks, but creates a documentation imperative: organizations must maintain detailed records of AI system purposes, design decisions, testing protocols, and intended use cases to credibly demonstrate the absence of prohibited intent in enforcement proceedings. The lack of formal documentation could make it difficult to rebut AG allegations even where no actual intent existed. |

| CROSS-STATE ANALYSIS

Comparisons, Conflicts & Definitional Divergences |

5.1 Requirements Matrix

The following matrix maps the key compliance requirements across all seven laws examined in this report. A checkmark indicates that the requirement exists in the law; a dash indicates the requirement is absent or inapplicable. Note that comparable requirements may differ significantly in scope, threshold, and detail.

* SB 942 is delayed to August 2, 2026.

| Requirement | CA AB 2013 | CA SB 942* | CA SB 53 | CO AI Act | UT AIPA | UT SB 226 | TX TRAIGA |

| Training Data Disclosure | ✓ | — | — | ✓ | — | — | — |

| AI Content Detection Tool | — | ✓ | — | — | — | — | — |

| AI Watermarking / Provenance | — | ✓ | — | — | — | — | — |

| Frontier Safety Framework | — | — | ✓ | — | — | — | — |

| Incident Reporting | — | — | ✓ | ✓ | — | — | — |

| Risk Management Policy | — | — | — | ✓ | — | — | — |

| Impact Assessments | — | — | — | ✓ | — | — | — |

| Consumer AI Disclosure | — | — | — | ✓ | ✓ | ✓ | — |

| High-Risk AI Classification | — | — | — | ✓ | — | ✓ | — |

| Prohibited AI Practices | — | — | — | — | — | — | ✓ |

| Regulatory Sandbox | — | — | — | — | — | — | ✓ |

| Appeal / Human Review Right | — | — | — | ✓ | — | — | — |

| Private Right of Action | — | — | — | — | — | — | — |

| AG Enforcement | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |
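For teams that track obligations in tooling, the matrix above can be encoded as a simple lookup. The following Python sketch is illustrative only: the `MATRIX` name and the `requirements_for` helper are hypothetical, the entries merely transcribe the checkmarks shown in the table, and the two enforcement rows are omitted because they describe enforcement rather than operator obligations.

```python
# Requirement -> laws marked with a checkmark in the matrix above.
# Transcribed from this report's table; not a substitute for the statutes.
MATRIX: dict[str, set[str]] = {
    "Training Data Disclosure": {"CA AB 2013", "CO AI Act"},
    "AI Content Detection Tool": {"CA SB 942"},
    "AI Watermarking / Provenance": {"CA SB 942"},
    "Frontier Safety Framework": {"CA SB 53"},
    "Incident Reporting": {"CA SB 53", "CO AI Act"},
    "Risk Management Policy": {"CO AI Act"},
    "Impact Assessments": {"CO AI Act"},
    "Consumer AI Disclosure": {"CO AI Act", "UT AIPA", "UT SB 226"},
    "High-Risk AI Classification": {"CO AI Act", "UT SB 226"},
    "Prohibited AI Practices": {"TX TRAIGA"},
    "Regulatory Sandbox": {"TX TRAIGA"},
    "Appeal / Human Review Right": {"CO AI Act"},
}


def requirements_for(laws: set[str]) -> list[str]:
    """Return the matrix rows triggered by any of the given laws, in table order."""
    return [req for req, covered_by in MATRIX.items() if covered_by & laws]


if __name__ == "__main__":
    # Hypothetical operator subject to the Colorado and Texas laws.
    for req in requirements_for({"CO AI Act", "TX TRAIGA"}):
        print(req)
```

Because comparable requirements differ in scope and threshold, a lookup of this kind is best treated as a starting checklist rather than a determination of what each statute requires.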

5.2 Definitional Conflicts and Compliance Complexity

The most significant practical challenge for multi-state organizations is the inconsistency of core definitional terms across state laws. Organizations with AI systems operating across California, Colorado, Utah, and Texas face material compliance complexity arising from the following key divergences:

“High-Risk” AI

Colorado defines high-risk AI functionally, based on whether the system is a substantial factor in “consequential decisions” in eight enumerated domains. Utah’s SB 226 defines “high-risk” narrowly, limited to certain professional interactions involving sensitive data or significant personal decisions. Texas does not use a risk-tier framework at all — instead prohibiting specific uses regardless of risk level. California’s AB 2013 and SB 53 use no high-risk threshold; SB 942 uses a minimum-user threshold (1 million monthly users) instead of a risk characterization.

“Deployer” vs. “Developer”

Colorado distinguishes carefully between developers (entities that create or substantially modify high-risk AI systems) and deployers (entities that use those systems to make consequential decisions), imposing distinct obligations on each. Texas uses both terms, but its obligations center on prohibited-practice provisions that apply equally to developers and deployers. California’s AB 2013 focuses exclusively on developers; SB 53 focuses on frontier developers; SB 942 focuses on “covered providers” (by user volume). Utah does not use the developer/deployer distinction.

“Algorithmic Discrimination” vs. “Discriminatory Intent”

Colorado’s CAIA prohibits “algorithmic discrimination” — unlawful differential treatment or disparate impact based on protected characteristics. There is no intent requirement; an impact-only showing suffices. Texas’s TRAIGA, by contrast, requires proof of intent to discriminate unlawfully. The gap between these standards is legally significant: a company in Colorado could face liability for a neutral AI system that produces discriminatory outcomes, while in Texas the same system would likely not trigger TRAIGA liability absent evidence of discriminatory purpose.

Geographic Scope

All four states assert extraterritorial reach in some form. Colorado’s Act applies to developers and deployers of high-risk AI systems operating in Colorado. California’s laws generally apply to systems made available to California residents. Utah’s laws apply to systems interacting with persons in Utah. TRAIGA applies to any entity conducting business in Texas or with Texas residents. Organizations not physically located in these states but serving customers there must assess and map their compliance obligations accordingly.

5.3 Potential Federal Preemption

A significant uncertainty for all state AI laws is the prospect of federal preemption. A proposed federal measure under consideration in Congress would impose a ten-year moratorium on state and local AI regulations, with an exception for measures designed to accelerate AI deployment. If enacted, this legislation could suspend TRAIGA, the Colorado AI Act, and other state laws.

Compliance professionals should: (a) monitor federal AI legislation closely; (b) design state-law compliance programs with modular architecture capable of being adjusted or suspended; and (c) avoid over-investing in state-law-specific governance structures that may need to be dismantled if federal law preempts state action.

| ENFORCEMENT & PENALTIES

Penalty Exposure Across All Examined Laws |

The following table consolidates enforcement mechanisms and penalty exposure across all examined laws. Compliance professionals should note that no examined law provides a private right of action: enforcement rests with state Attorneys General and other designated public enforcers (such as Utah’s Division of Consumer Protection), so penalty risk turns on each enforcer’s priorities. However, the AG offices in California and Texas have each demonstrated that AI is an active enforcement priority.

| Jurisdiction / Law | Max Penalty | Private Right of Action | Enforcer | Cure Period |

| CA AB 2013 | No explicit penalty (AG enforcement) | No | CA Attorney General | N/A |

| CA SB 942 (eff. Aug 2, 2026) | $5,000 per violation | No | CA AG / Local authorities | N/A |

| CA SB 53 | $1,000,000 per violation | No | CA Attorney General | N/A |

| CO AI Act | Unfair trade practice (AG action) | No | CO Attorney General | 60 days |

| UT AIPA / SB 226 | $2,500 admin fine; $5,000 court order | No | UT Div. Consumer Protection | N/A |

| TX TRAIGA | Curable: $10K–$12K; Uncurable: $80K–$200K; Continuing: $2K–$40K/day | No | TX Attorney General | 60 days |

6.1 Key Enforcement Observations

California’s High-Stakes SB 53

SB 53’s $1,000,000-per-violation penalty cap is the highest single-violation exposure of any examined law and reflects the gravity California attributes to frontier AI governance failures. The absence of a cure period in SB 53 (unlike TRAIGA and Colorado) means a single non-compliant deployment could immediately expose a company to maximum penalties.

Texas’s Tiered and Continuing Violation Penalties

TRAIGA’s penalty structure is notable for its daily accrual mechanism: a continuing violation may accrue $2,000–$40,000 per day after the cure period expires without a submitted cure statement. For organizations with widespread AI deployments, this can rapidly compound into material financial exposure.
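The compounding effect of daily accrual is easiest to see with a worked calculation. The Python sketch below uses only the penalty ranges summarized in this report; the function name, its parameters, and the simple additive stacking of per-violation and per-day amounts are assumptions made for illustration, not a statement of how a court or the AG would compute penalties.

```python
# Penalty ranges in USD as summarized in this report (not statutory text).
CURABLE_RANGE = (10_000, 12_000)      # per curable violation left uncured
UNCURABLE_RANGE = (80_000, 200_000)   # per uncurable violation
CONTINUING_RANGE = (2_000, 40_000)    # per day of continuing violation


def exposure_range(curable: int, uncurable: int, continuing_days: int) -> tuple[int, int]:
    """Return (low, high) illustrative exposure.

    Assumption: amounts are summed across categories, and `continuing_days`
    is the total number of violation-days after the cure period.
    """
    low = (curable * CURABLE_RANGE[0]
           + uncurable * UNCURABLE_RANGE[0]
           + continuing_days * CONTINUING_RANGE[0])
    high = (curable * CURABLE_RANGE[1]
            + uncurable * UNCURABLE_RANGE[1]
            + continuing_days * CONTINUING_RANGE[1])
    return low, high


if __name__ == "__main__":
    # Hypothetical scenario: 3 uncured curable violations, 1 uncurable
    # violation, and 30 days of continuing violation.
    low, high = exposure_range(curable=3, uncurable=1, continuing_days=30)
    print(f"${low:,} to ${high:,}")  # $170,000 to $1,436,000
```

In this hypothetical scenario the 30 days of continuing violation account for $1,200,000 of the $1,436,000 upper bound, which illustrates why the daily accrual tier tends to dominate exposure once the cure period lapses.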

Colorado’s “Unfair Trade Practice” Framework

Colorado’s CAIA does not specify a numerical cap per violation but treats violations as unfair trade practices under the Colorado Consumer Protection Act. This connects AI violations to Colorado’s broader CPA enforcement machinery, which can include injunctive relief, disgorgement of profits, and recovery of attorney’s fees — in addition to civil fines.

The 60-Day Cure Period in Colorado and Texas

Both Colorado and Texas require the AG to provide notice and allow 60 days to cure before commencing enforcement. This grace period is a meaningful compliance safety valve: organizations that discover potential violations — whether through internal audits, complaints, or third-party reviews — have a viable window to remediate and document the cure before facing formal liability. This reinforces the importance of proactive monitoring and internal compliance programs over reactive enforcement management.
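Compliance teams that log AG notices may find it useful to compute the cure window programmatically. The following is a minimal Python sketch, assuming the 60 days are counted as calendar days starting from the notice date; the report does not specify the counting rules, and the helper names are hypothetical.

```python
from datetime import date, timedelta

CURE_PERIOD_DAYS = 60  # cure period described above for Colorado and Texas


def cure_deadline(notice_date: date) -> date:
    """Last day of the cure window, assuming calendar days from the notice date."""
    return notice_date + timedelta(days=CURE_PERIOD_DAYS)


def days_remaining(notice_date: date, today: date) -> int:
    """Days left to cure as of `today`; zero once the window has closed."""
    return max((cure_deadline(notice_date) - today).days, 0)


if __name__ == "__main__":
    notice = date(2026, 3, 2)  # hypothetical notice date
    print(cure_deadline(notice))                      # 2026-05-01
    print(days_remaining(notice, date(2026, 4, 1)))   # 30
```

Counsel should confirm how each statute counts days and when notice is deemed received before relying on a computed deadline.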

| BUSINESS COMPLIANCE STRATEGY

Recommended Actions for Multi-State AI Operators |

For organizations operating AI systems across the four examined states, compliance planning must be multi-dimensional, addressing both current law and the rapidly evolving legislative trajectory. The following strategic recommendations are organized by priority tier.

7.1 Immediate Priority Actions

- Complete AI System Inventory

Identify and categorize all AI systems deployed or under development within your organization. Map each system to the applicable state laws based on: the jurisdictions in which the system is used or accessible; the nature of outputs (generative, predictive, recommendation-based); user volumes; training compute; and consequential decision-making involvement. This inventory is the foundational prerequisite for every subsequent compliance action.

- Classify Under Colorado’s “High-Risk” Framework

Colorado’s CAIA creates the most operationally intensive obligations and will affect the widest range of enterprise AI applications. Determine which systems make or substantially factor into consequential decisions in Colorado’s eight covered domains. For each such system, initiate impact assessment and risk management program development immediately, as these are time-intensive compliance deliverables.

- Publish California Disclosures

AB 2013 and SB 53 obligations applied as of January 1, 2026. Developers of generative AI systems available to California residents should already have published AB 2013-compliant training data disclosures, and frontier developers (>10²⁶ FLOPs) should already have published SB 53-compliant Frontier AI Frameworks and transparency reports; organizations that have not done so should treat publication as an immediate remediation item. SB 942 disclosures (for large providers) must be ready by August 2, 2026.

- Implement Utah Disclosure Mechanisms

For AI systems used in consumer transactions or regulated professional contexts in Utah, ensure that: (a) systems self-identify as AI at the outset of interactions (to claim SB 226 safe harbor); and (b) any regulated occupation deployments in high-risk interaction contexts provide proactive disclosure. This is largely a technical and UX compliance action.

- Audit Texas Deployments for Prohibited Practices

Review all AI systems accessible to Texas residents or used in Texas operations for potential TRAIGA prohibited-practice exposure. Focus on systems capable of behavioral influence, discrimination-sensitive decision-making, deepfake generation, or biometric data processing. Document legitimate business purposes and design intent for all relevant systems.
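The inventory-and-mapping step described in the first action above can be prototyped as a small screening routine. The Python sketch below is a simplified first-pass filter built from the triggers mentioned in this report (jurisdiction, generative output, the 1 million monthly-user figure for SB 942, the 10²⁶ FLOPs figure for SB 53, and involvement in consequential decisions). The `AISystem` fields, the `applicable_laws` function, and the exact boundary handling of each threshold are assumptions for illustration; actual applicability depends on the statutory definitions.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    """One inventory record; all field names are hypothetical."""
    name: str
    jurisdictions: set[str]               # state codes where used or accessible
    generative: bool = False
    monthly_users: int = 0
    training_flops: float = 0.0
    consequential_decisions: bool = False
    consumer_facing: bool = False


def applicable_laws(system: AISystem) -> list[str]:
    """First-pass screen of which examined laws may warrant review."""
    laws: list[str] = []
    if "CA" in system.jurisdictions:
        if system.generative:
            laws.append("CA AB 2013")
            # Assumption: strict "greater than" at the user threshold.
            if system.monthly_users > 1_000_000:
                laws.append("CA SB 942")
        if system.training_flops > 1e26:
            laws.append("CA SB 53")
    if "CO" in system.jurisdictions and system.consequential_decisions:
        laws.append("CO AI Act")
    if "UT" in system.jurisdictions and system.consumer_facing:
        laws.append("UT AIPA / SB 226")
    if "TX" in system.jurisdictions:
        # Prohibited-practice rules are not tied to a risk tier.
        laws.append("TX TRAIGA")
    return laws


if __name__ == "__main__":
    screener = AISystem(
        name="resume-screener",
        jurisdictions={"CO", "TX"},
        consequential_decisions=True,
    )
    print(applicable_laws(screener))  # ['CO AI Act', 'TX TRAIGA']
```

A screen of this kind only flags systems for legal review; it errs toward over-inclusion for Texas because the prohibited-practice analysis turns on intent and use rather than on a measurable threshold.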

7.2 Medium-Term Compliance Program Development

Adopt a Recognized AI Risk Management Framework

Adoption of the NIST AI Risk Management Framework (NIST AI RMF) or ISO/IEC 42001 is the single highest-value compliance investment available for multi-state operators. Both Colorado and Texas explicitly provide affirmative defenses for organizations compliant with these frameworks. California’s SB 53 also references these standards in the context of frontier AI frameworks. A governance program built on NIST AI RMF provides a defensible compliance baseline that can be mapped to individual state requirements.

Develop a Multi-State AI Governance Policy Suite

Implement enterprise-level AI governance documentation including: an AI Acceptable Use Policy; an AI Risk Classification Protocol (mapping to Colorado’s high-risk definitions and Texas’s prohibited practices); AI Development Lifecycle Standards incorporating privacy-by-design and bias mitigation; a Training Data Governance Policy aligned with California AB 2013; an AI Incident Response Procedure covering SB 53 and Colorado disclosure timelines; and vendor / third-party AI due diligence standards.

Colorado Impact Assessment Infrastructure

Organizations subject to the Colorado CAIA should establish a repeatable impact assessment process. Consider deploying purpose-built tools or engaging specialized counsel for annual assessment completion. Impact assessments must be retained for three years post-deployment; document management and version control infrastructure is essential.

Consumer Rights Infrastructure

Colorado’s appeal and human review rights create significant operational requirements. Organizations relying on automated AI pipelines for consequential decisions must build: pre-decision disclosure workflows; adverse-decision notification systems; consumer appeal intake and tracking processes; and human review escalation procedures where technically feasible.

7.3 Strategic Considerations

Monitor Federal Preemption Developments

The possibility of federal legislation imposing a moratorium on state AI regulations represents the most significant exogenous variable in multi-state compliance planning. Organizations should design governance programs for modularity, enabling rapid adaptation if federal law preempts or supersedes state requirements.

Monitor Colorado 2026 Legislative Amendments

Colorado lawmakers have signaled intent to amend the CAIA during the regular 2026 legislative session. Core provisions subject to potential revision include definitions of “consequential decision,” impact assessment content requirements, and consumer disclosure obligations. Compliance teams should track amendment proposals closely and avoid over-engineering compliance around provisions that may change.

Leverage Texas Sandbox for Novel AI Applications

Organizations developing or testing innovative AI systems for Texas markets should evaluate the TRAIGA regulatory sandbox. The 36-month protection from licensing and regulatory authorization requirements, in exchange for sharing system information with the state, offers meaningful legal cover during development and early deployment phases.

Train Internal Stakeholders

AI governance compliance cannot be siloed in legal or compliance teams. Engineering, product, data science, and business development teams must understand the regulatory implications of AI design decisions. Implement targeted training programs covering: what constitutes a “consequential decision” under Colorado’s law; documentation practices for California’s training data and frontier AI disclosures; intent documentation for Texas compliance; and Utah’s disclosure trigger standards.

| SUMMARY RECOMMENDATION

For most multi-state AI operators, the NIST AI RMF provides the most efficient compliance anchor. Organizations that build their governance programs around this framework will be positioned to invoke the affirmative defenses available in Colorado and Texas, to align with the best-practice standards referenced in California’s SB 53, and to demonstrate “reasonable care” in any jurisdiction. Layer state-specific obligations (training data disclosures, consumer notices, impact assessments) as modular requirements on top of this foundation. |

| APPENDIX

Key Defined Terms Across Examined Laws |

The following table summarizes key statutory definitions across the examined laws. Definitional inconsistency is a significant source of compliance complexity for multi-state operators.

| Term | CA AB 2013 | CA SB 53 | CO AI Act | UT AIPA/SB226 | TX TRAIGA |

| Generative AI | System generating derived synthetic content (text, image, video, audio) | Foundation model >10²⁶ FLOPs | AI system that can generate derived synthetic content | System trained on data; generates human-like outputs | Machine-based system inferring from inputs to generate outputs |

| Developer | Person who designs, codes, produces, or substantially modifies an AI system | Entity that trained or initiated training of a frontier model | Entity that creates, codes, produces, or substantially modifies a high-risk AI system | Not separately defined | Persons who create, code, produce, modify an AI system |

| Deployer | N/A | N/A | Entity that deploys a high-risk AI system for consequential decisions | Not separately defined | Persons who deploy AI systems |

| High-Risk AI | Not applicable (threshold-free) | Not applicable (compute threshold) | AI making consequential decisions in 8 covered domains | “High-risk interaction”: sensitive data or significant decision | Not defined; focus is on prohibited practices |

| Consequence/Impact | N/A | Catastrophic risk (mass casualties, critical infrastructure) | Material legal/significant effect on access to services | High-risk: significant personal decisions | Intentional harm, discrimination, constitutional infringement |