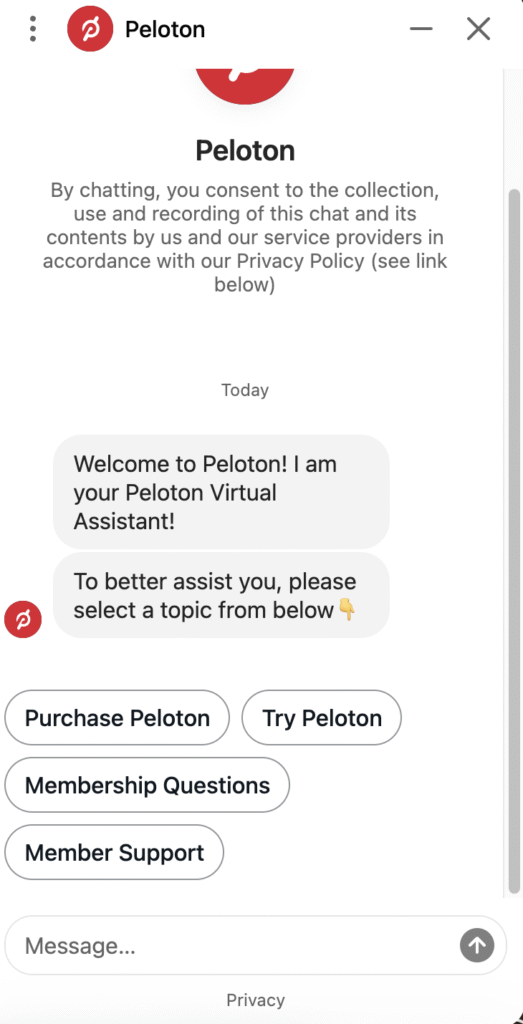

Chatbots are now core features of websites, customer support platforms, and financial services, but their use for consumer data collection is under growing legal scrutiny. Recent regulations, privacy lawsuits, and multi-million-dollar fines make it clear: businesses must provide clear disclosure notices when collecting customer information through chatbots, or risk serious penalties. In fact, Peloton paid out almost $10 million over undisclosed data collection by its chatbot. We work with some of the best privacy attorneys and consultants in the world, who can advise on how to get your data governance under control, and we can assist with the software to automate those compliance requirements.

Why Disclosure Notices Matter

Consumer collection disclosure notices inform users that their interactions with chatbots may be recorded, analyzed, or used for purposes like service improvement, AI training, and marketing automation. They are crucial for:

- Legal compliance with state and international data privacy laws, such as the California Consumer Privacy Act (CCPA), the Colorado AI Act, and Europe’s GDPR, according to leading law firm Cooley.
- Maintaining consumer trust by clarifying that users are interacting with a bot, not a human, and that their data may be processed or stored.
- Preventing deception lawsuits for failing to adequately inform users of chatbot functions and data collection practices.
- Avoiding regulator fines and privacy class actions for undisclosed or inappropriate data collection and sharing.

Disclosure Language Examples for Chatbots to Help You Avoid Expensive Lawsuits

To ensure clarity and compliance, chatbot notices should be conspicuous, plain-spoken, and tailored to the context. Here are some sample language options and disclaimers for chatbot implementations. We still ask that you speak with one of our friendly privacy consultants, who can do a deeper dive:

General Disclosure

“This chat is powered by an artificial intelligence system. Your messages may be stored and used to improve our services.”

Data Collection Notice

“Conversations with this bot may be recorded and analyzed. Data you provide could be used for quality assurance, service improvement, or AI training.”

Consent-Based Notice

“By proceeding, you acknowledge that your chat conversation will be shared with third-party technology providers for analysis and improvement of this service.”

Commercial Transactions

“Our automated chatbot may guide you through product or service offerings. Please be advised that your responses may be stored and used for order fulfillment or customer service. For more details, review our privacy policy.”

Disclaimers to Prevent Deception

“You are currently interacting with a virtual agent, not a live person. Please do not provide sensitive personal information unless prompted by a secure workflow.”

Customize these notices based on the type of data collected, involvement of third parties, and the legal jurisdiction you operate in.
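As a rough illustration of that customization step, the sketch below assembles a notice from reusable fragments depending on how a given chatbot handles data. The fragment wording is adapted from the samples above; the `ChatContext` fields and the `build_notice` function are hypothetical names invented for this example, and jurisdiction-specific wording is deliberately left out because it should come from counsel rather than code.

```python
from dataclasses import dataclass

# Fragments adapted from the sample notices above. The keys are hypothetical labels.
NOTICES = {
    "bot": "You are currently interacting with a virtual agent, not a live person.",
    "storage": "Your messages may be stored and used to improve our services.",
    "training": "Data you provide could be used for quality assurance, "
                "service improvement, or AI training.",
    "third_party": "Your chat conversation will be shared with third-party "
                   "technology providers for analysis and improvement of this service.",
    "commerce": "Your responses may be stored and used for order fulfillment "
                "or customer service.",
}


@dataclass(frozen=True)
class ChatContext:
    """Hypothetical description of how one chatbot deployment handles data."""
    used_for_ai_training: bool = False
    shared_with_third_parties: bool = False
    handles_orders: bool = False


def build_notice(ctx: ChatContext) -> str:
    """Join the fragments that apply to this deployment into one notice."""
    parts = [NOTICES["bot"], NOTICES["storage"]]  # always disclosed
    if ctx.used_for_ai_training:
        parts.append(NOTICES["training"])
    if ctx.shared_with_third_parties:
        parts.append(NOTICES["third_party"])
    if ctx.handles_orders:
        parts.append(NOTICES["commerce"])
    return " ".join(parts)


if __name__ == "__main__":
    print(build_notice(ChatContext(shared_with_third_parties=True)))
```

Keeping the fragments in one place means a wording change approved by legal review only has to be made once, and every chatbot that shares a practice discloses it the same way.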

Major Fines and Lawsuits from Non-Disclosure

Regulatory enforcement is intensifying, and so are privacy lawsuits by consumers who believe their chatbot conversations were intercepted or reused without proper consent. Law firms have picked up on this, and regulators are eager to raise money through privacy enforcement.

Italy’s Fine Against OpenAI

In late 2024, Italy’s privacy regulator fined OpenAI €15 million (approximately $15.6 million) for failing to adequately inform users about how ChatGPT collects and processes user data, including the use of chat interactions for AI training. The penalty followed months of investigation and marked one of the largest privacy fines levied against an AI company to date.

U.S. Lawsuits and Enforcement

Dozens of lawsuits in the U.S., including against Meta and other companies, allege illegal wiretapping, recording, and interception of chat communications through automated bots, sometimes with class claims seeking hundreds of millions in damages. Many cite states’ two-party consent laws (like California’s CIPA), which require notification to all parties before chat communications are recorded or analyzed by third parties.

The Peloton Lawsuit: A Cautionary Tale

Peloton Interactive, the well-known exercise brand, recently became a centerpiece in the legal debate over chatbot disclosures and data usage. In 2023, a class-action lawsuit under California’s Invasion of Privacy Act (CIPA) accused Peloton of passing chat logs—including user messages, IP addresses, and device information—to the third-party AI firm Drift without proper customer consent.

- The complaint argued that website users “were not notified the content of the chat was captured by Drift to be stored and analyzed,” and that chat logs were used for AI training and product enhancement, not solely for customer service.
- Despite Peloton’s attempt to dismiss the case, the court allowed the claims to proceed, emphasizing that customers must be clearly told when third-party technology is intercepting or processing their communications.

The central issue: without a prominent, plain-language disclosure that third parties would access and process chat data (and possibly use it to train AI or for shareholder benefit), businesses risk violating wiretapping statutes and privacy laws. This can result in class actions, regulatory investigations, and significant reputational harm.

Best Practices for Chatbot Disclosure Compliance

To avoid lawsuits and regulatory penalties, companies should set up consent management software with Captain Compliance and follow this advice:

- Display conspicuous disclosure notices before or at the start of any chatbot interaction.
- Provide details on what data is collected, how it is used, and whether third parties have access.
- Offer opt-in/opt-out choices, especially for sensitive personal or financial information.
- Update privacy policies to include chatbot data usage.
- Conduct regular reviews and audits of data sharing and technology vendor contracts, and ensure vendors cannot use consumer data for their own enrichment without explicit consent.
- Train staff and update technical workflows to follow disclosure and consent requirements across all chatbot platforms.
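The first and third practices above (show the notice first, then honor an opt-in or opt-out) can be sketched as a simple consent gate. This is a minimal illustration under stated assumptions, not a description of any particular consent management product: `ChatSession`, `ConsentRecord`, and their methods are hypothetical names, and a real deployment would also need to persist the consent record and block any third-party scripts until consent is given.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class ConsentRecord:
    """Hypothetical audit entry: which notice version was accepted, and when."""
    notice_version: str
    accepted_at: datetime


@dataclass
class ChatSession:
    """Hypothetical chat session that refuses input until the user opts in."""
    notice_text: str
    notice_version: str
    consent: Optional[ConsentRecord] = None
    transcript: List[str] = field(default_factory=list)

    def show_notice(self) -> str:
        # The UI should render this before the input box is enabled.
        return self.notice_text

    def accept(self) -> None:
        # Record the opt-in with a UTC timestamp for later audits.
        self.consent = ConsentRecord(self.notice_version, datetime.now(timezone.utc))

    def withdraw(self) -> None:
        # Opt-out: later messages are rejected until the user accepts again.
        self.consent = None

    def send(self, message: str) -> bool:
        """Store the message only if consent is on file; report whether it was stored."""
        if self.consent is None:
            return False
        self.transcript.append(message)
        return True


if __name__ == "__main__":
    session = ChatSession("This chat is powered by an AI system.", "v1")
    print(session.show_notice())
    print(session.send("hello"))  # rejected: no consent recorded yet
    session.accept()
    print(session.send("hello"))  # stored after opt-in
```

Storing the notice version alongside the timestamp is a design choice that makes audits easier: when the disclosure wording changes, it is possible to tell which users agreed to which text.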

Why This Matters for Privacy & Compliance Teams

For privacy and compliance teams, disclosure notices are not optional; they are a frontline defense against costly litigation and loss of consumer trust. With regulators and courts signaling that even unintentional non-disclosure can be punished harshly, businesses must treat chatbot compliance as a top priority. The Peloton case demonstrates how easily a lack of clarity can lead to drawn-out lawsuits and negative publicity. When in doubt, over-disclose rather than under-disclose.

As AI-driven chatbots become more capable and pervasive, the risks of privacy violations and non-compliance grow. Clear, robust consumer collection disclosure notices are essential legal, ethical, and brand-protecting tools. Businesses should make user transparency a key part of their chatbot experience. The cost of non-compliance can be millions in fines or, worse, a damaged reputation that lasts for years.