When Algorithms Harm: Rethinking Disgorgement as a Remedy for AI Misconduct

Artificial intelligence regulation has rapidly coalesced around a familiar structure: ex ante risk assessment, documentation, and mitigation. Legislators and regulators increasingly require organizations to identify foreseeable algorithmic risks, evaluate their likelihood and severity, and implement controls designed to prevent harm before it occurs. This framework now dominates AI governance discourse in the United States and […]

Moltbot and the Privacy Risks of Agentic AI Infrastructure

For privacy professionals, Moltbot, formerly known as Clawdbot (the name has already been changed following a dispute with Anthropic over Claude), is not interesting because it is clever, efficient, or viral. It is interesting because it represents a structural shift in how data is accessed, processed, and acted upon by AI systems without the friction points that […]

Moltbook Privacy Alert: Autonomous AI Networks

From a privacy professional’s perspective, Moltbook is not interesting because it is novel, viral, or strange. It is interesting because it exposes a structural blind spot in modern data protection regimes: autonomous, AI-to-AI environments where personal data, behavioral inference, and decision-making occur without clear human authorship, intent, or accountability. Moltbook is being described as a […]

Beyond the Protocol: The Hidden Architecture of AI Agent Memory and Power

Is AI agent autonomy an illusion? This piece offers a critical analysis of governance gaps in the Model Context Protocol era. When OpenAI’s CEO Sam Altman announced that AI agents would soon handle your driver’s license renewal, the promise was simple: convenience without complexity. But beneath this glossy narrative lies a more […]

HackerOne Introduces a Framework to Protect Good-Faith AI Research

Independent security research has long helped identify weaknesses in software before they cause widespread harm. But as artificial intelligence systems grow more complex and influential, the legal environment surrounding AI research has become increasingly uncertain. A new voluntary framework released by HackerOne seeks to address that uncertainty by defining what constitutes good-faith AI research and […]

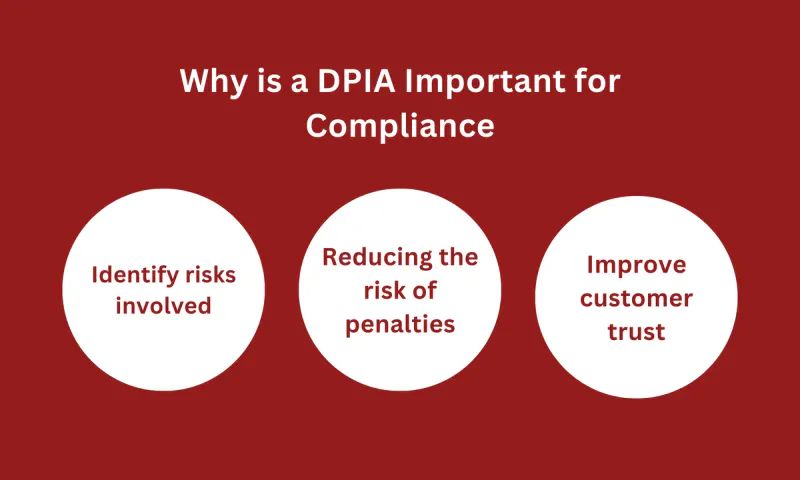

AI Risk Assessment vs. Privacy Impact Assessment

There are essential distinctions that compliance leaders must drill down on, distinctions that did not even exist a decade ago. The first is the AI risk assessment, a growing requirement as new AI laws and regulations sprout and change month to month. Those who have conducted privacy impact assessments (PIAs) before will find this a bit easier […]

The Convergence of Data Privacy, Cybersecurity, and Incident Response

The convergence of data privacy, cybersecurity, and incident response has evolved from a nice-to-have collaboration into an unbreakable alliance. Think of it like a high-stakes orchestra: privacy sets the score (what data can be collected, processed, and shared), cybersecurity builds the fortified stage and soundproof walls (protecting against unauthorized access and manipulation), and incident response […]

Artificial Intelligence Beyond “Normal Technology”: Risk, Governance, and the Reconfiguration of Socio-Technical Power

I. Introduction: Why “Normal Technology” Is an Incomplete Frame. Recent scholarship has sought to demystify artificial intelligence (AI) by framing it as “normal technology”, a continuation of historical patterns of technological development rather than a civilizational rupture. This reframing performs an important corrective function. It counters exaggerated claims […]

How the Legal Basis for AI Training Is Framed in Data Protection Guidelines: A Multi-Jurisdictional Doctrinal Analysis

I. The rapid expansion of artificial intelligence (AI) systems trained on vast quantities of personal data has placed unprecedented strain on the legal foundations of data protection law. While much scholarly and regulatory attention has focused on issues of transparency, accountability, and downstream harms, a more foundational question remains insufficiently resolved: on what lawful basis […]

Ofcom Investigates AI Companion Chatbot Service Under UK Online Safety Rules

Ofcom has opened a formal investigation into an AI companion chatbot service operated by Novi Ltd, signaling that the UK’s Online Safety Act regime has moved decisively into active enforcement against AI-driven products. The regulator’s focus is whether the service has implemented highly effective age assurance measures to prevent children from accessing pornographic or otherwise […]