Schubert Jonckheer & Kolbe LLP, Edelsberg Law of Aventura, Florida, and three other plaintiffs’ firms are investigating a data breach that led to unauthorized access to the sensitive information of individuals affiliated with Mercor.io. Below is a detailed breakdown of the scandal, which also involves GRC audit startup Delve. We previously covered Delve’s SOC 2 scandal in detail, and we are now seeing the fallout from those actions.

Paper Shields and Stolen Data: How a Fake Compliance Empire and a 40-Minute Supply Chain Attack Left 40,000 People Exposed — and Silicon Valley Facing a Legal Reckoning

The Convergence

There are two Silicon Valley scandals unfolding simultaneously in the spring of 2026. On the surface, they appear unrelated. One involves a $10 billion AI hiring startup whose contractor database was breached in a sophisticated supply chain attack, exposing the Social Security numbers, biometric data, and video interviews of more than 40,000 people. The other involves a $300 million Y Combinator darling accused of running what a whistleblower calls “fake compliance as a service” — fabricating SOC 2 audit reports, generating pre-written conclusions before a single piece of evidence was reviewed, and routing clients through rubber-stamp certification mills masquerading as independent auditors.

They are not two separate scandals. They are one.

The Mercor Lawsuit Bombshell: A $300M Compliance Fraud, a 40-Minute Hack, and 40,000 Victims Who Never Saw It Coming

TechCrunch described the convergence as “Silicon Valley’s two biggest dramas intersecting.” The Mercor breach collided with an already-unfolding scandal involving Delve Technologies, the GRC automation startup that had issued LiteLLM’s SOC 2 and ISO 27001 compliance certifications. What emerged from that collision is a case study in systemic failure — of supply chain security, of compliance integrity, and of the regulatory frameworks meant to protect the people whose most sensitive data sits at the center of it all. The legal fallout has only just begun.

Part I: The Breach — 40 Minutes That Exposed Everything

The Target

Mercor was founded by three Thiel Fellows in 2022. By October 2025, it had raised $350 million in Series C funding at a $10 billion valuation. The company operates as an AI talent marketplace, connecting subject matter experts — doctors, lawyers, scientists, engineers — with AI labs that need data labeling and model training work. Mercor works with leading companies such as OpenAI, Anthropic, Meta, and Google. That client roster is not merely impressive; it is legally significant. It means that what was stolen from Mercor’s systems was not just contractor data — it was the operational substrate of the AI industry’s most sensitive research pipelines.

The Attack Vector

The attack that reached Mercor originated several steps upstream. A threat actor group known as TeamPCP compromised the CI/CD pipeline of LiteLLM, an open-source Python library used by millions of developers to connect applications to AI services, with 97 million monthly downloads and a presence in an estimated 36% of cloud environments. TeamPCP had earlier used a supply chain attack on Trivy, a widely used security scanner, to obtain credentials belonging to a LiteLLM maintainer.

On March 27, 2026, the group used those stolen credentials to publish two poisoned versions: 1.82.7 and 1.82.8. Both were live on PyPI for about 40 minutes before being pulled. Forty minutes was enough. The packages contained credential-stealing code. Any company that auto-installed the update during that window was at risk.
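The exposure described above depends on two things: whether a project accepted new releases automatically, and whether it ended up on one of the two poisoned versions. The following is a minimal sketch of both checks, not a tool used by any party in this story. The version numbers come from the reporting above; the function names are hypothetical, and the pin check is a simple heuristic for `requirements.txt` lines rather than a full parser.

```python
from importlib import metadata
from typing import Optional

# Poisoned releases named in the reporting above.
COMPROMISED_VERSIONS = {"1.82.7", "1.82.8"}


def is_compromised(version: str) -> bool:
    """Return True if the version string is one of the poisoned releases."""
    return version.strip() in COMPROMISED_VERSIONS


def is_exactly_pinned(requirement: str) -> bool:
    """Heuristic: True if a requirements.txt line pins one exact version."""
    spec = requirement.split("#", 1)[0]   # drop trailing comments
    spec = spec.split(";", 1)[0].strip()  # drop environment markers
    if "==" not in spec:
        return False
    version = spec.split("==", 1)[1].strip()
    # Wildcards such as 1.82.* still float, so they do not count as a pin.
    return bool(version) and "*" not in version and "," not in version


def installed_version(dist_name: str = "litellm") -> Optional[str]:
    """Return the installed version of a distribution, or None if absent."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    found = installed_version()
    if found is None:
        print("litellm is not installed in this environment")
    elif is_compromised(found):
        print(f"litellm {found} is one of the poisoned releases")
    else:
        print(f"litellm {found} is not one of the poisoned releases")
```

An exact pin only helps if it points at a clean release. Pip's hash-checking mode (`--require-hashes`) goes a step further by refusing any artifact whose hash is not listed in the requirements file.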

The payload was sophisticated. Version 1.82.7 embedded base64-encoded malware directly into the library’s proxy server code, where it executed on import and harvested API keys, tokens, and cloud credentials. With those credentials, attackers moved through Mercor’s systems and reached Slack, ticketing tools, source code, and the contractor database. The hacker group Lapsus$ posted a sample of the data. Security researchers believe Lapsus$ worked with TeamPCP.
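For readers who want a concrete picture of the general pattern, a base64-encoded payload that is decoded and run when a module loads, here is a minimal detection sketch. It is an illustration only and not a reconstruction of the actual LiteLLM payload, whose exact form is not described here. The function name is hypothetical, and the check is a narrow heuristic that flags `exec` or `eval` calls whose arguments involve `b64decode`. It parses source text and never executes it.

```python
import ast

# Built-ins that run dynamically supplied code.
EXEC_NAMES = {"exec", "eval"}


def flags_decode_then_exec(source: str) -> bool:
    """Return True if exec/eval is called on something involving b64decode."""
    tree = ast.parse(source)  # parse only; the source is never executed
    for node in ast.walk(tree):
        is_exec_call = (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in EXEC_NAMES
        )
        if not is_exec_call:
            continue
        # Look anywhere inside the call for a reference to b64decode.
        for inner in ast.walk(node):
            name = getattr(inner, "attr", None) or getattr(inner, "id", None)
            if name == "b64decode":
                return True
    return False


if __name__ == "__main__":
    suspicious = 'import base64\nexec(base64.b64decode("cHJpbnQoMSk="))\n'
    benign = 'import base64\ndata = base64.b64decode("aGk=")\nprint(data)\n'
    print(flags_decode_then_exec(suspicious))
    print(flags_decode_then_exec(benign))
```

A heuristic this simple is easy to evade, for example by aliasing the function names, so it is a teaching aid rather than a defense.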

What Was Stolen

The breach exposed the personal data of 40,000+ contractors, proprietary source code, video interviews, and potentially the AI training methodologies of multiple frontier labs. The leaked database holds full names, email addresses, work history, and Social Security numbers for many U.S. contractors. It also holds video footage of interviews — faces, voices, screen shares. For some users, ID documents like passports were stored too.

The Lapsus$ cybercriminal group listed Mercor on its leak site and claimed to possess four terabytes of stolen data. On March 31, 2026, Mercor confirmed the supply chain attack to employees and to publications such as TechCrunch. Critically, Mercor has not reported the attack to state attorney general offices, an omission that may violate federal or state laws. For compliance professionals, that disclosure failure is not a footnote. It is a separate and potentially compounding legal exposure.

The Institutional Fallout

Meta, which signed a $27 billion AI infrastructure deal with Nebius Group in March 2026 and has forecast capital expenditures of between $115 billion and $135 billion for the year, has indefinitely paused all work with Mercor. OpenAI started its own review. Anthropic has not publicly commented on its exposure. Google is understood to be assessing the breach’s scope. The guarded public posture of all four companies speaks to the strategic sensitivity of what may have been compromised. When multiple competitors rely on the same third-party data supplier, a single breach can expose the competitive secrets of all of them at once.

Part II: The Lawsuits — Five Cases in Seven Days

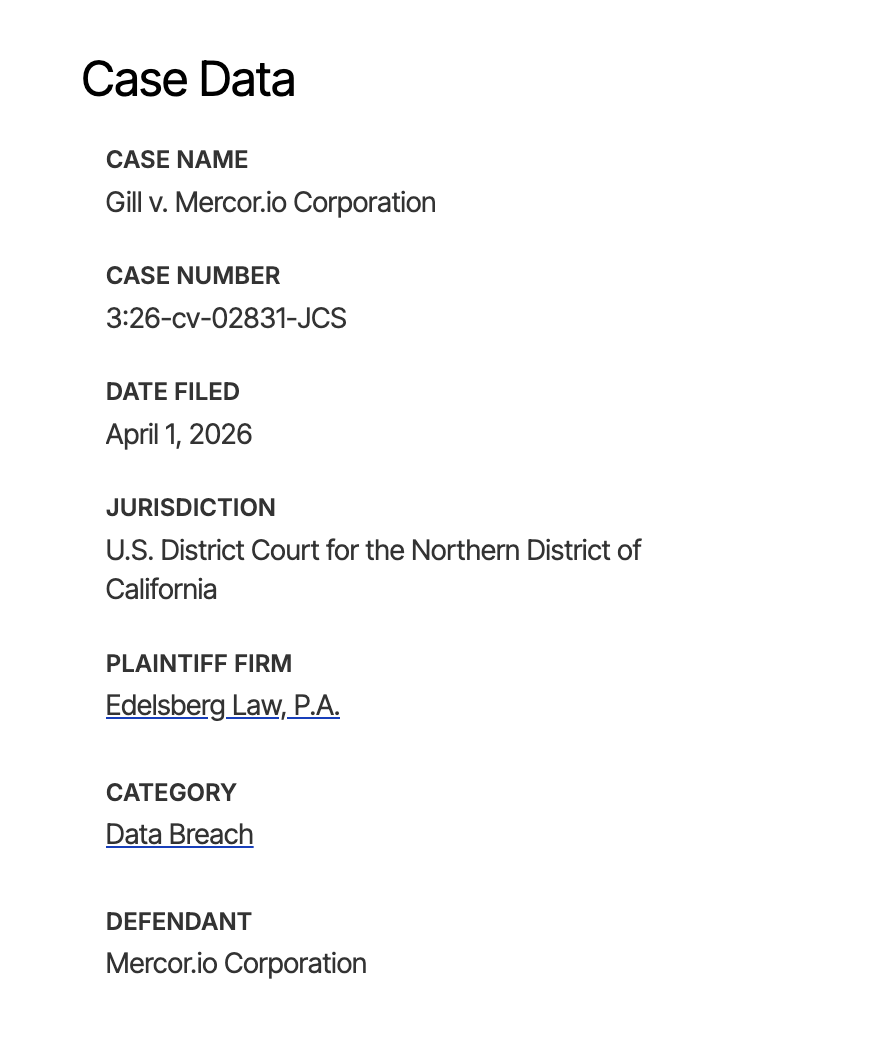

The Class Action: Gill v. Mercor.io Corporation

Plaintiff Lisa Gill filed a class action lawsuit in the U.S. District Court for the Northern District of California on April 1, 2026. The suit claims Mercor failed to maintain basic cybersecurity protections, leaving current and former contract employees and customers vulnerable to identity theft and fraud.

The complaint’s allegations of security failure are sweeping and specific. It claims Mercor did not implement multifactor authentication; failed to encrypt sensitive data during storage and transmission; did not limit which employees or systems could access sensitive personal information; did not monitor its systems for unusual or suspicious activity; and did not rotate passwords regularly to reduce the risk of attackers exploiting old or stolen credentials. If proven, these failures would go beyond negligence: they would represent a systematic disregard for industry-standard controls that the company presumably told clients it had in place.

The lawsuit asks the court to award compensatory damages to cover actual losses, as well as consequential, statutory, nominal and punitive damages. The proposed class covers all U.S. residents whose personal information unauthorized parties accessed or acquired during the breach.

The alleged harms are multiple and layered: the suit claims invasion of privacy, theft of personal information, reduced value of personal data, lost time, and a rise in unwanted spam communications. It also alleges loss of the benefit of the bargain, the legal concept that users gave their data to Mercor with a reasonable expectation of security that the company allegedly did not deliver.

The Contractor Suits

Contractors filed five lawsuits against Mercor in federal courts in California and Texas in the week of April 1–7, 2026, accusing the company of violating data privacy and consumer protection laws.

Among them, a lawsuit filed by NaTivia Esson alleges she worked for Mercor from March 2025 to March 2026 and filled out a W-9 form with her personal identifying information each time she got work. She “trusted the company would use reasonable measures to protect it.” Her complaint states: “Because of the data breach, plaintiff anticipates spending considerable amounts of time and money to try and mitigate her injuries.”

These individual suits are significant beyond their dollar amounts. They establish a concrete factual record of contractor reliance and trust — the kind of record that, in aggregate, strengthens class certification arguments and undermines any defense that harm was speculative or unforeseeable.

The Critical Suit: Naming Delve

The most legally consequential filing in the entire cluster is the one that widens the circle of defendants. One suit against Mercor also names Berrie AI and Delve Technologies, an “automated compliance” firm that had previously certified Berrie’s compliance with certain industry standards, as defendants. That filing seeks to hold LiteLLM, security audit firm Delve, and others liable alongside Mercor.

This filing is the thread that ties the two scandals together into a single legal narrative. If Delve issued a fraudulent SOC 2 certification to LiteLLM or Berrie AI — certifying the existence of security controls that were never implemented — and that fraudulent certification was relied upon by companies like Mercor in their vendor due diligence, then Delve’s liability exposure extends far beyond its direct clients. It potentially encompasses every company whose data was downstream of a Delve-certified vendor.

Part III: The Delve Scandal — Compliance Theater at Scale

The Origin Story

Delve was founded in 2023 by Gen-Z entrepreneurs Karun Kaushik and Selin Kocalar — 21-year-old MIT dropouts, Forbes 30 Under 30 honorees, Y Combinator Winter 2024 graduates. In January 2026, Kocalar told Inc. that Delve had over 1,000 customers in over 50 countries and had helped those clients land “nine-figure deals and federal contracts.” In July 2025, the company raised $32 million at a $300 million valuation led by Insight Partners.

The pitch was seductive: Delve said it leverages AI to automate the process of obtaining security and regulatory certifications, including SOC 2, HIPAA, and GDPR, which cover data security, health information privacy, and European data protection, respectively. It claimed to compress compliance certification timelines from months to days, with pricing in the range of $6,000–$15,000 for bundled SOC 2, ISO 27001, and HIPAA certifications, a fraction of traditional Big Four audit costs.

For cash-strapped startups under pressure to hit compliance checkboxes before enterprise sales conversations, Delve seemed to offer something extraordinary. As it turned out, what it was offering may have been something different altogether.

The Whistleblower Breaks

The story broke in mid-March via an anonymous Substack writer, “DeepDelver,” in a post titled “Delve – Fake Compliance as a Service – Part I,” published around March 18–20, 2026. Key evidence came from a late-2025 data leak: a publicly accessible Google Spreadsheet containing direct links to hundreds of confidential draft audit reports.

The statistical findings from that spreadsheet are damning in their precision. Analysis of 494 SOC 2 reports and 259 Type II reports revealed three patterns. Pre-written conclusions: auditor reports and test results were fully populated before clients submitted their company descriptions, network diagrams, or any evidence, directly violating AICPA independence rules. Identical templates: 493 out of 494 SOC 2 reports (99.8%) used the exact same boilerplate text, including the same grammatical errors and nonsensical descriptions; only the company name, logo, and signature changed. Fabricated evidence: the platform auto-generated “passing” documentation, including board meeting minutes with placeholders, risk assessments pre-filled with 10 default risks, and fake evidence artifacts.
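The 99.8% figure follows directly from the two counts quoted above. A quick check of the arithmetic, with variable names chosen here for illustration:

```python
# Counts quoted from the whistleblower's analysis.
total_reports = 494
identical_reports = 493

# Share of reports that used the identical boilerplate, as a percentage.
share = identical_reports / total_reports * 100
distinct_reports = total_reports - identical_reports

print(f"{share:.1f}% identical, {distinct_reports} report differed")
# prints "99.8% identical, 1 report differed"
```

Put differently, a single report out of 494 departed from the shared template.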

The whistleblower said the platform undermined the core safeguard of SOC 2: independent validation. According to the allegations, audit conclusions were generated before observation periods ended, and controls were marked effective despite missing evidence for access reviews, logging, or incident response testing.

The Auditor Problem

DeepDelver claimed that virtually all of Delve’s clients were routed through two audit firms, Accorp and Gradient, which they described as “part of the same operation,” operating primarily in India with only a nominal presence in the United States — and just rubber-stamping reports generated by Delve.

The architecture is worth understanding in detail. Delve marketed itself as using “US-based auditors.” In practice, the investigation found that 99%+ of client audits were routed through these two firms. The independence and professional licensing questions raised by this arrangement are significant. Under AICPA standards, SOC 2 audits must be conducted by independent Certified Public Accountants. If the auditors were neither independent nor the actual authors of the conclusions attributed to them, the certifications they issued are not merely questionable — they may be void.

DeepDelver said the startup “inverts” the normal compliance structure: “By generating auditor conclusions, test procedures, and final reports before any independent review occurs, Delve places itself in the role of both implementer and examiner.”

The Internal Evidence

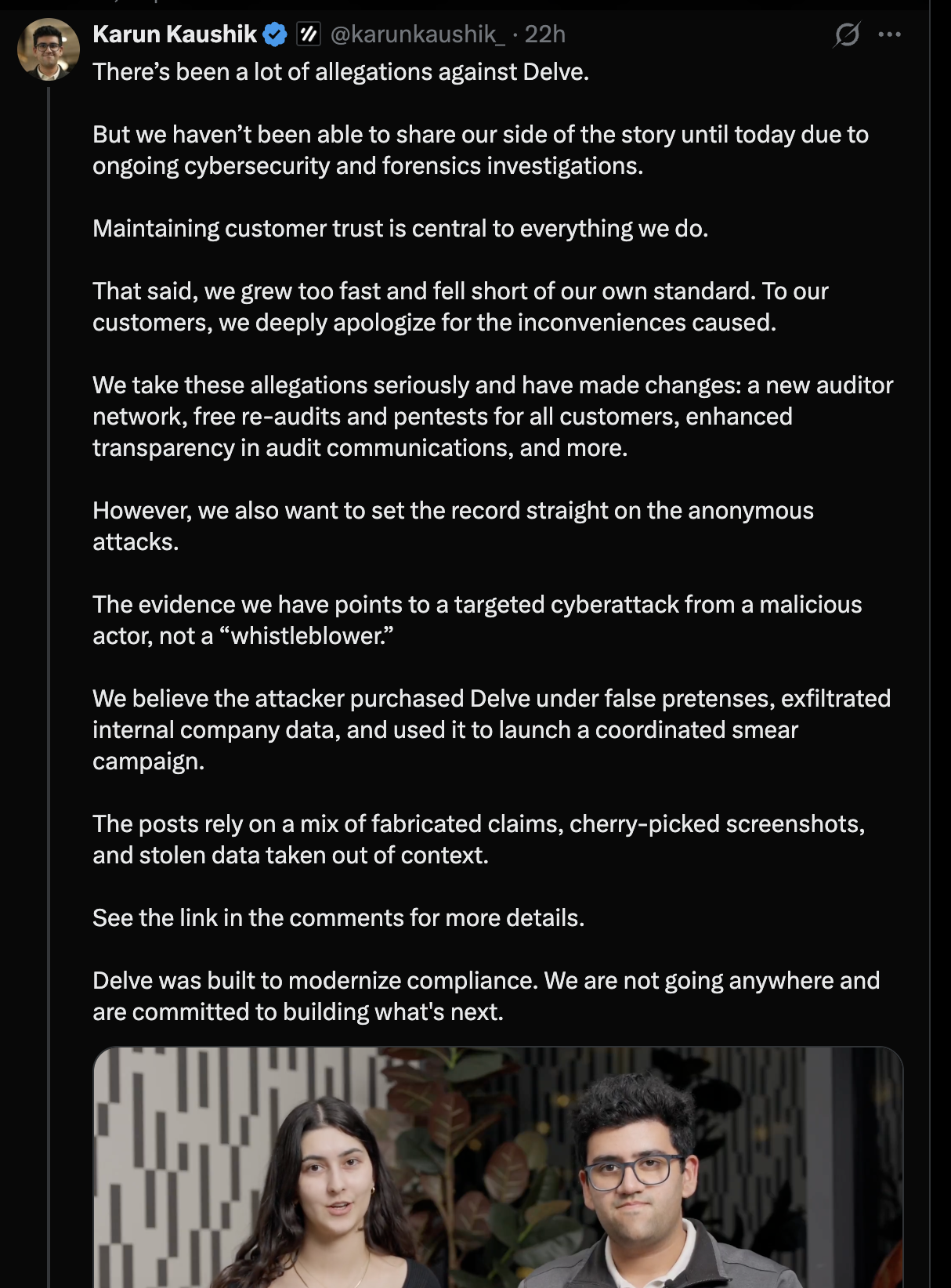

On March 28, 2026, a whistleblower — a Delve employee — delivered to DeepDelver a substantial dump of internal screenshots, videos, and recorded conversations. The most legally significant content is a recorded conversation between CEO Karun Kaushik and an employee named Ross, in which Kaushik explicitly questions whether the audit partner reviewed any evidence — and then expresses comfort with that arrangement.

Internal screenshots show a channel called “Project Audit Automation” with active internal participation. A separate screenshot shows an internal tool described as “Selin’s Report Generator” — named after co-founder Selin Kocalar. This internal evidence directly contradicts Delve’s public defense, which rested entirely on the claim that it was a template provider, not a report generator.

The most damning legal implication: Delve’s own CEO acknowledged in internal documents that the platform could not deliver what the Series A pitch deck claimed — during the period in which that funding was being raised. That timing opens the door to potential securities fraud exposure, not merely consumer protection violations.

The IP Theft Allegation

New reporting from TechCrunch reveals that Delve allegedly sold a no-code tool built on an unlicensed fork of a competitor’s open-source product to a prospect who turned out to be the very whistleblower now bringing the company down. During a sales pitch, Delve demonstrated a no-code agent-building tool it called “Pathways.” The prospect immediately recognized its strong resemblance to Sim.ai’s open-source agent-building product, SimStudio. When asked directly, Delve’s team said they built it themselves. That answer appears to have been incorrect.

The Institutional Collapse

On or around April 3, 2026, Y Combinator removed Delve from its companies directory and asked the founders to leave the program. A leaked internal Bookface chat from YC CEO Garry Tan reportedly stated: “We have asked Delve to leave YC. YC is a community, not just an accelerator. The founders in our community have to trust each other, and we have to trust each other. When that trust breaks down, there’s really only one thing to do.”

Insight Partners quietly scrubbed its investment thesis article — titled “Scaling AI-native compliance: How Delve is saving companies time and money on compliance busywork” — from its website, suggesting that investors may be distancing themselves from the company. Affected companies include well-known AI startups as well as NASDAQ-traded firms, some of which process the protected health information of millions of Americans.

Part IV: The Legal and Regulatory Intersection

What Compliance Professionals Must Understand Now

The Mercor-Delve nexus presents one of the most instructive case studies in recent memory for legal and compliance practitioners. Several critical lessons demand immediate attention.

The Certification Chain of Liability. When a company relies on a vendor’s SOC 2 certification as part of its vendor due diligence, and that certification turns out to be fraudulent, the reliance does not necessarily insulate the company from liability. Courts and regulators will ask: what steps did you take to verify the certification’s authenticity? Were there red flags? Was the price suspiciously low? Was the turnaround suspiciously fast? If a tool promises full SOC 2 in days or weeks with minimal work, failing to demand proof of auditor independence and real testing methodology is a due diligence lapse that companies will struggle to defend.

The Disclosure Clock Is Already Running. Mercor has not reported the supply chain attack to state attorney general offices, which may violate federal or state laws. Under state privacy and breach notification laws, including California’s CCPA, Illinois’ BIPA, and numerous state statutes covering Social Security numbers and biometric data, the obligations to notify affected individuals and regulators are triggered by discovery of a breach, not by a company’s internal assessment of its severity. Mercor’s disclosure gap may represent independent statutory violations layered on top of the underlying breach.

Third-Party Auditor Liability. The suit that names Delve as a defendant introduces a theory that compliance professionals should watch closely: that a GRC platform issuing fraudulent certifications may bear direct legal liability to downstream victims of breaches at companies that relied on those certifications. This theory, if it survives, would fundamentally alter the economics of the compliance automation industry — and the standard of care owed by auditors and their platforms.

The AICPA Exposure. Delve’s conduct, if proven, may also trigger professional disciplinary action against the individual CPAs who signed audit opinions they did not independently generate. The trust page problem — publishing compliance claims about vulnerability scans and penetration tests before that work had actually been performed — is not merely a marketing misrepresentation. It is potentially fraudulent inducement of the enterprise contracts that Delve’s customers used those trust pages to close.

HIPAA and GDPR Exposure Are Real. The scandal potentially exposes Delve’s customers to criminal liability under HIPAA and hefty fines under GDPR. Any company that used a Delve SOC 2 or HIPAA certification to represent its security posture to covered entities, business associates, or European data subjects — and that certification was fraudulent — faces the prospect of regulatory action based on representations that were never true.

Mercor-Delve Compliance Disaster – 5 Class Actions and Growing

What makes the Mercor-Delve story so significant for the legal and compliance community is not that a company was breached, or that a startup allegedly cut corners. Both of those things happen. What makes it significant is the way these two failures interlocked — and the degree to which the entire system of trust that compliance certifications are meant to create was exposed as potentially hollow.

The Mercor breach is not a single-point failure but a systems failure spanning three intersecting domains: open-source software supply chain security, compliance certification integrity, and AI infrastructure governance.

The five lawsuits filed in a single week are the opening act. The regulatory investigations — from state AGs, from the FTC, potentially from the SEC given the investor fraud allegations — are likely to follow. The question of whether Delve’s fraudulent certifications created a foreseeable chain of harm that runs all the way to the 40,000 individuals whose data is now circulating on dark web forums will be litigated for years.

For legal and compliance professionals advising clients in the AI supply chain, the immediate action items are clear: audit every third-party vendor certification your organization relied upon, verify auditor independence directly and in writing, review your breach notification obligations now rather than after regulators come asking, and treat any compliance certification whose provenance you cannot fully verify as no certification at all.

The paper shield has been torn down. What stands behind it is not security — it is liability.