Varonis’ agreement to acquire AllTrue for roughly $125 million in cash is a small deal by headline standards, but it is a clear signal that investors and private equity (PE) firms should not ignore: AI security budgets are consolidating around platforms that can govern agentic access to enterprise data, not just secure endpoints, networks, or prompts.

AllTrue positions itself in the emerging AI trust, risk, and security management (TRiSM) layer: visibility into where AI is used, which models and agents are running, what data they can reach, and how that activity should be monitored and controlled. Varonis, historically a data-centric security vendor, is effectively buying “AI runtime governance telemetry” to sit above its core strength: discovering sensitive data and enforcing least-privilege access around it.

For investors, the key takeaway is not the $125M price tag. It’s the pattern: buyers are paying to close “AI control plane gaps” across data security posture management (DSPM), identity, SASE, and cyber resilience, because AI agents increase the speed and blast radius of mistakes and misuse.

Why Varonis Bought AllTrue: The Agent Problem, Not the Chatbot Problem

Enterprises are moving from “employees use AI tools” to “AI agents do work.” That shift reframes security: every agent becomes a privileged identity that can query systems, pull data, and trigger workflows at machine speed. Traditional controls (IAM reviews, static permissions, periodic audits) are too slow and too human-centric for this model.

AllTrue’s value proposition is squarely in runtime oversight: detect AI usage, log and alert on risky behavior, test AI systems for vulnerabilities like prompt injection/jailbreaks, and generate compliance-oriented reporting. In other words: AI Detection & Response plus guardrails and auditability.

Varonis’ strategic logic is straightforward: it already focuses on the data and permissions substrate. By adding a TRiSM layer, it can monitor when AI tools and agents touch that substrate—and give security teams an actionable “who/what/why” trail when models start behaving like superusers.

The Bigger Story: AI Governance Has Become a Core M&A Motif

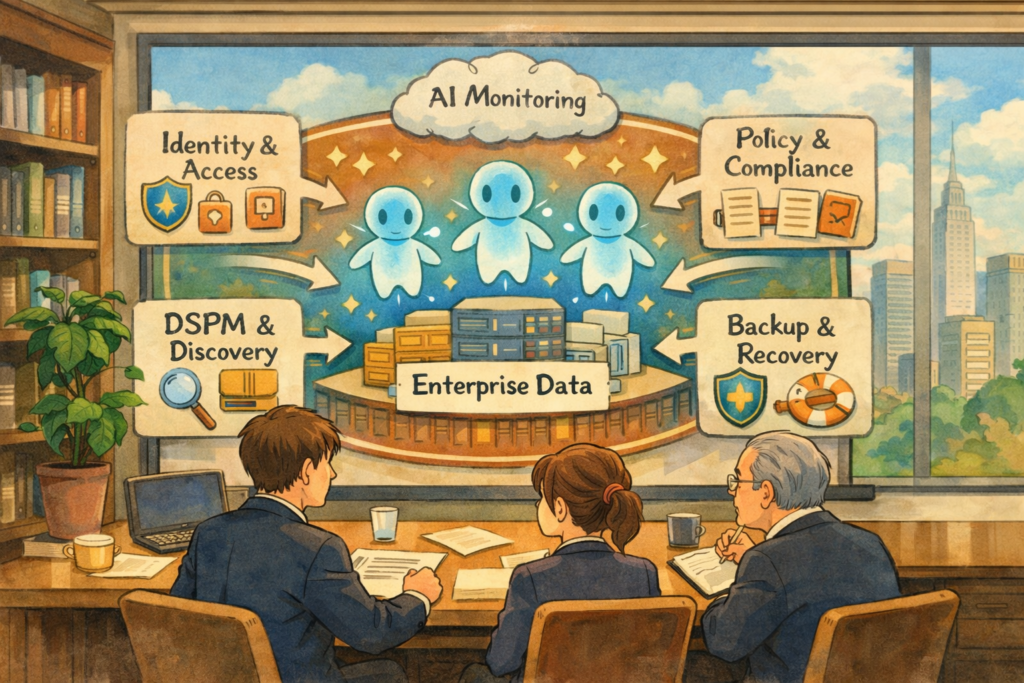

Varonis–AllTrue is best understood as one node in a larger consolidation wave where incumbents are buying adjacent AI security capabilities to assemble an end-to-end posture: discover data → control access → monitor AI use → enforce policy → recover/rollback.

A standout example is Veeam’s $1.725B acquisition of Securiti AI, which explicitly fused data resilience (backup/recovery) with DSPM, privacy/governance, and “AI trust” positioning. That’s a dramatic reframing of what “recovery” means in an AI era: not only restoring files after ransomware, but also restoring and proving the integrity of data feeding AI pipelines.

On the identity side, CrowdStrike’s move to acquire SGNL (announced January 2026) targets continuous, risk-based authorization—granting and revoking access dynamically for humans, non-human identities, and AI agents. The thesis: “standing privilege” doesn’t work when agent workflows spin up and down continuously across SaaS and cloud layers.
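To make "continuous, risk-based authorization" concrete, here is a minimal Python sketch. It is illustrative only and does not describe SGNL's or CrowdStrike's actual product: the `AccessRequest` fields, the sensitivity tiers, the risk thresholds, and the tighter limit for AI agents are all assumptions invented for this example. The point is that access is decided per request from live signals rather than granted once and left standing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    identity: str      # hypothetical ID for a human, service account, or AI agent
    kind: str          # "human", "non_human", or "ai_agent"
    resource: str      # what the caller wants to touch
    sensitivity: int   # 0 (public) through 3 (restricted)
    risk_score: float  # 0.0 (calm) through 1.0 (active incident), from live signals


# Hypothetical thresholds: the maximum live risk tolerated per sensitivity tier.
MAX_RISK_BY_SENSITIVITY = {0: 1.0, 1: 0.7, 2: 0.4, 3: 0.2}


def authorize(req: AccessRequest) -> bool:
    """Decide at request time; nothing is granted ahead of time."""
    limit = MAX_RISK_BY_SENSITIVITY[req.sensitivity]
    # Assumption for illustration: AI agents get half the risk tolerance of humans.
    if req.kind == "ai_agent":
        limit *= 0.5
    return req.risk_score <= limit
```

Under these assumed thresholds, the same agent asking for the same resource is allowed at a risk score of 0.1 and refused at 0.3, with no permission change in between. That is the behavior "standing privilege" cannot provide.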

And at the network/SASE layer, Cato Networks’ acquisition of Aim Security (September 2025) highlights how buyers want AI interaction controls to live where traffic and policy already converge—protecting public/private AI apps and agent workflows at the edge of enterprise access.

What Deals Are Really Buying: A Converging “AI Control Plane”

Across these transactions, the market is converging on a pragmatic truth: AI risk is mostly data + identity + observability, with governance as the glue. That’s why “AI security” M&A rarely looks like one pure-play category; instead it stitches together capabilities that were previously sold as separate tools.

Platform buyers are emphasizing three non-negotiables: (1) real-time visibility into AI usage and data access, (2) policy enforcement at the moment of access (not after), and (3) resilience/rollback when things go wrong—whether the incident is ransomware, data poisoning, leakage, or misconfigured agent permissions.
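The three non-negotiables can be sketched in a few lines of Python. This is a toy model under stated assumptions, not any vendor's implementation: `GuardedStore`, its allow-list, and its snapshot-based rollback are hypothetical names and mechanisms chosen for illustration. Every attempt is logged (visibility), the check runs before data is returned or changed (enforcement at the moment of access), and writes can be undone (rollback).

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class GuardedStore:
    data: dict[str, str]
    allowed: dict[str, set[str]]  # agent ID -> keys that agent may touch
    audit_log: list[tuple[str, str, str, bool]] = field(default_factory=list)
    snapshots: list[dict[str, str]] = field(default_factory=list)

    def _check(self, agent: str, action: str, key: str) -> bool:
        ok = key in self.allowed.get(agent, set())
        # Visibility: record every attempt, allowed or denied.
        self.audit_log.append((agent, action, key, ok))
        return ok

    def read(self, agent: str, key: str) -> str:
        # Enforcement happens before any data leaves the store.
        if not self._check(agent, "read", key):
            raise PermissionError(f"{agent} may not read {key}")
        return self.data[key]

    def write(self, agent: str, key: str, value: str) -> None:
        if not self._check(agent, "write", key):
            raise PermissionError(f"{agent} may not write {key}")
        # Resilience: keep a copy of the prior state so the write can be undone.
        self.snapshots.append(dict(self.data))
        self.data[key] = value

    def rollback(self) -> bool:
        """Restore the state before the most recent write, if there is one."""
        if not self.snapshots:
            return False
        self.data = self.snapshots.pop()
        return True
```

A dashboard-only product corresponds to keeping `audit_log` while dropping the `PermissionError` paths. The difference between the two is the "enforcement at the moment of access, not after" distinction that buyers are paying for.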

One practical implication for investors: the winners are less likely to be “best prompt-filter vendor” and more likely to be companies that attach to core enterprise control points (identity providers, data stores, backup layers, SASE gateways). This is why acquisitions are clustering around DSPM, identity authorization, and runtime enforcement (guardrails).

Two representative transactions show what this implies. F5’s $180M acquisition of CalypsoAI explicitly framed “AI guardrails” as a runtime perimeter that protects models, agents, and their interactions. That is less about training governance and more about operational security for inference and agent execution.

Similarly, Commvault’s acquisition of Satori added structured data controls and LLM monitoring into a resilience platform—another example of resilience vendors moving “up the stack” into AI governance and data access enforcement.

Regulatory Gravity: Why “Governance” Is Now a Buy Box

Even for buyers concentrated in the US, governance demand is driven by a patchwork of requirements and expectations. Voluntary frameworks like NIST’s AI Risk Management Framework provide a common language for risk controls and oversight, while federal policy continues to evolve. This matters because acquirers (and underwriters) increasingly want “audit-ready” artifacts: inventories of AI use, documented controls, monitoring evidence, and incident response hooks.

For investors, governance features show up as reduced friction when selling into regulated sectors (financial services, health, critical infrastructure) and as a hedge against the “unknown unknowns” of AI incidents, especially when agentic workflows blur the line between user action and automated execution. That is why deals keep referencing compliance reporting, policy enforcement, and visibility as primary value drivers.

The M&A Themes Investors Should Underwrite

- DSPM + resilience is becoming a combined thesis: Veeam–Securiti AI shows data recovery vendors moving toward continuous discovery/classification, governance, and AI trust capabilities.

- Identity is expanding to “every agent is privileged”: CrowdStrike–SGNL spotlights continuous authorization as a core requirement for human, non-human, and AI identities.

- SASE/security edge vendors are buying AI interaction controls: Cato–Aim is a blueprint for embedding AI guardrails where access and policy already live.

- Runtime guardrails are entering mainstream platforms: F5–CalypsoAI frames “inference perimeter” protections and guardrails as a standard enterprise requirement.

- TRiSM is getting absorbed into data-centric security: Varonis–AllTrue indicates TRiSM features will increasingly be bundled into broader data security platforms, not bought standalone.

Due Diligence: The Questions That Decide Whether This Category Compounds

In this cycle, investors should pressure-test targets (and roll-up strategies) on product reality, not just “AI governance” language. The best deals will create measurable outcomes: fewer sensitive data exposures, better permission hygiene for agent workflows, faster detection of anomalous AI behavior, and credible incident response/rollback paths.

- Control-point integration: Does the product integrate with real enterprise choke points (IdP/IAM, SaaS authorization layers, data stores, SASE gateways, backup layers), or does it live as a dashboard with limited enforcement?

- Runtime enforcement vs. documentation: Can it block/modify behavior in-line (guardrails, just-in-time access, policy-based controls), or does it primarily produce reports after the fact?

- AI inventory quality: Can it reliably discover where AI is used (agents, models, APIs, shadow AI) and map that usage to identities and data access paths?

- Unit economics and attach motion: Is this sold as a standalone SKU with high churn risk, or does it attach to a platform with durable expansion (identity, data security, resilience)?

- Proof under incident conditions: During a real event (leakage, prompt injection, rogue agent, poisoning), does the tool shorten time-to-detect/contain and improve audit evidence?

- Regulatory readiness outputs: Can the platform generate artifacts aligned to common expectations (e.g., AI risk inventories, monitoring logs, control attestations) so regulated buyers can clear procurement?

Security, Data Governance, and Auditability

In a stack that’s increasingly blending security, data governance, and auditability, Captain Compliance complements these acquisitions as a governance/trust-center/privacy-ops layer—helping organizations operationalize policies, disclosures, and evidence across privacy and AI oversight without replacing the core security control points.

What This Cycle Looks Like in 12–24 Months

Expect more “capability grafting” deals where incumbents acquire small, technically differentiated teams to close AI governance gaps—particularly in (a) continuous authorization for agents, (b) DSPM with real enforcement, and (c) AI runtime guardrails that sit close to production inference. The strategic buyers are building suites that can justify a larger, consolidated platform spend rather than competing for experimental “AI risk” line items.

Varonis–AllTrue is emblematic: Varonis is not buying hype; it is buying the missing layer that turns “data-centric security” into “AI-ready security.” For investors and PE, the opportunity is not merely backing “AI security” as a slogan. It is underwriting products that attach to the enterprise’s most defensible control planes and generate the evidence and enforcement that agentic workflows will demand.